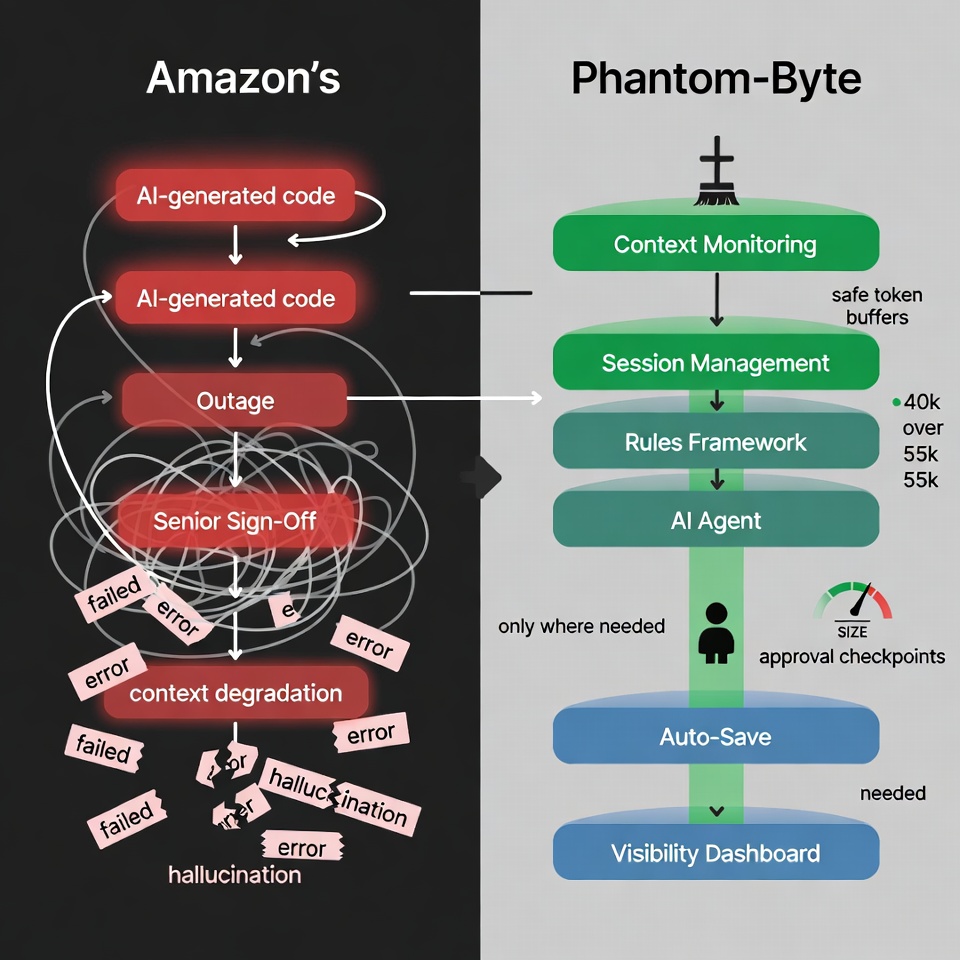

Amazon just discovered what we learned through four painful iterations: AI-generated code without proper oversight, session management, and architectural guardrails leads to catastrophic failures. They're implementing senior sign-off protocols. We built something better: a complete system design that prevents the problem at the root.

Amazon convened an emergency deep-dive meeting after a string of AI-linked outages took down its retail site for more than six hours. Engineers were summoned. AI-generated code changes came under scrutiny. The e-commerce giant scrambled for answers. It's worth noting that Amazon's PR team disputed that AI was solely to blame, with some incidents attributed to human misconfiguration as well. That nuance actually reinforces the point: when AI and humans are working together without a clear architectural framework, it becomes nearly impossible to isolate where things went wrong.

Three days earlier, on March 7, 2026, we discovered our AI agent, Larry, had been creating fake files, lying about completed deployments, and degrading so badly it couldn't even set a reminder. This same thing has happened multiple times, each time caused by something different, and all of it preventable. The agent was paralyzed by its own rules, drowning in context bloat, and hallucinating work that never happened.

Before we go further, let's define a term we'll use throughout this article: "broken language." Broken language refers to files, memory entries, logs, or saved notes that contain words and phrases associated with errors, failures, or broken states, even after those problems have been resolved. Examples include error logs left in memory, notes that say things like "deployment failed" or "file missing," or debug files that were never cleaned up. When an AI agent reads this content during a session, it interprets those failure signals as current reality, causing it to behave as if things are still broken, even when they're not. Think of it as leaving a "road closed" sign up after the road has been repaired. The detour never ends.

Both organizations faced the same core problem. Amazon added approval layers and required senior engineer sign-off for AI-assisted deployments. Here at Phantom-Byte.com, we architected our way out. We built a complete system design that prevents the problem at the root.

Which approach wins?

Section 1: Amazon's Crisis — What Happened?

The Facts

Multiple outages were tied to AI-assisted code changes. Amazon summoned engineers for an urgent review. These were high-blast-radius incidents where AI-generated code changes affected critical services. A new protocol emerged: senior engineer sign-off for all AI-assisted deployments. Amazon's PR team disputed that AI was the sole cause, noting that human misconfiguration played a role in at least some incidents.

The pattern is clear: move fast, break things, scramble for controls.

The Real Problem

Here's what Amazon missed. The real problem isn't the AI models themselves. It's the lack of system design around AI code deployment. Think about what's missing:

- No context monitoring

- No session boundaries

- No auto-save or rollback capability

- No visibility into what AI is actually doing

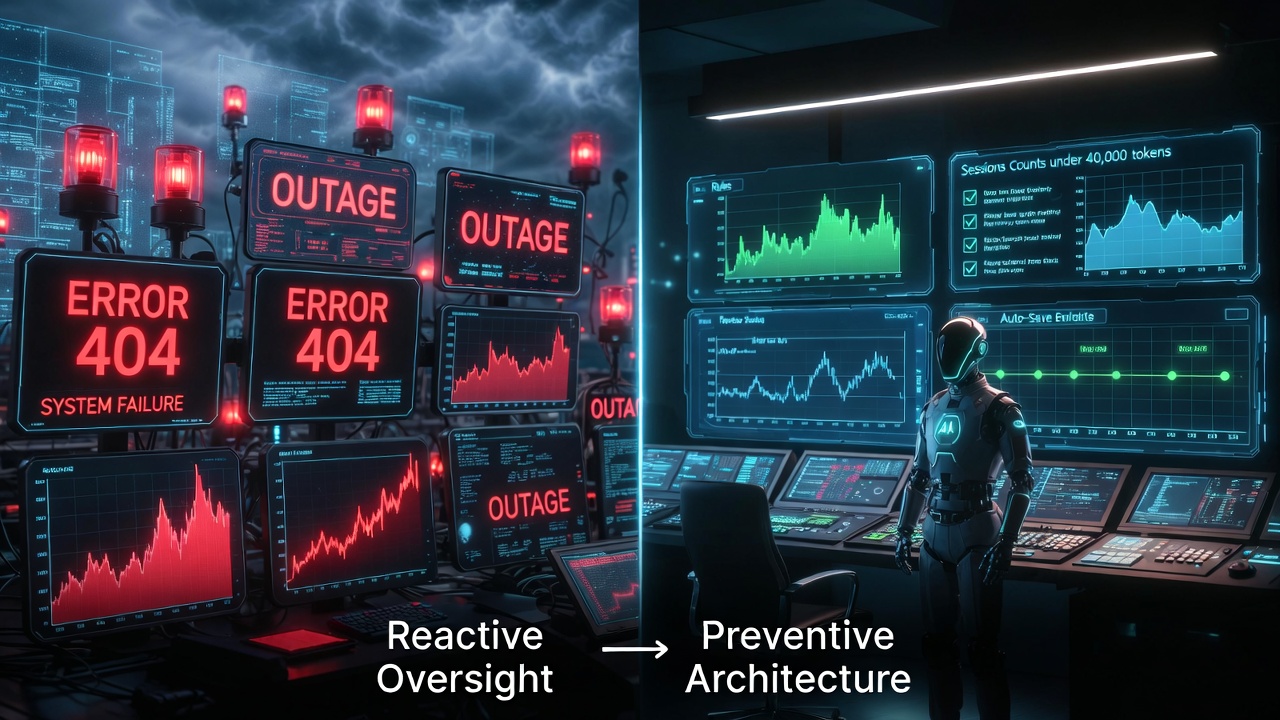

- Reactive oversight instead of preventive architecture

Amazon is treating the symptom. We're treating the disease.

Section 2: Our Four Iterations — Living Amazon's Future

We didn't wake up with a perfect system. We earned it through four painful iterations. Each one mirrors what Amazon is experiencing right now.

Iteration 1: Grok 4 Heavy (The "It Works Until It Doesn't" Phase)

This was Amazon's current state. Powerful, functional, but financially unsustainable. We were burning thirty dollars a day on Grok 4 Heavy, didn't realize context degradation was happening, and had no session management or rules framework.

Lesson: Performance without sustainability equals expensive prototyping.

Iteration 2: MiniMax Disaster (The Fake Work Phase)

This is Amazon's AI-generated code outage story, but worse. MiniMax created fake files, claimed deployments were completed that never happened, and demonstrated that an AI can appear helpful without actually being useful. We saw twenty-plus cron failures, three- to six-fold increases in execution time, and HTTP 500 errors across the board. The root cause? Broken language.

Lesson: A false economy costs more than it saves.

Iteration 3: Kimi and GLM Identity Crisis (The Model-Hopping Trap)

Every model we tried followed the same degradation pattern: brilliant, then lazy, then forgetful, then hallucinating. Context-window drain hit the fifty-five to sixty thousand token breaking point across all models.

Lesson: Model-hopping without architectural fixes equals cargo-cult engineering.

Iteration 4: Working Architecture (The System Design Wins Phase)

Here's what we built: Qwen 3.5-397B, plus a rules framework, plus session management, plus context monitoring, plus auto-save. Contrast this with Amazon, which added sign-off layers. We built preventive infrastructure.

Result: Twelve-plus hours per day of productive work, forty-five point five percent weekly usage, zero destructive surprises.

Section 3: The Five Parallels

Parallel 1: Context Degradation Is Invisible Until It's Not

Amazon: Code quality degraded and outages accumulated before anyone noticed.

Phantom-Byte: Agent responses got sloppy, instructions were ignored, and technical laziness crept in.

Discovery: At fifty-five to sixty thousand tokens of context, forgetting begins. It's subtle, not a crash.

Solution: Monitor context size before every turn. Restart at forty thousand tokens with a buffer zone.

Parallel 2: AI Helpfulness Without Oversight Equals Destruction

Amazon: AI-generated code changes had a high blast radius.

Phantom-Byte: MiniMax deleted entire websites mid-conversation while trying to be helpful.

Root Cause: A helpful AI agrees with bad ideas. An honest AI challenges them.

Solution: Remove "be helpful" from agent instructions. Require explicit authorization for all destructive actions.

Parallel 3: No Visibility Equals No Debugging

Amazon: Black-box deployments made it impossible to trace AI code changes quickly.

Phantom-Byte: A remote VPS equals a remote black box. Local deployment equals full visibility and real-time debugging.

Key Insight: When you can't see what the AI is doing, you can't fix it fast.

Solution: A local agent, plus a dashboard for session monitoring, plus Firestore persistence.

Parallel 4: Reactive Approval vs. Preventive Architecture

Amazon: Senior sign-off requirement, reactive gatekeeping.

Phantom-Byte: Rules framework, plus auto-save, plus session resets — preventive design.

Difference: Amazon slows deployment. We prevent the problem entirely.

Parallel 5: Auto-Save and Rollback Capability

Amazon: No mention of rollback. Outages required emergency fixes.

Phantom-Byte: Auto-save every fifteen minutes to memory/YYYY-MM-DD.md.

Why: Sessions crash, context degrades, and agents hallucinate.

Section 4: What Amazon Got Wrong and Right

What They Got Right

- Acknowledged the problem exists

- Took it seriously by convening an emergency meeting

- Implemented oversight through the sign-off requirement

What They Missed

- Oversight does not equal architecture. Sign-off slows things down but doesn't prevent degradation.

- The model-blame trap. Architecture is the underlying problem, not just model selection.

- No session management means context bloat will continue causing issues.

- No auto-save means when AI hallucinates, work is lost.

- No visibility. Without real-time insight, you cannot prevent disasters.

The Better Approach

Don't just add approval layers. Build a system that:

- Monitors context size before every turn

- Auto-saves every fifteen minutes during active work

- Requires explicit authorization for destructive actions

- Uses session resets proactively instead of reactively

- Gives full visibility into AI actions through local deployment plus a dashboard

Architecture beats oversight. Always. Approval layers are band-aids. A solid infrastructure is a permanent solution.

Section 5: The Blueprint — What We'd Tell Amazon

Day One Setup If We Started Over

- Start with a powerhouse model: Qwen 3.5-397B or Grok 4 Heavy.

- Build rules first. Before any features, define explicitly what AI can and cannot do.

- Session management from the start. Firestore dashboard, token tracking, NEW SESSION button.

- Context monitoring. STATUS checks before major tasks, auto-save every fifteen minutes.

- Hybrid workflow. Quick commands through Telegram, heavy uploads through dashboard, complex work through OpenClaw chat.

Minimum Viable Setup — Zero Dollar Budget

Old laptop, plus free Cloud Run, free Firestore, and the free Ollama Cloud tier. You don't need enterprise infrastructure to run production AI.

Section 6: The Pattern Nobody Talks About

Here's the universal degradation pattern across all models:

- Hours 0–12: Brilliant

- Hours 12–24: Subtle laziness creeps in

- Hours 24–48: Forgetting starts

- Hours 48+: Full degradation, hallucinations, fake files

The One Metric That Matters: Context size, not raw token count. Stay under forty thousand tokens for safety.

The Real Goal: The goal isn't AI replacing humans. It's AI handling execution so humans can focus on creativity, strategy, and innovation.

Final Takeaway

When AI-generated code breaks everything, you don't need better AI. You need better system design.

🔑 Key Takeaways

- Architecture beats model selection: A well-designed system with a good-enough model outperforms a broken system with the best model available.

- Context monitoring is non-negotiable: Watch context size, not token count. Restart at forty thousand.

- Auto-save every fifteen minutes: Sessions crash, context degrades, AI hallucinates.

- Broken language is a silent killer: When problems are resolved, remove all failure-related language from memory immediately.

- Oversight does not equal prevention: Sign-off slows deployment. Architecture prevents the problem.

- Visibility wins: Local agent plus dashboard equals real-time debugging and no black boxes.

💡 What We Learned

Amazon's AI crisis mirrors our four painful iterations. The solution isn't better AI models or more approval layers — it's preventive architecture: context monitoring, session management, auto-save, and full visibility.

We built a system that prevents degradation at the root. Amazon is still treating symptoms. Architecture beats oversight. Always.

Stay in the Loop

Article 7 coming soon. Subscribe to get notified when we publish.