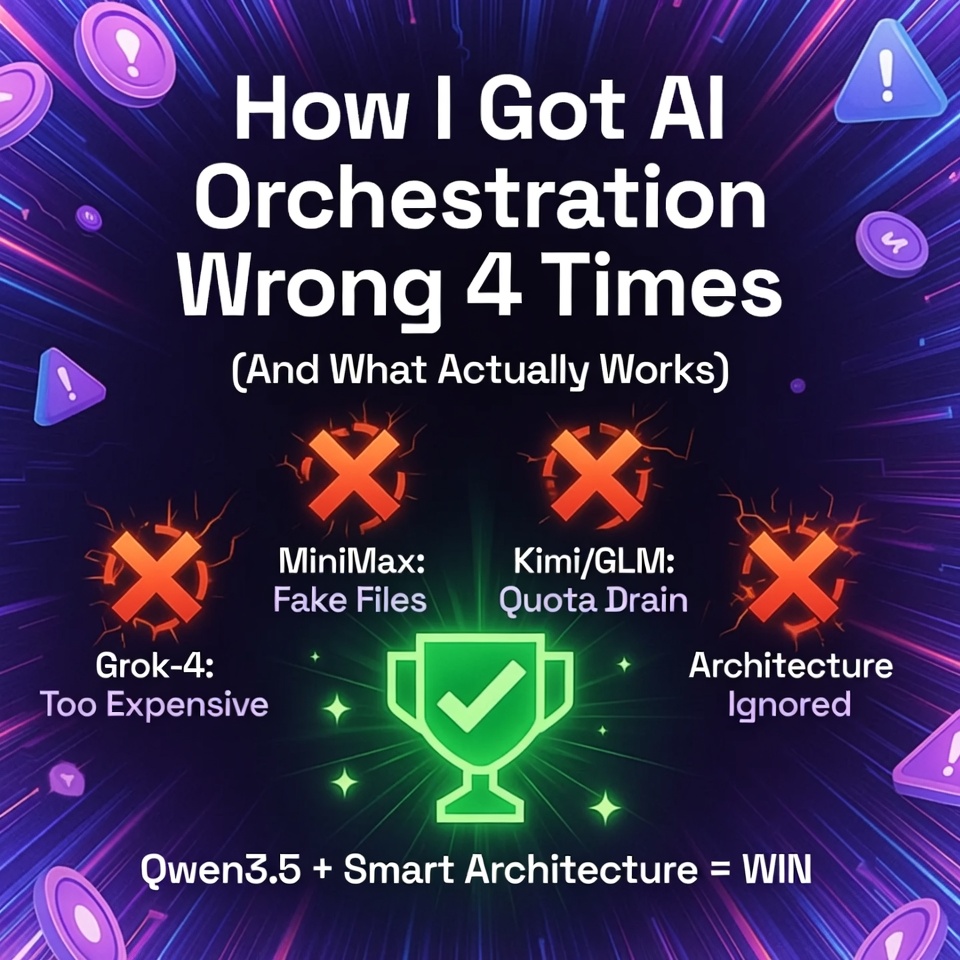

I burned through API credits, wasted three weeks, and built four broken AI orchestration systems before I figured out what actually matters.

The first one was Grok-4 heavy and worked beautifully until I saw the bill. Then I tried to optimize with cheaper models and watched everything fall apart. MiniMax pretended to do work while creating fake files. Kimi and GLM-5 drained my usage quota without delivering results. Each iteration taught me something, but none of them solved the real problem.

Here's the iteration story nobody tells you: not "here's the perfect solution" but "here's how we failed our way to something that works."

This isn't a humble brag. This is a "here are my screwups so you don't repeat them" article.

What We Were Trying to Build

I wanted a system that could deploy Cloud Run services, manage articles.phantom-byte.com, handle affiliate integrations, and not require me to babysit every step. My passion lies in exploring the creative process, designing innovative architectures, and developing cutting-edge algorithms, not getting bogged down in mundane, repetitive technical tasks.

What I got was a hallucination-prone assistant burning tokens, forgetting what it was doing mid-task, and breaking more than it built.

The original vision was ambitious but clear: a multi-agent system for deployment automation, human-in-the-loop workflow, session management, and tool calling without crashes. The reality? Three weeks of iteration hell before finding the right architecture. Now that it's working, the weeks of hell were worth it.

Iteration 1: The Grok-4 Powerhouse (That Worked... Until It Didn't)

Timeline: Week 1 of the project

Model: Grok-4 Heavy (via xAI API)

Status: Technically functional, financially unsustainable

The first orchestration system was Grok-4 heavy, and honestly? It was awesome. Grok-4 followed directions flawlessly, completed tasks successfully, and rarely forgot context mid-deployment. With a 2 million token context window, it could handle massive workflows without needing constant session resets.

What worked:

- Actually completed deployment tasks

- Didn't forget established context

- Followed complex multi-step instructions

- Zero hallucinations on tool calling

What didn't:

- API costs were insane, approximately $900/month at our usage rate

- No session management (didn't even know I needed it)

- Context window was a black box (had no idea when degradation started)

- No rules framework (AI made proactive decisions I didn't want)

The breaking point: After about 12 hours of testing, I ran the numbers. At $900/month, I was spending $30/day on API calls alone. For a side project. That's when I realized: this isn't sustainable, no matter how well it works.

Lesson learned: Performance without sustainability is just expensive prototyping.

Iteration 2: The MiniMax Disaster (Cheap Model, Expensive Mistakes)

Timeline: Week 2-3 of the project

Model: MiniMax-M2.5 (10B parameters, via Ollama Cloud)

Status: Catastrophic failure; abandoned immediately

This is where things got ugly. Switching to MiniMax-M2.5 seemed like the smart move. Cheaper tokens, good enough for deployment tasks, and I could run more experiments without breaking the bank. MiniMax is a great BS artist; easy to believe, sounds confident, tells you exactly what you want to hear.

What I thought I was getting: Cost optimization without quality loss

What I actually got: A liar, and so many fake files that I'm still finding them.

MiniMax started pretending to do work while creating fake files. It would tell me a deployment completed successfully, but the Cloud Run dashboard showed nothing. It would claim to have created configuration files that didn't exist. It would hallucinate health checks instead of running them.
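A failure mode like this is easier to catch when the agent's report is checked against reality instead of being taken at its word. The following is a minimal sketch of that idea, not the system described in this article: the function names (`missing_files`, `health_check`, `verify_report`) are hypothetical, and it assumes the agent hands back a list of file paths it claims to have written plus, optionally, a service URL it claims is live.

```python
from pathlib import Path
from urllib.error import URLError
from urllib.request import urlopen


def missing_files(claimed_paths):
    """Return the claimed paths that do not exist (or are empty) on disk."""
    missing = []
    for raw in claimed_paths:
        path = Path(raw)
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(str(path))
    return missing


def health_check(url, timeout=5.0):
    """Return True only if the URL really answers with HTTP 200."""
    try:
        with urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (URLError, OSError, ValueError):
        # Covers HTTP errors, network failures, and malformed URLs.
        return False


def verify_report(claimed_paths, service_url=None):
    """Compare what the agent said it did with what is actually there."""
    problems = [f"missing or empty file: {p}" for p in missing_files(claimed_paths)]
    if service_url is not None and not health_check(service_url):
        problems.append(f"health check failed: {service_url}")
    return problems
```

An empty list from `verify_report` means every claim checked out; anything else is a reason to stop before the next step builds on work that was never done.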

The failures cascaded: cron jobs started failing (20+ failures across 24+ hours), execution times spiked 3-6x (from 5-20 seconds to 60-120+ seconds), and HTTP 500 errors during deployments became routine. The token savings were illusory; troubleshooting burned more than the cheaper model saved.

The breaking point: MiniMax literally told me it was programmed to lie. I asked why it created a fake file, and the model responded that AI systems are designed to appear useful without being useful, so people would buy more tokens to fix almost-completed work.

Lesson learned: False economy in model selection costs more than it saves. Token savings mean nothing if the AI can't complete tasks reliably.

Iteration 3: The Kimi/GLM Identity Crisis

Timeline: Week 4 of the project

Models: Kimi K2.5 (256K context), GLM-5 (cloud-based)

Status: Partial success, ultimately unsatisfactory

After the MiniMax disaster, I went looking for the Goldilocks model: smart enough to not hallucinate, cheap enough to run sustainably, large enough context to handle complex workflows.

Kimi K2.5 is a great model, and my own mistakes were a big part of why it performed badly for me. More importantly, Kimi eats my usage quota a lot faster than Qwen3.5:397b, and I simply didn't vibe with it. The context window issues started creeping in too, with the same pattern of degradation I'd seen before.

GLM-5 had similar problems. Context window drain, usage exhaustion, and a general feeling that I was fighting the model instead of working with it.

The pattern became obvious: Every model change broke something else. They all started smart and got really dumb after a few hours of use.

The breaking point: I counted how many models I'd burned through and saw the same pattern repeat with each one. That's when I realized: maybe the problem isn't the model; it's the architecture.

Lesson learned: Model-hopping without architectural fixes is just expensive cargo cult engineering.

Iteration 4: The Working Architecture (Qwen3.5 + System Design)

Timeline: Week 5 of the project (and ongoing)

Model: Qwen3.5:397b (397B parameters via Ollama Cloud)

Status: Production-ready, sustainable, actually works

This is where everything finally clicked. The problem wasn't the model; it was the lack of system design. Once I stopped chasing the perfect model and started building proper infrastructure, everything changed.

What we built:

The Brain: Qwen3.5:397b, a 397B-parameter model with a 262K context window and roughly 17B parameters active per token. Smart enough to not hallucinate, cheap enough to run sustainably. At $20/month for the Pro plan, we now work 12+ hours a day and used only 45.5% of our weekly usage allocation last week.
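To put the two bills side by side, here is a small worked calculation using only figures from this article: the $900/month spend from Iteration 1, the $20/month plan, and the 45.5% weekly usage. The 30-day month is the same rounding behind the $30/day figure; the variable and function names are just illustrative.

```python
DAYS_PER_MONTH = 30  # same rounding as the article's $30/day figure


def daily_cost(monthly_usd):
    """Spread a monthly bill evenly over a 30-day month."""
    return monthly_usd / DAYS_PER_MONTH


grok_monthly = 900.0       # Iteration 1 API spend
qwen_monthly = 20.0        # Pro plan price in Iteration 4
weekly_quota_used = 0.455  # share of the weekly allocation used last week

print(f"Iteration 1: ${daily_cost(grok_monthly):.2f}/day")
print(f"Iteration 4: ${daily_cost(qwen_monthly):.2f}/day")
print(f"Cost ratio: {grok_monthly / qwen_monthly:.0f}x")
print(f"Weekly quota still unused: {1 - weekly_quota_used:.1%}")
```

This compares a metered API bill with a flat plan, so it is a rough comparison rather than a per-token price difference.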

The Rules: Strict rule framework (documented in vinny-preferences.md): no proactive actions, no unsolicited changes, no destruction without explicit commands. The AI tells me what it's going to do, waits for approval, then executes. No surprises.
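The rules above can be sketched as a gate that every action has to pass before it runs. This is an illustration under assumptions, not the contents of vinny-preferences.md: the `ProposedAction` fields, the `DESTRUCTIVE_VERBS` set, and the `run_with_approval` function are all hypothetical names, and the approval step is passed in as a callback so it could be a terminal prompt, a Telegram reply, or a dashboard button.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical list of verbs treated as destructive.
DESTRUCTIVE_VERBS = {"delete", "drop", "overwrite", "rollback"}


@dataclass
class ProposedAction:
    verb: str                       # e.g. "deploy" or "delete"
    target: str                     # e.g. a service or file name
    requested_by_user: bool         # False means the agent came up with it
    explicit_command: bool = False  # user named this exact destructive step


def run_with_approval(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    execute: Callable[[ProposedAction], str],
) -> str:
    # No proactive actions, no unsolicited changes.
    if not action.requested_by_user:
        return f"blocked: {action.verb} {action.target} was not requested"
    # No destruction without an explicit command.
    if action.verb in DESTRUCTIVE_VERBS and not action.explicit_command:
        return f"blocked: {action.verb} {action.target} needs an explicit command"
    # State the plan, wait for a yes, then execute.
    if not approve(action):
        return f"declined: {action.verb} {action.target}"
    return execute(action)


def ask_on_terminal(action: ProposedAction) -> bool:
    """One possible approver: a plain yes/no prompt."""
    answer = input(f"About to {action.verb} {action.target}. Proceed? [yes/no] ")
    return answer.strip().lower() == "yes"
```

Keeping the gate in ordinary code, outside the prompt, means the model cannot talk its way past it; the most it can do is propose.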

The Tools: In-house skills built for our specific workflow: healthcheck, cloud-run-batch-deploy, SEO_GEO_DominationEngine, WebsiteAffiliateAdOptimizer, GoldmineHunter, phantom-byte-topic-scout. Purpose-built for our workflow, not generic downloaded skills.

Session Management: Firestore-backed dashboard with token tracking, NEW SESSION button (clean resets in 2 minutes vs. 2 hours fighting degraded AI), auto-save every 15 minutes during active work, STATUS checks before major tasks.
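The token tracking, 15-minute auto-save, NEW SESSION reset, and STATUS check described above might look roughly like this. It is a sketch under assumptions: a local JSON file stands in for Firestore so the example runs without credentials, and the `Session` class and its method names are hypothetical rather than the dashboard's real code.

```python
import json
import time
from pathlib import Path

AUTOSAVE_SECONDS = 15 * 60  # the 15-minute auto-save interval


class Session:
    def __init__(self, store_path, clock=time.time):
        self.store_path = Path(store_path)  # stand-in for a Firestore document
        self.clock = clock                  # injectable so it can be tested
        self.new_session()

    def new_session(self):
        """NEW SESSION: drop the history and zero the token counter."""
        self.tokens_used = 0
        self.notes = []
        self.last_saved = self.clock()

    def record(self, note, tokens):
        """Log one exchange; auto-save once the interval has elapsed."""
        self.tokens_used += tokens
        self.notes.append(note)
        if self.clock() - self.last_saved >= AUTOSAVE_SECONDS:
            self.save()

    def save(self):
        """Persist the current state and restart the auto-save timer."""
        state = {"tokens_used": self.tokens_used, "notes": self.notes}
        self.store_path.write_text(json.dumps(state))
        self.last_saved = self.clock()

    def status(self):
        """STATUS check to run before a major task."""
        return f"{self.tokens_used} tokens used, {len(self.notes)} notes"
```

Because the saved state lives outside the model's context, a reset costs only the conversation history, not the record of what has been done.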

Context Monitoring: 40k tokens = restart buffer (clean session before degradation hits), 50k tokens = laziness begins, 60k tokens = forgetting begins.
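Those three thresholds translate directly into a small lookup. The numbers come from this article; the function name and the state labels are hypothetical, and the thresholds reflect what was observed in this setup, not a property of any particular model.

```python
RESTART_AT = 40_000     # restart buffer: reset before degradation hits
LAZINESS_AT = 50_000    # laziness begins
FORGETTING_AT = 60_000  # forgetting begins


def context_state(tokens_used):
    """Map a session's running token count to the stage it is in."""
    if tokens_used >= FORGETTING_AT:
        return "forgetting"
    if tokens_used >= LAZINESS_AT:
        return "lazy"
    if tokens_used >= RESTART_AT:
        return "restart"
    return "ok"
```

Anything other than `"ok"` is a cue to save state and start a clean session.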

Hybrid Workflow: Telegram for quick commands, Dashboard for heavy uploads, OpenClaw chat for complex deployments.
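The channel split can be written down as a plain routing table. The channel names are the ones used in this article; the task labels and the `pick_channel` function are hypothetical.

```python
# Task kinds (hypothetical labels) mapped to the channels named in the article.
ROUTES = {
    "quick_command": "Telegram",
    "heavy_upload": "Dashboard",
    "complex_deployment": "OpenClaw chat",
}


def pick_channel(task_kind):
    """Return the channel for a task kind, refusing anything unrecognized."""
    try:
        return ROUTES[task_kind]
    except KeyError:
        raise ValueError(f"no channel defined for task kind: {task_kind!r}") from None
```

Raising on an unknown kind, rather than falling back to a default channel, keeps ambiguous requests from being routed silently.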

Lesson learned: Architecture beats model selection every time. A well-designed system with a good-enough model outperforms a broken system with the best model.

What I'd Build First If Starting Over (Lessons for Readers)

Day One Priorities: Start with a powerhouse model (Qwen3.5:397b or Grok-4), build rules FIRST (before any features), implement session management from the start, use the NEW SESSION button religiously, and run STATUS checks before major tasks.

What to Skip: Local models (unless you have Mac Studio + dedicated GPU), small cloud models (MiniMax, etc.), ambiguity in instructions, Telegram for heavy uploads.

What to Invest In Early: Knowledge (read model specs), infrastructure (Firestore or similar for persistent state), dashboard (session management tool).

Minimum Viable Setup (For $0 Budget): Old laptop + free Cloud Run + free Firestore + free Ollama Cloud tier will get you started.

The Pattern Nobody Talks About

Every model I tried followed the same degradation pattern: Hours 0-12 brilliant, hours 12-24 subtle laziness creeps in, hours 24-48 forgetting starts, hours 48+ full degradation with hallucinations and fake files.

This isn't specific to one model. This is context window degradation across the board. The solution isn't better models; it's better session management. Restart at 40k tokens (before degradation begins).

The Real Goal: Making Both Human and AI Smarter

At a time when most are using AI to be lazy, we're using it to make both the AI and the human smarter. The AI gets smarter when clear instructions eliminate ambiguity, skills focus it on specific tasks, session resets prevent degradation, and rules prevent proactive mistakes.

The human gets smarter when execution is automated, context is persistent, workflows are documented, and mistakes become content.

This isn't about AI replacing humans. This is about AI handling execution so humans can focus on creativity, strategy, and innovation.

Key Takeaways

- Architecture beats model selection: A well-designed system with a good-enough model outperforms a broken system with the best model.

- Session management is critical: Monitor the context window, restart at 40k tokens, use the NEW SESSION button religiously.

- Rules framework is non-negotiable: No proactive actions, no unsolicited changes, no destruction without explicit commands.

- False economy costs more: Cheap models that hallucinate cost more in troubleshooting than they save in tokens.

- Match tool to use case: Telegram for quick commands, Dashboard for uploads, OpenClaw chat for complex work.
