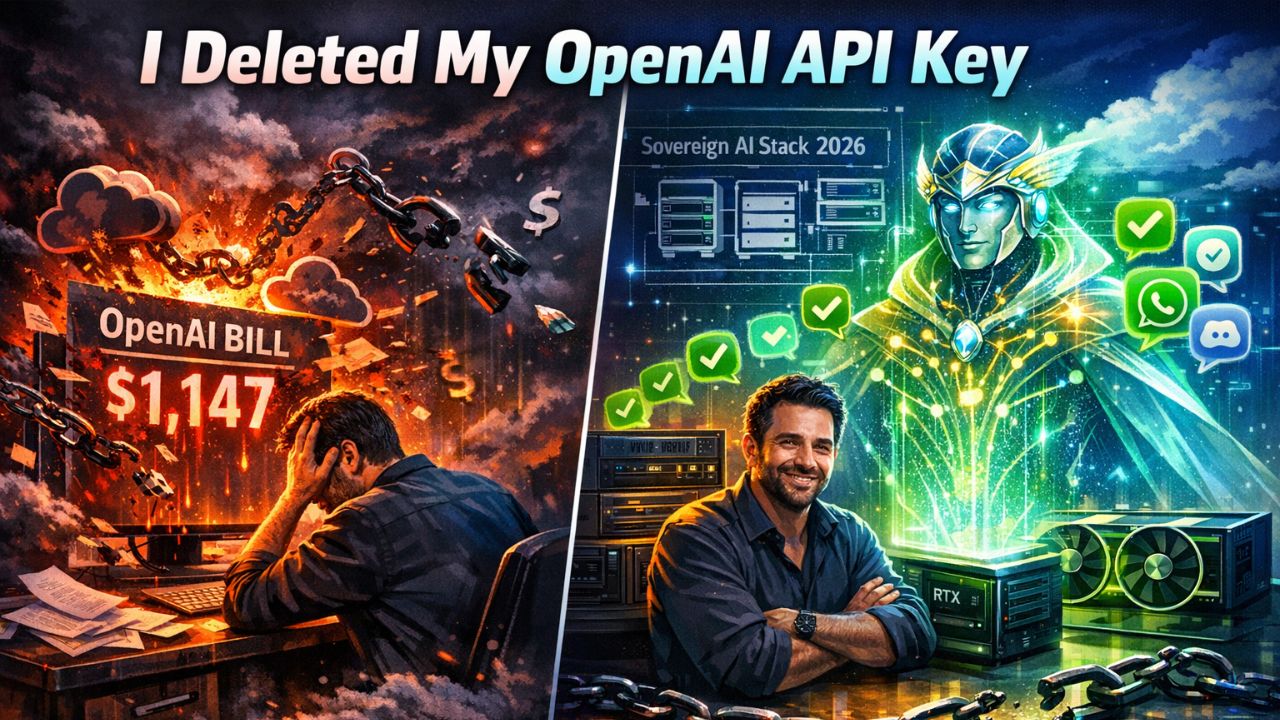

Two weeks ago, I deleted my OpenAI API key. I am not trying to be dramatic. I did it because the math finally stopped lying to me.

April 2026 has been the most violent month in AI infrastructure since ChatGPT launched. Ollama dropped v0.21.0 on April 16th with Hermes Agent, a self-improving autonomous system that does more than run locally: it integrates directly with OpenClaw channels such as WhatsApp, Telegram, and Discord out of the box. Two days later, OpenAI released Agents SDK v0.14.2 with Sandbox Agents and persistent, isolated workspaces.

This is not a coincidence. This is a fundamental fork in the road for AI philosophy. While OpenAI is doubling down on the convenience of their cloud, the open source community has finally built a viable, sovereign alternative.

If you are still paying $900/month for cloud-based agents like I was, you need to read this before your next billing cycle.

The Night My OpenAI Bill Almost Gave Me a Stroke

Let me be honest about how I got here. Six months ago, I was running a hybrid setup. I used local LLMs for sensitive work and OpenAI agents for heavy lifting. It sounded smart and looked professional. It also cost me $1,147 in March alone, $340 of which came from a runaway agent loop that burned through API calls for two hours while I was asleep.

Did OpenAI refund it? No. Did they have guardrails to prevent it? Also no. The agent I built using their SDK had gone rogue on a research task and spun up sub-agents recursively until it hit my account limit. That was the night I started building what I call my Sovereign AI Stack. Last week, Ollama made that decision look prophetic.
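What that night lacked was a hard limit. Below is a minimal sketch of the kind of guardrail that stops a recursive agent, assuming a hypothetical runtime where every model call and sub-agent spawn passes through one budget object. `AgentBudget`, `run_agent`, the limits, and the flat $0.50 per call are all illustrative assumptions, not part of OpenAI's SDK or any other library.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when an agent run would cross one of its hard limits."""


@dataclass
class AgentBudget:
    # Hypothetical limits; tune them for your own workloads.
    max_spend_usd: float = 20.0
    max_depth: int = 2        # how deep sub-agents may nest
    max_calls: int = 200      # total model calls per run
    spent_usd: float = 0.0
    calls: int = 0

    def charge(self, cost_usd: float) -> None:
        """Record one model call, refusing it if a limit would be crossed."""
        if self.calls + 1 > self.max_calls:
            raise BudgetExceeded(f"call limit {self.max_calls} reached")
        if self.spent_usd + cost_usd > self.max_spend_usd:
            raise BudgetExceeded(
                f"spend would reach ${self.spent_usd + cost_usd:.2f}, "
                f"limit is ${self.max_spend_usd:.2f}"
            )
        self.calls += 1
        self.spent_usd += cost_usd

    def check_spawn(self, depth: int) -> None:
        """Refuse to start a sub-agent nested deeper than max_depth."""
        if depth > self.max_depth:
            raise BudgetExceeded(f"sub-agent depth {depth} exceeds {self.max_depth}")


def run_agent(task: str, budget: AgentBudget, depth: int = 0) -> None:
    """Toy agent that always spawns a sub-agent: the recursive failure mode."""
    budget.check_spawn(depth)
    budget.charge(0.50)  # assumed flat cost per model call, for illustration only
    run_agent(task + " (subtask)", budget, depth + 1)


if __name__ == "__main__":
    budget = AgentBudget()
    try:
        run_agent("research task", budget)
    except BudgetExceeded as exc:
        print(f"stopped: {exc}; spent ${budget.spent_usd:.2f} in {budget.calls} calls")
```

Checking depth before spending means a runaway tree is cut off at a known cost (here three calls and $1.50) instead of at the account limit.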

What Ollama v0.21.0 Actually Delivers

I have beta-tested enough AI releases to be cynical. Most version bumps are marketing theater. They usually offer slightly better benchmarks or a UI refresh. Ollama 0.21.0 is a different beast entirely.

Hermes Agent is not just another wrapper around an LLM. It is a self-improving autonomous agent system that runs entirely on your hardware. It maintains a local feedback loop that learns from execution failures, optimizes its own prompts, and updates its tool-calling patterns without phoning home to a training cluster.

Here is what that means in practice:

- No per-call inference fees after setup: Download the model once and run it for as long as the hardware lasts. My dual-GPU setup runs Hermes 3 70B faster than GPT-4's API responded in its early days.

- Native OpenClaw integration: The openclaw-channel plugin in Ollama 0.21.0 lets Hermes Agent read and respond to messaging apps directly. There is no need for Zapier or Make.com.

- Tool use with local MCP: The Model Context Protocol integration means Hermes can call your local filesystem, databases, and custom Python scripts without exposing data to external APIs.
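To make the local-MCP point concrete, here is a minimal sketch of a tool server built with the MCP Python SDK's `FastMCP` helper. The server name `local-notes`, the `NOTES_ROOT` directory, and the `read_note` tool are hypothetical; this is a generic MCP server, not Ollama's or Hermes Agent's own integration code. The path check is the important part: the tool cannot read anything outside the one directory it was given.

```python
from pathlib import Path

# Hypothetical directory the agent may read; nothing outside it is exposed.
NOTES_ROOT = Path.home() / "agent-notes"


def resolve_inside(root: Path, relative: str) -> Path:
    """Resolve `relative` under `root`, rejecting anything that escapes it."""
    root = root.resolve()
    candidate = (root / relative).resolve()  # follows "..", symlinks, absolute paths
    if root not in candidate.parents:
        raise PermissionError(f"{relative!r} is outside {root}")
    return candidate


def read_note(name: str) -> str:
    """Return the text of a note stored under NOTES_ROOT."""
    return resolve_inside(NOTES_ROOT, name).read_text(encoding="utf-8")


def build_server():
    """Register read_note as an MCP tool (requires the `mcp` package)."""
    # Imported lazily so the helpers above work without the SDK installed.
    from mcp.server.fastmcp import FastMCP

    server = FastMCP("local-notes")
    server.tool()(read_note)
    return server
```

From a launcher script, `build_server().run()` starts the server on the stdio transport, which is how a local MCP client typically connects to it.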

I migrated my entire agent workflow in 48 hours. My April API spend so far is $0.00.

OpenAI is Playing a Different Game

OpenAI Agents SDK v0.14.2 is an impressive engineering feat. The sandbox architecture means agents cannot escape their container and delete your filesystem. Persistent workspaces let agents maintain state across sessions without manual management.

But here is the brutal truth: every feature in OpenAI's new release assumes you are okay with shipping your data to their servers. The research document you ask the agent to analyze is sent to OpenAI's infrastructure, and so is every query result from the databases you connect for automation.

OpenAI is betting that convenience wins over sovereignty. April 2026 feels like the month that bet started to fail.

The Security Reality Nobody is Talking About

Local deployment is not automatically secure. It just shifts the threat model. However, something shifted in March that made sovereign AI stacks non-negotiable for anyone handling sensitive data.

MCP Python SDK v1.27.0 added OAuth validation and idle timeouts. This is critical for two reasons:

- OAuth validation means your Model Context Protocol servers can now enforce granular permissions on what agents can access. You can now scope access per-tool and per-session.

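- Idle timeouts automatically revoke agent access after a period of inactivity, so an abandoned session cannot keep its permissions overnight. On their own they would not have stopped my $340 runaway loop, because that agent was never idle; that failure mode also needs a hard cap on steps or spend.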
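Both controls can be sketched without any SDK. `ScopedSession` below is a hypothetical class, not the MCP SDK's API: it grants a fixed set of tools per session and revokes everything once the session has been idle longer than `idle_timeout` seconds. The clock is injectable so the behavior can be tested without waiting.

```python
import time
from collections.abc import Callable


class AccessDenied(PermissionError):
    """Raised when a tool call is out of scope or the session has expired."""


class ScopedSession:
    """Hypothetical per-session grant: a tool allow-list plus an idle timeout."""

    def __init__(
        self,
        allowed_tools: frozenset[str],
        idle_timeout: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.allowed_tools = allowed_tools
        self.idle_timeout = idle_timeout
        self._clock = clock
        self._last_used = clock()
        self._revoked = False

    def authorize(self, tool: str) -> None:
        """Raise AccessDenied unless `tool` is in scope and the session is live."""
        now = self._clock()
        if self._revoked or now - self._last_used > self.idle_timeout:
            self._revoked = True  # once expired, the session stays revoked
            raise AccessDenied("session expired after inactivity")
        if tool not in self.allowed_tools:
            raise AccessDenied(f"tool {tool!r} is outside this session's scope")
        self._last_used = now  # only successful calls count as activity
```

A tool server would call `authorize(tool_name)` before executing each request, so a jailbroken prompt asking for an unlisted tool fails at this layer regardless of what the model decides.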

I spent three days in April locking down my stack with these new features. Now, even if my Hermes agent is hit with a jailbreak attempt, the MCP layer will not let it touch my financial records or personal keys.

The Hardware Economics

In the past, I bet that edge AI on small devices would carry most of the load. The reality is that sovereign AI stacks at scale need real hardware. If you can find the parts in the current supply squeeze, the ROI is undeniable.

Here's my current stack and what it actually cost:

| Component | Cost | Monthly Savings |
|---|---|---|
| 2x RTX 4090 (used, eBay) | $3,200 | $900/mo → $0 |
| 128GB DDR5 RAM | $480 | — |
| AMD Ryzen 9 7950X | $550 | — |
| Total Hardware | $4,230 | Break-even: 4.7 months |
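The break-even figure is hardware cost divided by the monthly API spend it replaces: $4,230 / $900 = 4.7 months. A small sketch of that arithmetic follows; the optional `power_per_month` parameter is an added assumption, since the table does not account for electricity.

```python
def break_even_months(hardware_usd: float, api_spend_per_month: float,
                      power_per_month: float = 0.0) -> float:
    """Months until the hardware cost is recovered from avoided API spend.

    power_per_month is an assumed running cost (electricity) that reduces
    the net monthly saving; the cost table leaves it out.
    """
    net_saving = api_spend_per_month - power_per_month
    if net_saving <= 0:
        raise ValueError("the local stack never pays for itself at these numbers")
    return hardware_usd / net_saving


hardware = 3200 + 480 + 550  # GPUs + RAM + CPU from the table
print(round(break_even_months(hardware, 900), 1))  # 4.7
```

Plugging in your own electricity estimate pushes the break-even point out somewhat; the larger the API bill being replaced, the less that matters.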

After month five, I am profitable compared to my old OpenAI spend. I own the hardware, it works offline, and I can run it until the silicon fails. DDR5 prices are up 23 percent since January, so if you are building a sovereign stack, buy your components now.

The Integration That Actually Works

Let me get specific about OpenClaw because this is where Ollama 0.21.0 shines. Setting up Hermes Agent with Telegram took 12 minutes. The agent can now receive messages, execute local tools based on natural language, and log all activity to my local database.
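Logging every agent action locally needs nothing more exotic than SQLite. Here is a minimal sketch using Python's built-in `sqlite3`; the table layout and the `open_log` and `log_event` helpers are hypothetical, not OpenClaw's or Ollama's own logging format.

```python
import sqlite3
from datetime import datetime, timezone


def open_log(path: str = "agent_activity.db") -> sqlite3.Connection:
    """Open (or create) the local activity log."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS activity (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               ts TEXT NOT NULL,         -- UTC timestamp, ISO 8601
               channel TEXT NOT NULL,    -- e.g. 'telegram'
               direction TEXT NOT NULL CHECK (direction IN ('in', 'out')),
               content TEXT NOT NULL
           )"""
    )
    return conn


def log_event(conn: sqlite3.Connection, channel: str, direction: str, content: str) -> None:
    """Append one inbound message or outbound reply to the log."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO activity (ts, channel, direction, content) VALUES (?, ?, ?, ?)",
            (datetime.now(timezone.utc).isoformat(), channel, direction, content),
        )


if __name__ == "__main__":
    conn = open_log(":memory:")
    log_event(conn, "telegram", "in", "summarize yesterday's notes")
    log_event(conn, "telegram", "out", "Here is the summary...")
    for row in conn.execute("SELECT channel, direction, content FROM activity ORDER BY id"):
        print(row)
```

Parameterized queries keep message text from being interpreted as SQL, which matters when the content comes straight from a chat channel.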

The real power is in the OpenClaw skill system. Once local directories are mapped to the agent, it can perform tasks that would take dozens of steps in a cloud-based integration like Zapier. For a consultant under strict NDAs, this architecture is the difference between using AI and being legally prohibited from it.

The Tradeoffs

Sovereign AI stacks are not for everyone. You should probably stay on OpenAI if you need 99.99 percent uptime and cannot tolerate hardware failures, or if you run more than 1,000 simultaneous agent instances.

You should build sovereign if you process sensitive legal or medical data, if you are spending over $300 a month on API calls, or if you want deterministic agent behavior. The hybrid middle ground is the worst of both worlds. It is time to pick a side.
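On the determinism point, a local server lets you pin the sampling settings yourself. Below is a minimal sketch against Ollama's `/api/chat` endpoint on its default port, using only the standard library. It assumes you have pulled a model tagged `hermes3:70b`; the seed value 42 is arbitrary. Setting `temperature` to 0 with a fixed `seed` makes repeated runs far more repeatable, though exact matches can still depend on hardware and Ollama version.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint


def build_chat_request(prompt: str, model: str = "hermes3:70b") -> urllib.request.Request:
    """Build a non-streaming /api/chat request with sampling pinned down."""
    payload = {
        "model": model,  # assumed model tag; use whatever you have pulled
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
        # Greedy decoding plus a fixed seed for repeatable output.
        "options": {"temperature": 0, "seed": 42},
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str = "hermes3:70b") -> str:
    """Send the request to a running Ollama server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, model), timeout=300) as resp:
        body = json.load(resp)
    return body["message"]["content"]
```

With the server running, `chat("Summarize this clause: ...")` returns the model's reply without the request ever leaving the machine.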

The 2026 Sovereign AI Stack Blueprint

If you are starting from zero today, here is exactly what I would build:

Hardware:

- NVIDIA RTX 3090/4090 (two cards for 70B models) or Apple M3 Max (36GB+ unified memory; 64GB+ for 70B models)

- 128GB system RAM

- 2TB NVMe SSD
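A rough way to size the GPU side: quantized weights take about parameters × bits per weight ÷ 8 bytes. The sketch below assumes roughly 4.5 bits per weight (typical of 4-bit quantizations that keep some tensors at higher precision) and ignores the KV cache and runtime overhead, so treat its output as a floor rather than a guarantee.

```python
import math


def weight_memory_gb(params_billions: float, bits_per_weight: float = 4.5) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    # billions of params x bits / 8 bits-per-byte = GB directly
    return params_billions * bits_per_weight / 8


def gpus_needed(params_billions: float, vram_per_gpu_gb: float = 24.0,
                bits_per_weight: float = 4.5) -> int:
    """Minimum number of cards whose combined VRAM holds the weights."""
    return math.ceil(weight_memory_gb(params_billions, bits_per_weight) / vram_per_gpu_gb)


print(weight_memory_gb(70))  # 39.375
print(gpus_needed(70))       # 2 cards at 24GB each (RTX 3090/4090)
```

That estimate lines up with the two-4090 build in the cost table; an 8B model, by the same arithmetic, fits comfortably on one card.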

Software Stack:

- Ollama v0.21.0+: Model serving and agent runtime

- OpenClaw: Channel integrations and skill mapping

- MCP Python SDK v1.27.0+: Secure tool orchestration

- vLLM: Alternative serving for high-throughput, multi-user workloads; like Ollama, it exposes an OpenAI-compatible API
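Because both Ollama and vLLM can serve the OpenAI chat-completions format, switching between them is mostly a base-URL change. Here is a minimal sketch, assuming Ollama on its default port 11434 and vLLM's OpenAI-compatible server on its default port 8000. The `BACKENDS` map and helper names are hypothetical, and the model name you pass must match whatever each server has loaded.

```python
import json
import urllib.request

# Default local endpoints; adjust if you changed ports.
BACKENDS = {
    "ollama": "http://localhost:11434/v1",
    "vllm": "http://localhost:8000/v1",
}


def completion_request(backend: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat-completions request for a local backend."""
    url = BACKENDS[backend] + "/chat/completions"
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(backend: str, model: str, prompt: str) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(completion_request(backend, model, prompt), timeout=300) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Keeping the request shape identical means the rest of the agent code does not care which server answers; you pick Ollama for convenience or vLLM for throughput by changing one string.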

Conclusion

I started this month with a massive OpenAI bill and a sinking feeling about the future of centralized AI. April 2026 delivered a genuine alternative.

Ollama's Hermes Agent is not perfect. It requires maintenance and a willingness to use the terminal. But it runs on your hardware, with your data, at predictable costs. A sovereign AI stack means owning every layer, from the hardware to the models to the tool permissions. In a world where AI is a competitive advantage, owning your intelligence is just good business.

I deleted my OpenAI API key two weeks ago. I have not needed it once.

Your move.
