I have a confession.

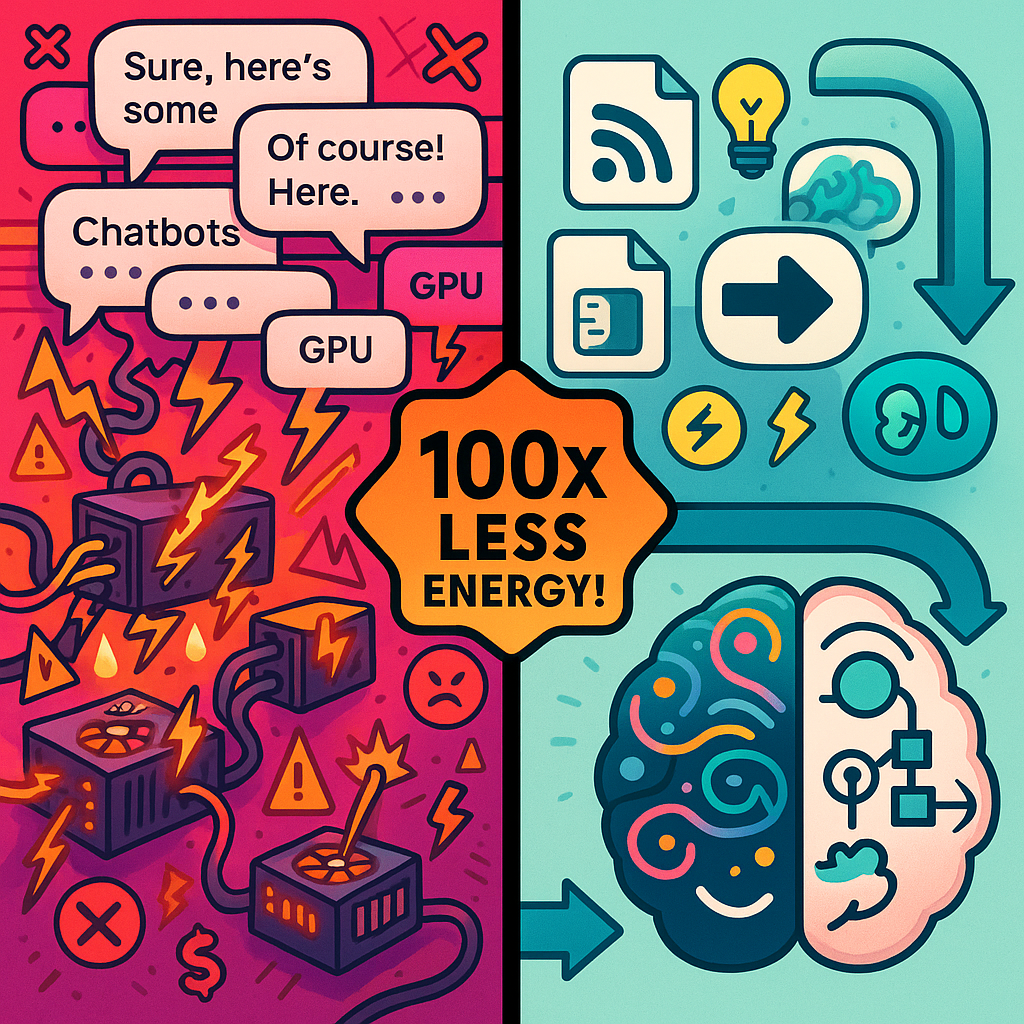

A few months ago, I was building AI agents the same way everyone else does. I threw cloud API calls at every problem like they were unlimited. I let my agents burn through tokens on tasks a simple Python script could handle in milliseconds. I built orchestration systems that were essentially chatbots talking to chatbots, each exchange burning GPU time on work that never needed a model at all.

I did not care about energy efficiency. I cared about shipping.

Then my cloud bill hit $847 in a single month for one agent pipeline, and I had to sit down and actually think about what I was doing. Not because I suddenly became an environmentalist. Because I was running out of money.

That desperation led me to a discovery. The AI industry's obsession with "bigger is better" is not just expensive. It is about to hit a wall that makes the slowdown of Moore's Law look like a speed bump. And a research team at Tufts University just showed there may be a way around that wall.

The Wall Nobody Wants to Talk About

Here is the number that should terrify every AI founder: 183 terawatt-hours.

That is how much electricity U.S. data centers consumed in 2024, according to the International Energy Agency. That is more than 4% of the entire country's electricity consumption. And here is what is worse: it is expected to more than double by 2030.

To put that in perspective: when you use Google, the AI summary at the top of your search results can consume up to 100 times more energy than generating the regular website listings. You wanted a quick answer? You just paid for it in electricity, many times over.

The industry response has been predictable: throw money at the problem. Venture capitalists have dumped over half a trillion dollars into AI startups over the last five years. But increasingly, the smart money is realizing that bigger models and more GPUs are not a sustainable answer to the energy crunch.

A report by Sightline Climate dropped a bombshell: up to 50% of announced data center projects face delays. Why? They cannot get enough power. Of the 190 gigawatts of data center capacity being tracked, only 5 gigawatts are actually under construction. About 36% of projects saw their timelines slip in 2025 alone.

The supply chain for AI infrastructure is choking. Not on chips, not on cooling systems, but on electrons.

The $500 Billion Band-Aid

Then came the news that confirmed everything.

OpenAI, SoftBank, and Oracle announced Project Stargate: a $500 billion AI infrastructure investment that includes plans for small modular reactors and dedicated nuclear energy sources to feed the beast. The industry's response to the energy wall? Not efficiency. More power.

This is not criticism. It is math.

When your business model depends on training models that consume more electricity than small countries, you build nuclear reactors. You do not wait for someone to invent a better algorithm. You pour concrete and hope the NRC moves fast.

But here is what the press releases will not say. Stargate is an admission of failure. It is the industry conceding that current AI architectures are too inefficient to scale on existing grid power. It is betting that brute force can outrun physics if you just add enough atoms.

It might work. The Stargate partners have the balance sheets to find out.

But while they are breaking ground on reactors that will not come online for years, a team at Tufts University showed something that should terrify anyone who just committed five hundred billion dollars to infrastructure.

The Pick-and-Shovel Play Everyone Missed

Here is where it gets interesting for anyone playing the AI investment game or building a sustainable AI business.

While everyone obsesses over foundation models and chatbot interfaces, the real opportunity might be in the energy technology powering these systems. Tech giants like Google, Meta, and Amazon are pouring billions into solar, wind, and nuclear projects. They are backing experimental battery technology like Form Energy's 100-hour storage systems. They are negotiating entirely new rate structures with utilities.

Goldman Sachs predicts AI will drive data center power consumption up 165% by 2030. That is not a trend line. That is a cliff.

The Trump administration is reportedly pressuring tech companies to build their own power sources or cover grid upgrades to prevent rate hikes, and Trump has said that if they produce surplus energy, they could sell it. Most had already planned to build their own power anyway. When your business depends on electricity that badly, you cannot afford to wait for the grid to catch up.

But here's what nobody is saying: building more power plants is Plan B. In my opinion, you clearly need both more energy and more efficient models, but the real innovation opportunity is making AI require less power in the first place.

The 100x Breakthrough Nobody Is Talking About

That brings us to Tufts University, and the research that might change everything.

A team led by Professor Matthias Scheutz at the Tufts School of Engineering has developed a proof-of-concept AI system that needs as little as 1% of the energy of current approaches to train, while producing more accurate results. Yes, you read that right: 100 times less training energy, better accuracy.

The secret? They combined conventional neural networks with symbolic reasoning, creating what is called "neuro-symbolic AI." Instead of brute-forcing problems with massive deep learning models, they layered symbolic logic on top, similar to how humans break down tasks into steps and categories.
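Here is what the symbolic half looks like in practice. Once perception has turned a scene into discrete facts ("disks 3, 2, 1 are on peg A"), planning Tower of Hanoi needs no learning at all. The Python below is the textbook recursive planner. It illustrates symbolic step-by-step planning and is not the Tufts team's code. The peg names and the `plan_hanoi` function are hypothetical.

```python
def plan_hanoi(n: int, source: str = "A", target: str = "C", spare: str = "B") -> list[tuple[str, str]]:
    """Return the exact move sequence that transfers n disks from source to target."""
    if n == 0:
        return []
    # Break the task into steps: park the n-1 smaller disks on the spare peg,
    # move the largest disk, then restack the smaller disks on top of it.
    return (
        plan_hanoi(n - 1, source, spare, target)
        + [(source, target)]
        + plan_hanoi(n - 1, spare, target, source)
    )


moves = plan_hanoi(3)
print(len(moves))  # 7, i.e. 2**3 - 1
print(moves[:3])   # [('A', 'C'), ('A', 'B'), ('C', 'B')]
```

The plan is exact for any number of disks, and producing it involves no training run and no GPU. In a hybrid system, the neural network's job shrinks to perception: turning camera pixels into those discrete facts.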

The results are not theoretical. They are brutal:

- 95% success rate on Tower of Hanoi puzzles, compared to 34% for standard vision-language-action (VLA) models

- 78% success rate on complex, unseen puzzles where conventional VLAs scored 0%

- Training time: 34 minutes vs. 1.5+ days for conventional approaches

- Training energy: 1% of what VLA models require

- Runtime energy: 5% of VLA consumption
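It helps to be precise about which number is which. The quick back-of-the-envelope check below, in Python, converts the reported figures into multipliers. The "100x" is the training-energy figure. Runtime savings come out to 20x. Training is roughly 64x faster if "1.5+ days" is taken at its 1.5-day lower bound.

```python
# Figures as reported for the Tufts proof of concept vs. a conventional VLA baseline.
training_minutes_neurosymbolic = 34
training_minutes_vla = 1.5 * 24 * 60   # "1.5+ days", taken at the 1.5-day lower bound
training_energy_fraction = 0.01        # 1% of VLA training energy
runtime_energy_fraction = 0.05         # 5% of VLA runtime energy

# Speedup is a floor, because the baseline took *at least* 1.5 days.
print(f"Training speedup: {training_minutes_vla / training_minutes_neurosymbolic:.0f}x")  # 64x
print(f"Training energy reduction: {1 / training_energy_fraction:.0f}x")                    # 100x
print(f"Runtime energy reduction: {1 / runtime_energy_fraction:.0f}x")                      # 20x
```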

This is not a marginal improvement. This is a different universe.

The research will be formally presented at the IEEE International Conference on Robotics and Automation (ICRA) in Vienna this June, but the implications are already clear. The AI industry has been optimizing for the wrong thing. We have been chasing scale when we should have been chasing efficiency.

How I Accidentally Stumbled Into the Same Philosophy

Here's the embarrassing part: I didn't read about Tufts and have some big revelation. I backed into this approach because I was bad at budgeting, and because a terrible JSON mistake made me select the wrong AI model without realizing it.

When that $847 bill hit, I started asking questions I should have asked before:

Why am I paying for an AI API call to scrape a website when Requests + BeautifulSoup does it in 12 milliseconds? RSS feeds are also a great tool to have in your arsenal. Using AI APIs just to scrape means you're paying a model to process garbage data.
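The sketch below shows the no-model alternative in Python, using only the standard library. `xml.etree.ElementTree` reads an RSS 2.0 feed, and `html.parser` pulls plain text out of each item's HTML description. It stands in for Requests + BeautifulSoup so it runs without extra installs. The feed is a made-up sample; in practice you would fetch it over HTTP first. The function names are hypothetical.

```python
import xml.etree.ElementTree as ET
from html.parser import HTMLParser

# Made-up sample feed; descriptions carry escaped HTML, as many real feeds do.
SAMPLE_RSS = """<?xml version="1.0"?>
<rss version="2.0"><channel>
  <title>Example Feed</title>
  <item><title>Neuro-symbolic robots</title><link>https://example.com/a</link>
        <description>&lt;p&gt;Tufts team cuts &lt;b&gt;training energy&lt;/b&gt;.&lt;/p&gt;</description></item>
  <item><title>GPU prices</title><link>https://example.com/b</link>
        <description>&lt;p&gt;Another update.&lt;/p&gt;</description></item>
</channel></rss>"""


class TextExtractor(HTMLParser):
    """Collect the text nodes of an HTML fragment, dropping the tags."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []

    def handle_data(self, data: str) -> None:
        self.parts.append(data)


def html_to_text(fragment: str) -> str:
    parser = TextExtractor()
    parser.feed(fragment)
    parser.close()  # flush any buffered text
    return "".join(parser.parts).strip()


def parse_rss(xml_text: str) -> list[dict[str, str]]:
    """Return title, link, and plain-text summary for each <item> in an RSS 2.0 feed."""
    root = ET.fromstring(xml_text)
    items = []
    for item in root.iter("item"):
        items.append({
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            "summary": html_to_text(item.findtext("description", default="")),
        })
    return items


for entry in parse_rss(SAMPLE_RSS):
    print(entry)
```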

Why is my agent writing its own summaries when I could use a simple regex pattern to extract the key sentence?
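Here is a minimal Python sketch of regex-based extraction. It splits text into sentences with a simple pattern, then returns the first sentence that mentions any keyword, falling back to the lead sentence. The sentence-splitting rule is a deliberately naive assumption: it will trip on abbreviations like "Dr.". That is usually acceptable for triage. `key_sentence` is a hypothetical name.

```python
import re

# Naive sentence boundary: ".", "!" or "?" followed by whitespace.
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


def key_sentence(text: str, keywords: list[str]) -> str:
    """Return the first sentence containing any keyword, else the first sentence."""
    sentences = [s.strip() for s in SENTENCE_SPLIT.split(text.strip()) if s.strip()]
    if not sentences:
        return ""
    if not keywords:
        return sentences[0]
    # Whole-word, case-insensitive match on any of the escaped keywords.
    pattern = re.compile(r"\b(?:" + "|".join(map(re.escape, keywords)) + r")\b", re.IGNORECASE)
    for sentence in sentences:
        if pattern.search(sentence):
            return sentence
    return sentences[0]


article = (
    "Data centers used 183 TWh in 2024. "
    "Tufts reports a neuro-symbolic system that needed 1% of the training energy. "
    "More details in June."
)
print(key_sentence(article, ["energy"]))
# Tufts reports a neuro-symbolic system that needed 1% of the training energy.
```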

Why am I chaining three GPT calls when one well-structured prompt would do?
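One way to collapse a chain of calls is to ask for every output in a single prompt with a fixed JSON shape, then validate the reply locally. The Python sketch below does that. The field names, the prompt wording, and both functions are hypothetical, and the model call itself is left out. `parse_reply` is the part plain code can own.

```python
import json

FIELDS = ("headline", "summary", "tags")  # hypothetical outputs that used to take three calls


def build_prompt(article: str) -> str:
    """One prompt that asks for all outputs at once, as strict JSON."""
    return (
        "Read the article below and reply with only a JSON object with keys "
        f"{', '.join(FIELDS)}: a headline (string), a two-sentence summary (string), "
        "and up to five tags (list of strings).\n\n"
        f"ARTICLE:\n{article}"
    )


def parse_reply(reply: str) -> dict:
    """Validate the model's reply in code instead of spending another call on it."""
    data = json.loads(reply)  # raises json.JSONDecodeError (a ValueError) on malformed output
    missing = [field for field in FIELDS if field not in data]
    if missing:
        raise ValueError(f"reply is missing fields: {missing}")
    tags = data["tags"]
    if not isinstance(tags, list) or len(tags) > 5 or not all(isinstance(t, str) for t in tags):
        raise ValueError("tags must be a list of at most five strings")
    return data
```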

I started replacing AI with code. Not everywhere, not blindly, but strategically. I built a topic scout system that uses scripts to fetch RSS feeds, parse HTML, and filter results before any AI ever touches the content. I built orchestration that reserves AI calls for actual reasoning tasks, not data retrieval.
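The ordering matters: cheap deterministic filters run first, and only the survivors reach a model. The Python sketch below filters by keyword match and deduplicates by link. `call_model` is a hypothetical placeholder for whatever LLM client you use. It is stubbed here so the example runs. The keywords and sample items are illustrative.

```python
from typing import Callable

KEYWORDS = ("agent", "neuro-symbolic", "energy")  # illustrative topic filter


def prefilter(items: list[dict[str, str]], seen_links: set[str]) -> list[dict[str, str]]:
    """Drop duplicates and off-topic items with plain code; no model involved."""
    kept = []
    for item in items:
        text = (item["title"] + " " + item["summary"]).lower()
        if item["link"] in seen_links:
            continue
        if not any(keyword in text for keyword in KEYWORDS):
            continue
        seen_links.add(item["link"])
        kept.append(item)
    return kept


def scout(items: list[dict[str, str]], seen_links: set[str],
          call_model: Callable[[str], str]) -> list[str]:
    """Spend model calls only on items that survived the filter."""
    return [call_model(f"Is this worth an article? {item['title']}")
            for item in prefilter(items, seen_links)]


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call; in production each call costs tokens.
    return "yes"


items = [
    {"title": "Agent costs explained", "link": "https://example.com/1", "summary": "Token budgets."},
    {"title": "Agent costs explained", "link": "https://example.com/1", "summary": "Token budgets."},
    {"title": "Celebrity news", "link": "https://example.com/2", "summary": "Nothing relevant."},
]
print(scout(items, set(), fake_model))  # ['yes']: one model call instead of three
```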

The result? This week, we have used 26.9% of our weekly AI budget. And we have shipped more content than when we were burning 80%.

This is not virtue signaling. This is not "green AI" for moral points. This is realizing that if Tufts is right about 100x efficiency gains, the companies still burning billions of tokens on tasks Python can handle are throwing money into a fire that is about to consume them.

Why Neuro-Symbolic AI Changes the Game

The Tufts breakthrough matters beyond the energy savings. It tackles the fundamental failure mode of current AI systems: they are black boxes that hallucinate.

Here is how Professor Scheutz's team explains the problem. When a conventional VLA robot tries to stack blocks, it scans the scene, identifies objects, interprets instructions, and acts. It often fails: shadows confuse it, blocks get misidentified, towers tip over because the model lacks spatial reasoning.

These failures are not bugs. They are features of an architecture that learns patterns but cannot reason about them. The Tufts team added symbolic reasoning on top, allowing the system to break down tasks, categorize objects, and plan steps the way a human would.
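A symbolic rule can rule out the "towers tip over" failure entirely. The Python validator below checks each move a learned policy proposes against the Tower of Hanoi rules before anything is executed. It is an illustration of symbolic validation, not the Tufts architecture. The state representation, the function names, and the proposed moves are assumptions.

```python
State = dict[str, list[int]]  # peg name -> disk sizes, bottom to top


def is_legal(state: State, move: tuple[str, str]) -> bool:
    """Legal if the source peg has a disk and it is smaller than the target's top disk."""
    source, target = move
    if not state[source]:
        return False
    disk = state[source][-1]
    return not state[target] or disk < state[target][-1]


def apply_checked(state: State, proposed: list[tuple[str, str]]) -> tuple[State, list[tuple[str, str]]]:
    """Execute legal moves on a copy of the state and collect the illegal ones."""
    state = {peg: disks[:] for peg, disks in state.items()}
    rejected = []
    for move in proposed:
        if is_legal(state, move):
            source, target = move
            state[target].append(state[source].pop())
        else:
            rejected.append(move)
    return state, rejected


start = {"A": [3, 2, 1], "B": [], "C": []}
# Suppose a neural policy proposed these; the second would put disk 2 on top of disk 1.
state, rejected = apply_checked(start, [("A", "C"), ("A", "C"), ("A", "B")])
print(state, rejected)  # {'A': [3], 'B': [2], 'C': [1]} [('A', 'C')]
```

This sketch simply skips a rejected move. A real system would more likely hand the rejection back to a planner and replan from the current state.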

The result is not just more efficient. It is more reliable. And in an era where AI agents are being trusted with real money, real decisions, and real consequences, reliability matters more than scale.

What This Means for Your AI Strategy

If you are building with AI, here are the uncomfortable questions you should be asking:

Are you using AI for everything, or for the right things?

The Tufts approach suggests a hybrid future. Neural networks for pattern recognition. Symbolic systems for reasoning and validation. Your AI agents should be surgeons with lasers, not bulls in china shops.

Are you measuring cost per task or just total spend?

That $847 bill taught me to track tokens per article, per scrape, per decision. When you see the numbers, the inefficiencies become obvious. We now run topic scouting for pennies per source instead of dollars. It also changed how I tell my agent to fetch data: building the right scripts up front can save you a lot of money.
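Here is a minimal Python sketch of per-task cost tracking. The per-million-token prices are placeholder assumptions, not any provider's real rates. Substitute your own, and feed the tracker the token counts your API responses report. `CostTracker` is a hypothetical name.

```python
from collections import defaultdict

# Placeholder prices in USD per million tokens; substitute your provider's actual rates.
PRICE_PER_MTOK = {"input": 3.00, "output": 15.00}


class CostTracker:
    """Accumulate token usage and dollar cost per task label (article, scrape, decision...)."""

    def __init__(self) -> None:
        self.tokens = defaultdict(lambda: {"input": 0, "output": 0})
        self.calls = defaultdict(int)

    def record(self, task: str, input_tokens: int, output_tokens: int) -> None:
        self.tokens[task]["input"] += input_tokens
        self.tokens[task]["output"] += output_tokens
        self.calls[task] += 1

    def cost(self, task: str) -> float:
        # .get avoids creating an empty entry for an unknown task.
        used = self.tokens.get(task, {"input": 0, "output": 0})
        return sum(used[kind] * PRICE_PER_MTOK[kind] / 1_000_000 for kind in used)

    def report(self) -> dict[str, dict[str, float]]:
        return {
            task: {
                "calls": self.calls[task],
                "total_usd": round(self.cost(task), 4),
                "usd_per_call": round(self.cost(task) / self.calls[task], 4),
            }
            for task in self.tokens
        }


tracker = CostTracker()
tracker.record("scrape", input_tokens=12_000, output_tokens=500)
tracker.record("scrape", input_tokens=10_000, output_tokens=400)
tracker.record("article", input_tokens=4_000, output_tokens=2_000)
print(tracker.report())
```

Splitting spend by task label shows directly which step is worth replacing with a script.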

Are you building for the current reality or the coming energy crisis?

Data center power costs are going nowhere but up. While the Stargate partners bet $500 billion on massive infrastructure, the grid is not getting cheaper. The companies that figure out efficiency now will be the ones that survive when electricity becomes the bottleneck instead of GPUs.

Are you waiting for someone else to solve the problem?

Google can afford to build nuclear reactors next to their data centers. OpenAI, SoftBank, and Oracle can afford Project Stargate. You probably cannot. The advantage goes to builders who can do more with less.

The Brutal Truth

I built inefficient AI systems because it was easier. Because everyone else was doing it. Because the cloud providers wanted me to keep burning tokens. And I was comparing my spend to everyone else's.

The Tufts research shows there is a better way. Not a way that is coming someday: the principle works now, with numbers that do not lie.

100 times less energy. Better accuracy. Faster training.

The AI industry has been building a monster. Ever-larger models consuming ever-more power to solve problems that often do not require brute force at all.

Turns out the solution was not more power. It was better thinking.

The question is not whether neuro-symbolic AI will become mainstream. The question is whether you will still be burning tokens on brute-force solutions when it does.

FAQ: Neuro-Symbolic AI and Energy Efficiency

What is neuro-symbolic AI?

Neuro-symbolic AI combines neural networks (for pattern recognition) with symbolic reasoning (for logic and planning). This hybrid approach can solve problems more efficiently than either approach alone.

How much energy do AI models actually use?

Large language models and vision-language-action systems consume significant power. The IEA estimates U.S. data centers used 183 TWh in 2024, more than 4% of the country's total electricity. It projects that figure will more than double by 2030. A single AI search query can use up to 100x more energy than a standard web search.

Can I implement neuro-symbolic AI in my own projects?

The Tufts research is proof-of-concept, not production-ready. However, the principle applies now. Reserve AI calls for reasoning tasks. Use traditional code for data retrieval and processing.

Is energy efficiency just about cost savings?

Cost savings are the immediate benefit. Long-term, energy constraints may limit AI availability. Efficient systems will have competitive advantages as power becomes scarcer.

Why are data centers running out of power?

The surge in AI infrastructure has outpaced grid capacity. Of 190 GW of tracked data center capacity, only 5 GW is under construction, and about 36% of projects saw their timelines slip in 2025.

What is Project Stargate?

Project Stargate is a $500 billion AI infrastructure joint venture led by OpenAI, SoftBank, and Oracle, announced in January 2025. It includes plans for small modular reactors and other dedicated energy sources to power next-generation data centers. It represents the industry's bet on solving the AI energy crisis with more power generation rather than efficiency improvements.

Get More Articles Like This

Getting your AI agent setup right is just the start. I'm documenting every mistake, fix, and lesson learned as I build PhantomByte.

Subscribe to receive updates when we publish new content. No spam, just real lessons from the trenches.