You know what hits different? The headline on the New Republic's latest piece: "The AI Industry Is Discovering That the Public Hates It." The public is not just skeptical or holding reservations. They hate it.

The industry's response has been to double down on centralization. Anthropic locked into a $100 billion deal with Amazon. OpenAI is launching GPT-5.5 alongside a bio bug bounty that rewards people for finding biosafety vulnerabilities, in the wake of a Florida criminal probe into the company. The disconnect between what the industry is building and what the public will tolerate has become a chasm.

Meanwhile, r/LocalLLaMA, the 700K-strong subreddit that represents the actual AI builder community, just updated its rules to crack down on AI-generated slop. The irony is not lost on me. The people who run models locally are so sick of low-effort AI content that they are implementing minimum karma requirements to filter it out.

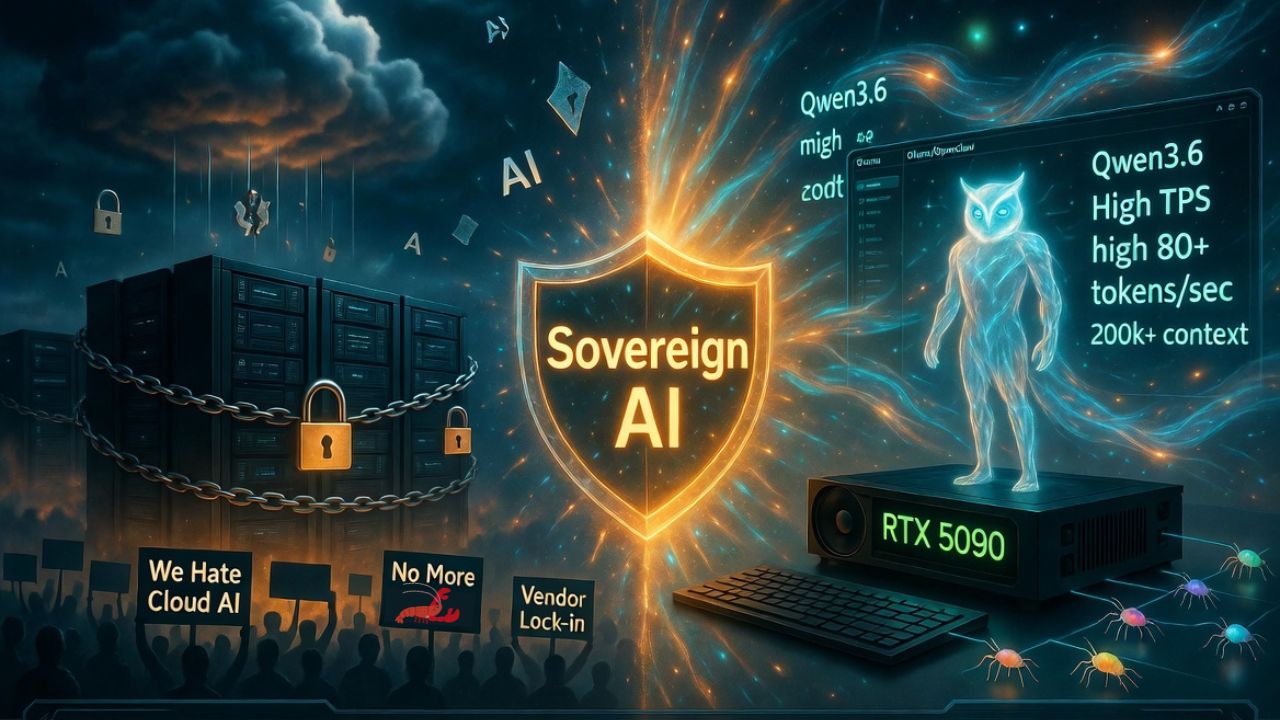

The thesis is clear: centralization is breeding distrust, and the builder community is already voting with its infrastructure choices. Reports from the last 48 hours show the sovereign stack has arrived.

Qwen3.6-27B quantized to IQ4_XS is running 100k context on a single 16GB GPU at 21 tokens per second. vLLM 0.19 with NVFP4 quantization is pushing 80 tokens per second on a single RTX 5090 with 218k context. A developer on 4x RTX 6000 Pro cards is getting 40+ tokens per second of generation and 2,000+ prompt tokens per second, noting the experience is "pretty close to Sonnet plus Claude Code."

These are not benchmarks from a lab. These are Reddit posts from people running production workloads on hardware they own. The sovereign stack is not theoretical. It is shipping today on consumer and workstation GPUs.

The Nous Research Signal: Why Hermes Agent Matters Right Now

Nous Research just announced an AMA on r/LocalLLaMA scheduled for April 29. The team behind Hermes Agent, the open-source model that powers many local agent capabilities, is going to sit in the trenches and answer questions from the self-hosting community.

This is not a coincidence. Hermes Agent represents everything the centralized AI providers do not want you to have: a model you can run on your own hardware, fine-tune to your own specifications, and audit down to the weight level.

When tools like Ollama and OpenClaw integrated Hermes Agent support, it was not just a feature release. It was a declaration that the sovereign AI stack is production-ready.

The AMA comes at a critical moment. The Florida criminal probe into OpenAI has developers reassessing their cloud dependency. Nous Research's open-source approach is the antidote. When you can get cloud-grade model quality on your own hardware, the risk calculus shifts completely.

The Four Pillars of Sovereign AI Infrastructure

Building a sovereign AI stack is not about being anti-cloud. It is about being pro-control. Here is what the architecture looks like in practice.

1. Local Model Orchestration: Ollama and OpenClaw

Local model management has become standard practice for a reason: you can pull models, manage versions, and switch between quantized variants entirely on hardware you control.

Ollama remains a popular choice for straightforward model management, while OpenClaw has emerged as a significant alternative for developers who want a local-first agentic framework. Both platforms now support the Hermes Agent integration, so you are not sacrificing capability for sovereignty.
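If you go the Ollama route, everything runs through a local HTTP server. Here is a minimal sketch using only the Python standard library, assuming Ollama's default address (`http://localhost:11434`), its `/api/generate` and `/api/tags` endpoints, and a model tag such as `hermes3` that you have already pulled. The tag is only an example; swap in whatever `ollama list` shows on your machine.

```python
import json
import urllib.request
from typing import List

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address (assumption: unchanged)


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming request for the local /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


def list_local_models() -> List[str]:
    """Return the names of models already pulled to this machine."""
    with urllib.request.urlopen(f"{OLLAMA_URL}/api/tags", timeout=10) as resp:
        return [m["name"] for m in json.loads(resp.read())["models"]]
```

With the server running, `generate("hermes3", "...")` returns the completion as a string. Turning streaming off trades token-by-token output for a single JSON response, which keeps the sketch short; nothing in it leaves your machine.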

The performance data from this week alone is staggering:

- Qwen3.6-27B (IQ4_XS): 100k context on a single 16GB GPU at 21 tokens per second.

- vLLM 0.19 (NVFP4): 80 tokens per second on a single RTX 5090 with 218k context.

- RTX 6000 Pro (4x): 40+ tokens per second of generation and 2,000+ prompt tokens per second.
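It is worth doing the memory arithmetic on that first result before buying hardware. A rough sketch, assuming IQ4_XS averages about 4.25 bits per weight and treating the card as 16 GiB. The attention-layer and head counts below are illustrative placeholders, not Qwen3.6's published architecture.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the quantized weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def kv_cache_gib(context_tokens: int, attn_layers: int, kv_heads: int,
                 head_dim: int, bytes_per_elem: int) -> float:
    """Approximate KV cache: one key and one value vector per KV head, per layer, per token."""
    total_bytes = 2 * attn_layers * kv_heads * head_dim * bytes_per_elem * context_tokens
    return total_bytes / 2**30


weights = weight_memory_gib(27, 4.25)   # ~13.36 GiB for 27B params at ~4.25 bits/weight
headroom = 16 - weights                 # ~2.64 GiB left for cache and activations

# Hypothetical full-attention layout, used only to show the scale of the problem.
kv_fp16 = kv_cache_gib(100_000, attn_layers=64, kv_heads=4, head_dim=128, bytes_per_elem=2)
kv_q8 = kv_cache_gib(100_000, attn_layers=64, kv_heads=4, head_dim=128, bytes_per_elem=1)
```

Under those placeholder numbers, even an 8-bit cache (about 6.1 GiB) would not fit in the roughly 2.6 GiB of headroom, so a 100k-context run on one 16GB card likely depends on a much smaller KV footprint (for example fewer full-attention layers or a more aggressively quantized cache) or on offloading part of the work to system RAM. Plug in the real values from the model card before trusting any estimate.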

The cost difference is significant. A local-first setup can cost as little as $47 per month, compared to $10,000 per month for an equivalent cloud-only stack. Cloud model pricing assumes you will accept vendor lock-in as a cost of doing business. Sovereign infrastructure reveals that lock-in was always a choice.
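Numbers like these swing heavily with usage volume, so it helps to model your own. A minimal sketch under simple assumptions: local cost is amortized hardware plus electricity, and cloud cost is a flat blended price per million tokens. Every input in the example is a hypothetical placeholder, not a quote from any vendor.

```python
from dataclasses import dataclass


@dataclass
class LocalSetup:
    hardware_cost: float    # up-front GPU/workstation spend, USD
    amortize_months: int    # months to spread the hardware cost over
    avg_power_watts: float  # average draw while serving
    hours_per_day: float    # hours per day the box is under load
    usd_per_kwh: float      # local electricity price


def local_monthly_cost(s: LocalSetup, days: int = 30) -> float:
    """Amortized hardware plus electricity for one month."""
    energy_kwh = s.avg_power_watts / 1000 * s.hours_per_day * days
    return s.hardware_cost / s.amortize_months + energy_kwh * s.usd_per_kwh


def cloud_monthly_cost(million_tokens: float, usd_per_million: float) -> float:
    """Usage-based API spend for one month at a flat blended token price."""
    return million_tokens * usd_per_million


# Hypothetical inputs for illustration only.
rig = LocalSetup(hardware_cost=2000, amortize_months=36, avg_power_watts=300,
                 hours_per_day=8, usd_per_kwh=0.15)
local = local_monthly_cost(rig)       # about $66.36
cloud = cloud_monthly_cost(500, 3.0)  # $1,500 for 500M tokens at $3 per million
```

The break-even point moves with volume: at low token counts the amortized hardware dominates, and at high volumes the per-token bill does. Staff time and hardware failures are left out here and belong in a fuller model.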

2. Cloud Models as Specialists, Not the Backbone

This is where most people get sovereign AI wrong. They think it means you can never touch a cloud API. That is not practical. The right architecture uses cloud models for specific tasks where local models genuinely struggle:

- Kimi K2.6: For deep research and complex reasoning.

- GLM-5.1: For structured analysis tasks.

- Cloud fallbacks: For niche capabilities or extreme edge cases.

The key is that these are supplements to your local stack, not the foundation. You should be able to survive losing any single provider without your workflow breaking. That is sovereignty.
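One way to make "survive losing any single provider" testable is to write the routing table down and check it. A minimal sketch; the task names and backend labels are hypothetical, and all local backends count as one provider because they share your hardware.

```python
from typing import Dict, List

# Hypothetical routing table: task type -> backends in order of preference.
ROUTES: Dict[str, List[str]] = {
    "chat": ["local:hermes", "cloud:fallback"],
    "pii_extraction": ["local:hermes"],
    "deep_research": ["cloud:kimi-k2.6", "local:qwen3.6-27b"],
    "structured_analysis": ["cloud:glm-5.1", "local:qwen3.6-27b"],
}


def provider_of(backend: str) -> str:
    """Map 'cloud:glm-5.1' to 'glm-5.1'; every 'local:*' backend maps to 'local'."""
    kind, _, name = backend.partition(":")
    return "local" if kind == "local" else name


def stranded_tasks(routes: Dict[str, List[str]], lost_provider: str) -> List[str]:
    """Tasks whose every backend belongs to the provider that just disappeared."""
    return [task for task, backends in routes.items()
            if all(provider_of(b) == lost_provider for b in backends)]


def cloud_providers(routes: Dict[str, List[str]]) -> List[str]:
    """All distinct cloud providers referenced anywhere in the table."""
    return sorted({provider_of(b) for backends in routes.values() for b in backends} - {"local"})


def survives_any_single_cloud_loss(routes: Dict[str, List[str]]) -> bool:
    """True if no single cloud provider outage leaves a task with nowhere to run."""
    return all(not stranded_tasks(routes, p) for p in cloud_providers(routes))
```

Here `pii_extraction` deliberately has only a local backend, which is why the check looks at cloud providers alone. Running it in CI whenever the routing table changes keeps the "supplements, not foundation" rule from quietly eroding.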

3. Multi-Agent Orchestration as the Default Architecture

The monolithic model approach is fading. Swarm architectures are gaining ground for complex tasks, and interest is climbing fast: "multi-agent orchestration" draws roughly 3,600 searches per month, up 340% year over year.

Moonshot AI's Kimi K2.6 demonstrated agent swarm scaling to 300 sub-agents and 4,000 coordinated steps. That is not theoretical. That is the benchmark of what is possible when you stop treating AI as a single giant model to query and start treating it as an orchestration problem.

The biological analogy is apt. Ant colonies solve complex problems without a central brain. Specialized models handle their domains, and orchestration handles the complexity. You do not need gigawatts of compute; you need smarter architecture.
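The orchestration pattern itself is simple to sketch: a coordinator fans subtasks out to specialists, caps how many run at once, and collects the results. This is a toy illustration using Python's `asyncio`, not Moonshot's swarm implementation; the `specialist` coroutine is a hypothetical stand-in where a real system would call a local or cloud model.

```python
import asyncio
from typing import List, Tuple


async def specialist(role: str, subtask: str, limiter: asyncio.Semaphore) -> str:
    """Hypothetical sub-agent; a real one would send the subtask to a model."""
    async with limiter:
        await asyncio.sleep(0.01)  # simulate model latency
        return f"[{role}] {subtask}: done"


async def orchestrate(plan: List[Tuple[str, str]], max_parallel: int = 4) -> List[str]:
    """Fan subtasks out to specialists, cap concurrency, and gather results in plan order."""
    limiter = asyncio.Semaphore(max_parallel)
    return await asyncio.gather(*(specialist(role, sub, limiter) for role, sub in plan))


plan = [("researcher", "collect sources"), ("coder", "draft script"), ("reviewer", "check output")]
results = asyncio.run(orchestrate(plan, max_parallel=2))
```

The semaphore is the piece that matters on local hardware: it keeps a swarm from launching more concurrent requests than your GPU can actually serve.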

4. Tool Gateway for API Redundancy

When a cloud provider's legal problems become your business continuity risk, you need escape hatches. A tool gateway with multiple cloud providers and automatic failover means no single provider can kill your workflow.
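At its core, automatic failover is an ordered list of providers and a loop. A minimal sketch; the provider names and the two stub functions are hypothetical, and a production gateway would add timeouts, retries with backoff, and narrower exception handling.

```python
from typing import Callable, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(Exception):
    """Raised with the (name, error) pairs when every provider in the chain fails."""


def call_with_failover(providers: Sequence[Provider], prompt: str) -> Tuple[str, str]:
    """Try providers in order and return (provider_name, output) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose for the sketch; narrow this in practice
            errors.append((name, exc))
    raise AllProvidersFailed(errors)


# Hypothetical stubs standing in for real API clients.
def primary_cloud(prompt: str) -> str:
    raise ConnectionError("primary provider unavailable")


def local_backup(prompt: str) -> str:
    return f"local answer to: {prompt}"


used, answer = call_with_failover([("primary", primary_cloud), ("local", local_backup)], "status?")
```

Returning the provider name alongside the output makes it easy to log how often you are running on the fallback, which is the early warning that a provider is becoming a liability.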

This is not just theoretical. Last week's Vercel breach, which came through a compromised third-party AI tool, is a concrete example. Your development platform is only as secure as its weakest AI integration. When you can route around failures, whether they are legal, technical, or financial, you own your continuity.

Bonus: The Defensibility Signal

Reddit researchers just published a paper on arXiv (2604.20972) introducing the Defensibility Index, a framework for evaluating AI decisions by whether they follow your rules, not by whether a human agrees with them. They validated it on 193,000+ Reddit moderation decisions, achieving 78.6% automation coverage with 64.9% risk reduction.

This is the audit infrastructure sovereign AI needs. Cloud providers evaluate by their own definition of quality. Self-hosted AI needs defensibility metrics: the ability to prove every decision follows your specific governance rules.
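The paper's Defensibility Index is its own framework, and what follows is not an implementation of it. As a much simpler sketch of the underlying idea, assume every automated decision must cite a rule, and a decision counts as defensible only when the cited rule exists, authorizes that action, and actually applies to the input. The rule, threshold, and field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Rule:
    rule_id: str
    action: str                      # the action this rule authorizes
    applies: Callable[[dict], bool]  # does the rule's condition hold for this input?


@dataclass(frozen=True)
class Decision:
    item: dict
    action: str
    cited_rule: Optional[str]


def is_defensible(decision: Decision, rules: Dict[str, Rule]) -> bool:
    """Defensible = cites a known rule, takes that rule's action, and the rule applies."""
    rule = rules.get(decision.cited_rule) if decision.cited_rule else None
    return (rule is not None
            and rule.action == decision.action
            and rule.applies(decision.item))


def audit(decisions: List[Decision], rules: Dict[str, Rule]) -> Tuple[float, List[Decision]]:
    """Return the share of defensible decisions and the ones that need human review."""
    flagged = [d for d in decisions if not is_defensible(d, rules)]
    share = 1 - len(flagged) / len(decisions) if decisions else 1.0
    return share, flagged


# Hypothetical rule: remove posts from accounts below a minimum karma threshold.
RULES = {"min_karma": Rule("min_karma", "remove", lambda item: item["karma"] < 10)}

decisions = [
    Decision({"karma": 3}, "remove", "min_karma"),    # rule applies: defensible
    Decision({"karma": 500}, "remove", "min_karma"),  # cites a rule that does not apply
    Decision({"karma": 3}, "remove", None),           # cites nothing
]
share, needs_review = audit(decisions, RULES)
```

Because each decision carries its own justification, an auditor can replay it against your rulebook instead of relying on anyone's opinion of the outcome.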

The RAM Shortage Timeline: Why You Should Build Now

Industry forecasts project RAM shortages lasting until 2030 due to AI demand. Anthropic's AWS deal locks up 5 gigawatts of Trainium capacity. Nuclear reactor startups are struggling to retain leadership, and data center projects are facing community resistance across the US.

The scarcity is not accidental; it is a control mechanism. When hardware is scarce, dependency is created. When dependency exists, pricing power follows. The developers who will survive the next three years are the ones building efficient, sovereign infrastructure now, before the scarcity premium kicks in.

Quantized models on consumer hardware do not need gigawatts. A properly configured local stack on a workstation can match cloud APIs on many real-world tasks. The specialist work can hit cloud endpoints with proper failover. That is the stack.

What the Public Backlash Means for Your Stack

The New Republic piece captures something the industry has been ignoring: The public has already decided. Over 60% of both Republicans and Democrats support government regulation of AI. Social media hostility toward AI executives is intensifying.

When the regulatory hammer comes down, the developers who own their infrastructure will be insulated. This is not because they have better insurance, but because there is no third-party provider holding their data to subpoena. Your data does not leave your hardware. Your chat logs are not sitting on a provider's server waiting for a prosecutor's request.

The Florida criminal probe isn't just a warning. It is the opening bell. The question isn't whether regulation will reshape the AI landscape. It is whether your stack can survive the transition.

Building Your Sovereignty Stack: A Practical Timeline

Week 1: Audit

Audit every AI dependency in your production stack. Document what data you send, what you get back, and where it is stored.
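For Python codebases, part of this audit can be automated by scanning for imports of hosted-AI SDKs. A minimal sketch using the standard `ast` module; the watch list is an example you should extend, and the scan only catches direct imports, not raw HTTP calls or SDKs pulled in through other libraries.

```python
import ast
from pathlib import Path
from typing import Dict, List, Tuple

# Example watch list of packages for hosted AI APIs; extend it for your stack.
AI_SDK_MODULES = {"openai", "anthropic", "google.generativeai", "cohere", "mistralai"}


def find_ai_imports(source: str, filename: str = "<source>") -> List[Tuple[str, int]]:
    """Return (module, line_number) for every import that matches the watch list."""
    hits = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        for name in names:
            if any(name == m or name.startswith(m + ".") for m in AI_SDK_MODULES):
                hits.append((name, node.lineno))
    return sorted(hits, key=lambda hit: hit[1])


def audit_tree(root: str) -> Dict[str, List[Tuple[str, int]]]:
    """Scan every .py file under root and report the files that import an AI SDK."""
    report = {}
    for path in Path(root).rglob("*.py"):
        hits = find_ai_imports(path.read_text(encoding="utf-8"), str(path))
        if hits:
            report[str(path)] = hits
    return report
```

The scan tells you where to look, not what is flowing. Pair each hit with a note on what data that call sends and where the response ends up.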

Week 2: Identify Local Workflows

Identify the workflows that should be local. Start with anything involving PII, legal documents, medical data, or sensitive business information.
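A crude first pass at this triage can be automated: flag inputs that look like personal data and pin them to local inference. A minimal sketch with deliberately simple, US-centric regular expressions; these patterns are illustrative assumptions and will miss plenty, so treat them as a floor, not a compliance tool.

```python
import re
from typing import Set

# Deliberately simple patterns for illustration; real PII detection needs much more.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def pii_hits(text: str) -> Set[str]:
    """Labels of every pattern that matches somewhere in the text."""
    return {label for label, pattern in PII_PATTERNS.items() if pattern.search(text)}


def choose_route(text: str) -> str:
    """Keep anything that looks like PII on local hardware; the rest may go to a cloud specialist."""
    return "local" if pii_hits(text) else "cloud_ok"
```

A false positive here only costs you a local call instead of a cloud one, which is the right direction to err for this kind of routing.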

Week 3: Set Up Local Orchestration

Set up local orchestration using Ollama or OpenClaw with Hermes Agent. Run your identified workflows and measure the latency and quality delta against your cloud provider.

- A 16GB GPU can run Qwen3.6-27B at IQ4_XS with 100k context.

- An RTX 5090 with vLLM 0.19 and NVFP4 can approach cloud API speeds, at 80 tokens per second in this week's reports.
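To measure the delta, run the same prompt set through both backends and compare median latency and how often the local output is acceptable against the cloud output. A minimal sketch; the backends and the `judge` function in the example are hypothetical stubs, and for real workloads the judge should be a task-specific check (exact match for extraction, a rubric or human reviewer for open-ended text).

```python
import statistics
import time
from typing import Callable, Dict, List, Tuple

Backend = Callable[[str], str]


def benchmark(backend: Backend, prompts: List[str]) -> Tuple[List[str], float]:
    """Run each prompt once; return the outputs and the median latency in seconds."""
    outputs, latencies = [], []
    for prompt in prompts:
        start = time.perf_counter()
        outputs.append(backend(prompt))
        latencies.append(time.perf_counter() - start)
    return outputs, statistics.median(latencies)


def compare(local: Backend, cloud: Backend, prompts: List[str],
            judge: Callable[[str, str], bool]) -> Dict[str, float]:
    """Median latency per backend plus the share of prompts where judge accepts the local output."""
    local_out, local_s = benchmark(local, prompts)
    cloud_out, cloud_s = benchmark(cloud, prompts)
    accepted = sum(judge(loc, ref) for loc, ref in zip(local_out, cloud_out))
    return {"local_median_s": local_s, "cloud_median_s": cloud_s,
            "acceptance": accepted / len(prompts)}


def normalize(text: str) -> str:
    """Case- and whitespace-insensitive form used by the example judge."""
    return " ".join(text.lower().split())


# Hypothetical stubs; replace with real clients for your identified workflows.
report = compare(
    local=lambda p: p.upper(),
    cloud=lambda p: p.upper() if p != "d" else "something else",
    prompts=["a", "b", "c", "d"],
    judge=lambda loc, ref: normalize(loc) == normalize(ref),
)
```

Medians keep one slow outlier from skewing the comparison; if the acceptance rate is high and the latency gap is small, that workflow is a strong candidate to move local.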

Week 4: Implement Orchestration and Failover

Implement orchestration with n8n or similar tools. Build the failover patterns. You need the ability to walk away from any provider without rebuilding your stack.
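Beyond plain failover, a common pattern here is a circuit breaker: after repeated failures, stop sending traffic to a provider for a cooldown period instead of paying its timeout on every request. A minimal sketch with hypothetical thresholds; treat it as the logic to reproduce in whatever orchestration tool you use, not as any tool's API.

```python
import time
from typing import Callable, Dict, Optional, Sequence, Tuple


class CircuitBreaker:
    """Stops traffic to a provider after repeated failures, then allows trial calls after a cooldown."""

    def __init__(self, max_failures: int = 3, cooldown_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock  # injectable so the behavior can be tested without sleeping
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        """Closed: allow. Open: allow again only once the cooldown has elapsed (half-open)."""
        if self.opened_at is None:
            return True
        return self.clock() - self.opened_at >= self.cooldown_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()  # (re)open; a failed half-open trial restarts the cooldown


def route(providers: Sequence[Tuple[str, Callable[[str], str]]],
          breakers: Dict[str, CircuitBreaker], prompt: str) -> Tuple[str, str]:
    """Use the first provider whose breaker allows traffic and whose call succeeds."""
    for name, call in providers:
        breaker = breakers[name]
        if not breaker.allow():
            continue  # circuit open: skip without waiting on a known-bad provider
        try:
            result = call(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("no provider available")
```

Injecting the clock is a design choice for testability: you can simulate a cooldown expiring without sleeping for a minute.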

The Bottom Line

The public has already turned against the AI industry. The builders at r/LocalLLaMA are already enforcing quality standards. Nous Research is doing an AMA because the community wants to hear from the people building the open-source alternative. And the industry is spending $100 billion to centralize control.

The choice is stark. Accept the liability of centralized infrastructure, or build the stack that insulates you from it. The AMA on April 29 is a signal. The sovereign AI community is growing, organizing, and producing results.

The public hates what the industry is selling. But they will respect what you build yourself.


Building sovereign AI infrastructure is just the start. I'm documenting every mistake, fix, and lesson learned as I build PhantomByte.
