I spent five years watching the AI industry build an entire civilization on top of a technical limitation. Vector databases. Chunking strategies. Hybrid search. RAG pipelines. Agentic decomposition. The entire retrieval-augmented generation stack exists for exactly one reason: transformer attention scales quadratically, so models can't hold enough context.

A Miami startup called Subquadratic just deleted the limitation.

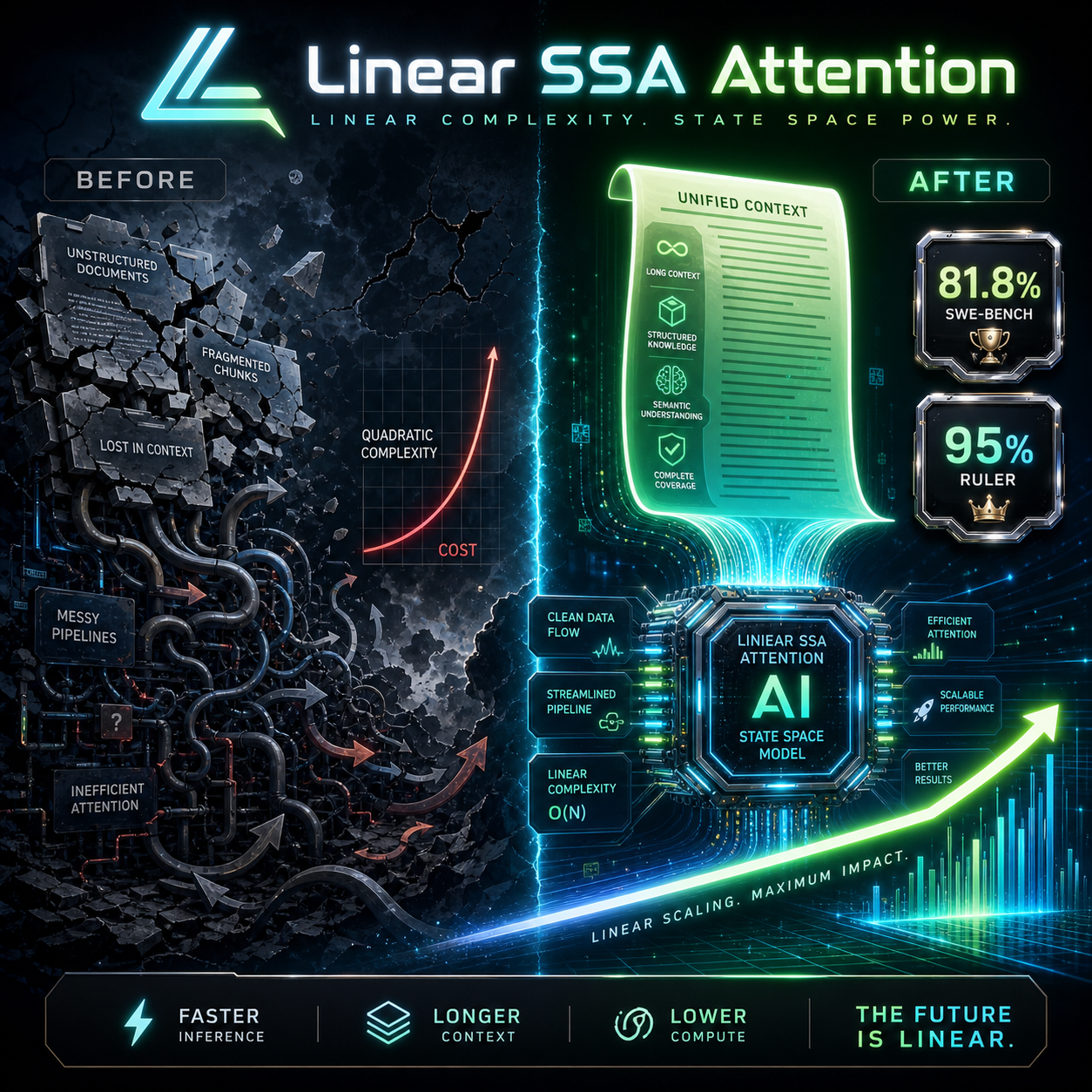

Eleven PhD researchers from Meta, Google, Oxford, Cambridge, and BYU rewrote the attention mechanism from scratch. Their model, SubQ, handles 12 million tokens in a single context window using something called Subquadratic Selective Attention (SSA). It scales linearly instead of quadratically. At 1 million tokens, it runs 52 times faster than dense attention.

This is not an incremental benchmark win. This is the architectural equivalent of switching from a combustion engine to an electric motor while everyone else is optimizing fuel injectors.

The benchmarks are not subtle. SubQ scores 81.8% on SWE-Bench Verified, edging out Claude Opus 4.6 at 80.8% and Gemini 3.1 Pro at 80.6%. On RULER at 128K tokens, a brutal long-context accuracy test across 13 dimensions, it hits 95.0%. It delivers 150 tokens per second at one-fifth the cost of comparable frontier models.

The company calls it the "first model built for long-context tasks." That is underselling it. This is the first model that makes you question why you built all that surrounding infrastructure in the first place.

Let me explain what actually dies here.

The RAG Industrial Complex

The retrieval-augmented generation market is worth billions. Pinecone, Weaviate, Chroma, Qdrant, Milvus. LangChain and LlamaIndex built their entire value proposition on connecting models to external knowledge because models could not hold enough context internally. Chunking strategies became an art form. How do you split a 500-page legal document so the model can find the relevant paragraph without losing surrounding context? Entire careers were built on answering that question.

RAG was never a feature. It was a workaround. A very expensive, very complex workaround for the fact that transformer attention scales quadratically.

If SSA delivers at scale, every assumption that stack is built on evaporates. You do not need to chunk documents when the model can hold the entire document. You do not need vector similarity search when the model can process all candidate passages directly. You do not need to orchestrate retrieval-and-generate loops when generation alone covers the context.

The industry spent 2017 through 2026 building scaffolding around a limitation. Subquadratic removed the limitation. The scaffolding does not disappear overnight, but its economic reason for existing just got hollowed out.

And the cost curves make this inevitable. SubQ runs at one-fifth the cost of leading LLMs. A RAG pipeline is not free. You pay for embeddings, you pay for vector storage, you pay for retrieval compute, you pay for the generation model on top. If the generation model alone handles the entire context at 20% of the cost, the bundled RAG solution loses on price, latency, and complexity simultaneously.

That is a death sentence in infrastructure economics.

The "Way Smaller" Detail Nobody Is Talking About

Subquadratic's CTO told The New Stack their model is "way smaller" than what the big labs run. They are not claiming to have built a bigger model than GPT-5.5 or Claude Opus 4.7. They are claiming to have built a smarter architecture.

This matters because it inverts the industry's core assumption: that better AI requires more compute. The trillion-dollar data center buildout, the 9-gigawatt hyperscale projects, the nuclear reactor deals, the entire infrastructure arms race. All of it assumes that frontier performance scales with parameter count, which scales with compute, which scales with capital.

Subquadratic just demonstrated that architectural efficiency can leapfrog scale. An 11-person team in a Florida office park out-engineered the $100-billion labs on the metric that actually matters: useful context per dollar.

This is the same pattern that killed the mainframe. The industry spent decades assuming bigger machines were the only path forward, until smaller, smarter architectures made them irrelevant. History does not repeat, but it does route around expensive assumptions.

What 50M Tokens Actually Means

The company says a 50-million-token window is coming soon. Here is what fits in 50 million tokens:

- Every line of code in the Linux kernel, plus the entire Git history, plus every mailing list discussion about every patch. In one prompt.

- Every medical record, lab result, and clinical note for a patient with a 20-year treatment history. In one prompt.

- A law firm's entire active case load, every contract, every piece of correspondence. In one prompt.

- Every commit, PR, issue, and Slack thread your engineering team produced in the last five years. In one prompt.

These are not hypotheticals. These are the use cases that enterprise AI has been circling for years but could never reach because the context windows were too small and the workarounds were too fragile.

At 50M tokens with linear attention scaling, entire categories of enterprise software become thin wrappers around a single model call. The legal document review industry. The codebase analysis industry. The medical records summarization industry. All of them are currently built on chunk-and-retrieve pipelines that exist because of a limitation that Subquadratic claims to have solved.

The Skeptical Case

I should be clear about what we do not know yet. SubQ's MRCR v2 score at 1M tokens is 65.9%. That is the hardest long-context retrieval benchmark in existence, and 65.9% is good but not dominant. GPT-5.5 scores 74.0% on the same test. Claude Opus 4.6 scores 78.3%.

The architecture might scale linearly, but retrieval accuracy at extreme contexts is its own problem. Having 12M tokens available does not mean the model finds the right information in those 12M tokens every time. Attention scaling is necessary for long-context reasoning, but it is not sufficient.

The technical report is not public yet. The benchmarks on their website are third-party validated, which is a good sign, but a startup's marketing page is not the same as peer-reviewed architecture research. The industry has seen this movie before: impressive website, bold claims, paper "coming soon." Most of those movies end with the paper never arriving.

I am not betting against 11 PhDs from Meta, Google, Oxford, Cambridge, and BYU who left their jobs to build this thing in Miami. But I am not deploying production workloads on their API tomorrow either. The right stance is paying attention, not placing bets.

The Realignment

Here is what I actually think happens. Linear attention architectures do not kill RAG overnight. The vector database market has too much installed capital, too many enterprise contracts, and too much organizational inertia to evaporate. What changes is the strategic calculus for new projects.

If you are building a new AI application in late 2026 or 2027, and SubQ or a competitor offers 12M-plus token windows at linear cost, do you build a RAG pipeline? Or do you just send the entire context to the model?

The answer depends on your scale, but the fact that it is even a question is the disruption. RAG goes from "default architecture for anything with documents" to "optional optimization for specific cost tiers." That is a massive contraction in addressable market.

The bigger story is what this does to the infrastructure race. Every hyperscaler is betting on the assumption that bigger models need bigger data centers. Microsoft just took operational control of OpenAI's Stargate project, a trillion-dollar centralized play. Nvidia committed $40 billion to equity deals in the first months of 2026 alone.

Architectural efficiency breakthroughs are kryptonite to centralized infrastructure bets. If a team of 11 can leapfrog frontier performance through smarter attention math, the trillion-dollar data center thesis has a crack in it. Not a fatal one yet. But a crack.

The PhantomByte take: the context window is the new frontier, and Subquadratic just claimed it. RAG, vector databases, and the entire retrieval stack are not obsolete today, but their expiration date just got stamped. Build accordingly.

Get More Articles Like This

Linear attention architectures are changing the infrastructure calculus. I'm tracking every shift as the context window arms race accelerates.

Subscribe to receive updates when we publish new content. No spam, just real analysis from the trenches.