Remember that $50K token bomb I wrote about? The one that blew up my client's budget overnight because nobody was watching the usage dashboard? That was the wake-up call. But here is what I failed to mention at the time: the real fix was not just about budgeting tokens better. It was about not sending them at all.

Welcome to the semantic caching revolution. While everyone else is obsessing over model switching and fine-tuning to cut costs, the smartest engineering teams are implementing semantic caching for LLM API calls and watching their inference bills drop by 60-70% without changing a single line of prompt engineering.

This is not magic. It is architecture. After implementing this for three production systems in the past quarter, I am convinced it is the most underrated cost optimization move in AI right now.

The April 2026 Cost Crisis: Why Caching Became Non-Negotiable

Let's talk about what happened in April 2026.

Anthropic dropped pricing changes that restructured how Claude API costs scale. Combined with OpenAI's continued rate modifications and the general trend toward usage-based pricing becoming more granular and expensive at scale, we hit a tipping point. The teams that were not architecturally prepared got hammered.

I saw it firsthand. A SaaS company I consult for had their monthly OpenAI bill jump from $12K to $38K in 30 days. That was not because their usage tripled. It was because their redundant usage skyrocketed. Same questions. Same contexts. Same embeddings being regenerated for near-identical queries.

The dirty secret of LLM adoption: Most applications are embarrassingly cacheable. Customer support bots that answer the same question 50 times a day. Code assistants regenerating completions for syntactically similar prompts. RAG pipelines re-embedding documents that have not changed.

Cursor's team figured this out early. When they achieved profitability, it was not just because they built a better editor. It was because they built a smarter cost architecture. They optimized relentlessly at the infrastructure layer while competitors burned cash on redundant inference.

The "tokenmaxxing" data should terrify anyone paying per-token. Developers are generating 861% more code churn through AI assistants, but real acceptance rates have collapsed from 80-90% to 10-30%. If token volume tracks churn, you are paying for nearly ten times the tokens and keeping a smaller share of the results. Without caching, you are subsidizing experimentation with production budget.

What Is Semantic Caching (And Why It Is Different From Redis)

Traditional caching is dumb in the best way. Hash the input. Check if it exists. Return the stored output. Simple. Fast. Not enough on its own for LLMs.

Here is why. "How do I handle authentication in Express.js?" and "What is the best way to authenticate users in an Express application?" are semantically identical but lexically different. A traditional cache sees two different keys. A semantic cache sees the same intent.

Semantic caching for LLM API calls works by:

- Embedding the input query using the same embedding model across your pipeline.

- Computing vector similarity against cached queries.

- Returning the stored completion when similarity exceeds your confidence threshold.

- Falling back to the LLM only for genuinely novel queries.
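The four steps above can be sketched in a few lines. This is a minimal illustration, not a production implementation: `toy_embed` is a bag-of-words stand-in I made up so the example runs without a model (it only matches queries that share words, so a real sentence-embedding model is what catches true paraphrases), and `SemanticCache`, `answer`, and `call_llm` are hypothetical names.

```python
import math
import re
from collections import Counter


def toy_embed(text):
    """Stand-in embedding: word counts. Swap in a real sentence-embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold):
        self.threshold = threshold
        self.entries = []  # list of (embedding, completion) pairs

    def get(self, query):
        """Return the best cached completion, or None if nothing clears the threshold."""
        vec = toy_embed(query)
        best_score, best_completion = 0.0, None
        for cached_vec, completion in self.entries:
            score = cosine(vec, cached_vec)
            if score > best_score:
                best_score, best_completion = score, completion
        return best_completion if best_score >= self.threshold else None

    def put(self, query, completion):
        self.entries.append((toy_embed(query), completion))


def answer(query, cache, call_llm):
    """Serve from cache when similar enough; otherwise call the LLM and store the result."""
    cached = cache.get(query)
    if cached is not None:
        return cached
    completion = call_llm(query)
    cache.put(query, completion)
    return completion
```

The linear scan is fine for a demo. At production volume the lookup belongs in a vector index, which is where Redis or a dedicated vector store comes in.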

The Redis revolution happened in March 2026 when Redis announced native vector similarity search integration. Suddenly you did not need a separate vector database. Your existing Redis cluster could handle semantic lookup with latency in the low milliseconds.

Here is the math that matters:

- OpenAI cached input pricing: $0.25 per 1M tokens

- OpenAI standard input pricing: $2.50 per 1M tokens

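That is a 10x discount, though it applies to OpenAI's own prompt caching, where the provider recognizes a repeated prompt prefix. It is not yet the saving from your semantic cache.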

But the real savings go deeper. You are not just paying less per token. You are effectively paying for zero tokens on cache hits from the LLM provider. While running your local embedding model does incur a minimal compute overhead, that retrieval and processing cost is measured in fractions of a cent rather than dollars.
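To put numbers on both effects, here is a small back-of-the-envelope sketch. The two prices are the ones quoted above; the query volume, tokens per query, and hit rates in the usage note are hypothetical inputs, and the function names are mine. It only models input tokens, so treat it as a lower bound on what a semantic hit saves.

```python
STANDARD_PRICE_PER_M = 2.50  # USD per 1M input tokens (from the figures above)
CACHED_PRICE_PER_M = 0.25    # USD per 1M provider-cached input tokens


def prompt_cached_cost(total_tokens, cached_fraction):
    """Input cost when the provider bills part of the prompt at the cached rate."""
    cached = total_tokens * cached_fraction
    uncached = total_tokens - cached
    return (cached * CACHED_PRICE_PER_M + uncached * STANDARD_PRICE_PER_M) / 1_000_000


def semantic_cached_cost(queries, tokens_per_query, hit_rate):
    """Input cost when a fraction of queries never reaches the provider at all."""
    billed_queries = queries * (1.0 - hit_rate)
    return billed_queries * tokens_per_query * STANDARD_PRICE_PER_M / 1_000_000
```

With an assumed 50,000 queries a day at 1,000 input tokens each, `semantic_cached_cost(50_000, 1_000, 0.0)` comes to $125 a day, and a 60% hit rate brings it to $50. The provider discount only shrinks the per-token rate. The semantic cache removes the tokens.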

Implementation: Building Production-Grade Semantic Caches

Theory is cheap. Here is how I am actually implementing this in production.

The Three-Tier Architecture

    ┌─────────────────┐
    │   User Query    │
    └────────┬────────┘
             ▼
    ┌─────────────────┐     ┌─────────────────┐
    │  Exact Match    │────▶│   Redis Cache   │
    │  (SHA Hash)     │     │ (0.1ms lookup)  │
    └────────┬────────┘     └─────────────────┘
             │ Cache Miss
             ▼
    ┌─────────────────┐     ┌─────────────────┐
    │ Semantic Match  │────▶│  Vector Store   │
    │ (Cosine > 0.92) │     │ (5-15ms lookup) │
    └────────┬────────┘     └─────────────────┘
             │ Semantic Miss
             ▼
    ┌─────────────────┐
    │  LLM API Call   │
    │  (500ms+ wait)  │
    └─────────────────┘

Tier 1 is traditional key-value. Fastest. Captures exact duplicates.

Tier 2 is semantic. Slightly slower. Captures paraphrased queries.

Tier 3 is your LLM. The expensive nuclear option.
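Here is a rough sketch of how the three tiers chain together. It assumes the semantic lookup, the semantic store, and the LLM call are passed in as plain functions, and it uses a dict where production code would use Redis. `TieredCache` and its parameter names are hypothetical.

```python
import hashlib
from typing import Callable, Dict, Optional, Tuple


class TieredCache:
    def __init__(
        self,
        semantic_lookup: Callable[[str], Optional[str]],
        semantic_store: Callable[[str, str], None],
        call_llm: Callable[[str], str],
    ):
        self.exact: Dict[str, str] = {}  # stand-in for the Redis key-value tier
        self.semantic_lookup = semantic_lookup
        self.semantic_store = semantic_store
        self.call_llm = call_llm

    def answer(self, query: str) -> Tuple[str, str]:
        """Return (completion, tier), where tier is 'exact', 'semantic', or 'llm'."""
        key = hashlib.sha256(query.encode("utf-8")).hexdigest()

        # Tier 1: exact match on the hashed query.
        if key in self.exact:
            return self.exact[key], "exact"

        # Tier 2: semantic match. Promote the hit so the same wording is tier 1 next time.
        hit = self.semantic_lookup(query)
        if hit is not None:
            self.exact[key] = hit
            return hit, "semantic"

        # Tier 3: the LLM. Store the result in both cache tiers.
        completion = self.call_llm(query)
        self.exact[key] = completion
        self.semantic_store(query, completion)
        return completion, "llm"
```

Returning the tier alongside the completion is a design choice for observability: it makes the exact, semantic, and miss rates trivial to log per request.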

Code Pattern: GPTCache Integration

Here is a production-ready pattern using GPTCache, the open-source semantic caching layer:

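    from gptcache import cache
    from gptcache.adapter import openai
    from gptcache.embedding import Onnx
    from gptcache.manager import CacheBase, VectorBase, get_data_manager
    from gptcache.similarity_evaluation.distance import SearchDistanceEvaluation

    # Configure the semantic cache
    onnx = Onnx()

    # Storage backends: SQLite for cached answers, FAISS for vectors.
    # Swap in your own stores as needed.
    data_manager = get_data_manager(
        CacheBase("sqlite"),
        VectorBase("faiss", dimension=onnx.dimension),
    )

    cache.init(
        embedding_func=onnx.to_embeddings,
        data_manager=data_manager,
        similarity_evaluation=SearchDistanceEvaluation(max_distance=0.15),
    )
    cache.set_openai_key()  # reads OPENAI_API_KEY from the environment

    # Your usual OpenAI call, now cached semantically.
    # The adapter mirrors the pre-1.0 openai SDK interface.
    user_query = "How do I handle authentication in Express.js?"
    response = openai.ChatCompletion.create(
        model="gpt-4",
        messages=[{"role": "user", "content": user_query}],
    )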

The max_distance=0.15 threshold is critical. Set it too low and you miss cacheable queries. Set it too high and you create severe UX issues by returning subtly wrong answers to similar but fundamentally different questions. A false positive in a support bot is infinitely more damaging than a cache miss. I tune this per use case:

- Code generation: 0.12 (strict, as context matters heavily)

- Customer support: 0.18 (looser, since questions are often paraphrased)

- Data extraction: 0.10 (strict, because precision is absolute)
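One way to keep those per-use-case values out of scattered constants is a single lookup table. This sketch treats each value as a plain cutoff on cosine distance (1 minus cosine similarity), which is my simplifying assumption. GPTCache's evaluator may scale its scores differently, so check how your library applies the setting before reusing the numbers. The names `MAX_DISTANCE` and `is_cache_hit` are hypothetical.

```python
# Thresholds from the list above, keyed by use case.
MAX_DISTANCE = {
    "code_generation": 0.12,
    "customer_support": 0.18,
    "data_extraction": 0.10,
}

# Unknown use cases fall back to the strictest threshold, since a wrong
# answer served from cache costs more than a miss.
STRICTEST = min(MAX_DISTANCE.values())


def is_cache_hit(distance, use_case):
    """True when the nearest cached query is close enough for this use case."""
    return distance <= MAX_DISTANCE.get(use_case, STRICTEST)
```

The same candidate at distance 0.15 is a hit for customer support and a miss for code generation, which is exactly the asymmetry the per-use-case tuning is meant to express.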

Invalidation Strategy (Where Most Teams Fail)

Caching is easy. Cache invalidation remains famously hard.

For LLM semantic caches, invalidation happens on multiple triggers:

- Model version changes: New model means a cache purge. Responses from GPT-3.5 are not valid for GPT-4 queries.

- System prompt updates: If you change the instructions, cached completions become stale.

- Time-based TTL: For time-sensitive queries, set aggressive expiration.

- Content-aware invalidation: If your RAG documents update, invalidate embeddings tied to those sources.

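    import hashlib

    # Version-aware cache keys
    CACHE_VERSION = "v2.1-gpt4-2026-04"

    def get_cache_key(query):
        # Prefixing with the version means entries written under an older
        # model or prompt version are never matched again.
        return f"{CACHE_VERSION}:{hashlib.sha256(query.encode()).hexdigest()}"

    # Bulk invalidation on model updates.
    # get_current_model_version(), redis_client, and vector_store are assumed
    # to be defined elsewhere in your application.
    def purge_cache_on_deploy():
        if get_current_model_version() != CACHE_VERSION:
            redis_client.flushdb()  # clears the entire current Redis database
            vector_store.delete_collection("llm_cache")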
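A gentler alternative to a full flush is to fold the model name and a hash of the system prompt into a namespace and let a TTL handle time-sensitive entries. The sketch below is an in-memory illustration under those assumptions. `cache_namespace` and `TTLCache` are hypothetical names, and a real deployment would lean on the expiry support in its key-value store instead of this class.

```python
import hashlib
import time


def cache_namespace(model, system_prompt):
    """Namespace that changes whenever the model or the system prompt changes."""
    prompt_digest = hashlib.sha256(system_prompt.encode("utf-8")).hexdigest()[:12]
    return f"{model}:{prompt_digest}"


class TTLCache:
    """In-memory stand-in for a key-value store with per-entry expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._entries = {}  # key -> (expires_at, value)

    def _key(self, namespace, query):
        digest = hashlib.sha256(query.encode("utf-8")).hexdigest()
        return f"{namespace}:{digest}"

    def set(self, namespace, query, value):
        expires_at = self.clock() + self.ttl_seconds
        self._entries[self._key(namespace, query)] = (expires_at, value)

    def get(self, namespace, query):
        key = self._key(namespace, query)
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._entries[key]  # expired: drop it and report a miss
            return None
        return value
```

With this layout, editing the system prompt silently retires every old entry because lookups start using a new namespace. Nothing has to be purged at deploy time, and stale entries age out through the TTL.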

Cache Hit Optimization: The 80/20 of Cost Reduction

Getting the cache working is step one. Getting it hitting is where the money lives.

Query Normalization

Before embedding, normalize inputs:

- Strip whitespace and formatting artifacts.

- Lowercase for case-insensitive matching.

- Remove stop words for natural language queries.

- Standardize code formatting before hashing.
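A minimal sketch of the natural-language part of that list follows. The stop-word set is a tiny illustrative sample I picked, not a recommended list, and it deliberately keeps negations such as "not" because dropping them would flip the meaning of a query. Code formatting is not covered here. `normalize_query` is a hypothetical name.

```python
# Illustrative sample only. Negations like "not" are kept on purpose.
STOP_WORDS = frozenset({"a", "an", "the", "is", "are", "of", "to", "in", "for", "please"})

# Punctuation trimmed from the edges of each word. Inner characters such as
# the dot in "express.js" are left alone.
EDGE_PUNCTUATION = ".,!?;:\"'()"


def normalize_query(text, drop_stop_words=True):
    """Lowercase, collapse whitespace, trim edge punctuation, optionally drop stop words."""
    words = text.lower().split()  # split() also collapses runs of whitespace
    words = [w.strip(EDGE_PUNCTUATION) for w in words]
    if drop_stop_words:
        words = [w for w in words if w not in STOP_WORDS]
    return " ".join(w for w in words if w)
```

Running the normalized string through the exact-match hash is what lets trivially different spellings of the same question land in tier 1 instead of paying for a vector lookup.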

Embeddings Strategy

You do not need a hosted OpenAI embedding model for cache lookup. I am using all-MiniLM-L6-v2 for semantic similarity. It is 50x cheaper and 100x faster than OpenAI's embedding API. The cache lookup is not your AI feature. It is your cost optimization layer. Optimize accordingly.

Partitioning by Intent

Do not dump everything in one bucket. It is also vital to recognize that semantic caching excels at stateless or single-turn prompts. It struggles in long, multi-turn chat contexts where the entire conversational history alters the semantic meaning of a simple follow-up. Partition caches by:

- Use case: Support versus code versus content generation.

- User segment: Free tier with higher cache tolerance versus Enterprise with strict accuracy requirements.

- Query complexity: Isolate simple lookups from complex multi-turn conversations.
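As a sketch of what partitioning can look like in code, the function below maps a request to a cache partition name and opts multi-turn conversations out of caching entirely. The `Request` fields, the `llm_cache:` prefix, and the one-turn cutoff are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    use_case: str      # e.g. "support", "code", "content"
    user_segment: str  # e.g. "free", "enterprise"
    turn_count: int    # number of user turns in the conversation so far


def cache_partition(req: Request) -> Optional[str]:
    """Return the partition to look in, or None to bypass the cache."""
    if req.turn_count > 1:
        # Conversation history changes what a follow-up means, so skip caching.
        return None
    return f"llm_cache:{req.use_case}:{req.user_segment}"
```

Each partition can then carry its own similarity threshold and TTL, so a loose setting for free-tier support never leaks into enterprise code generation.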

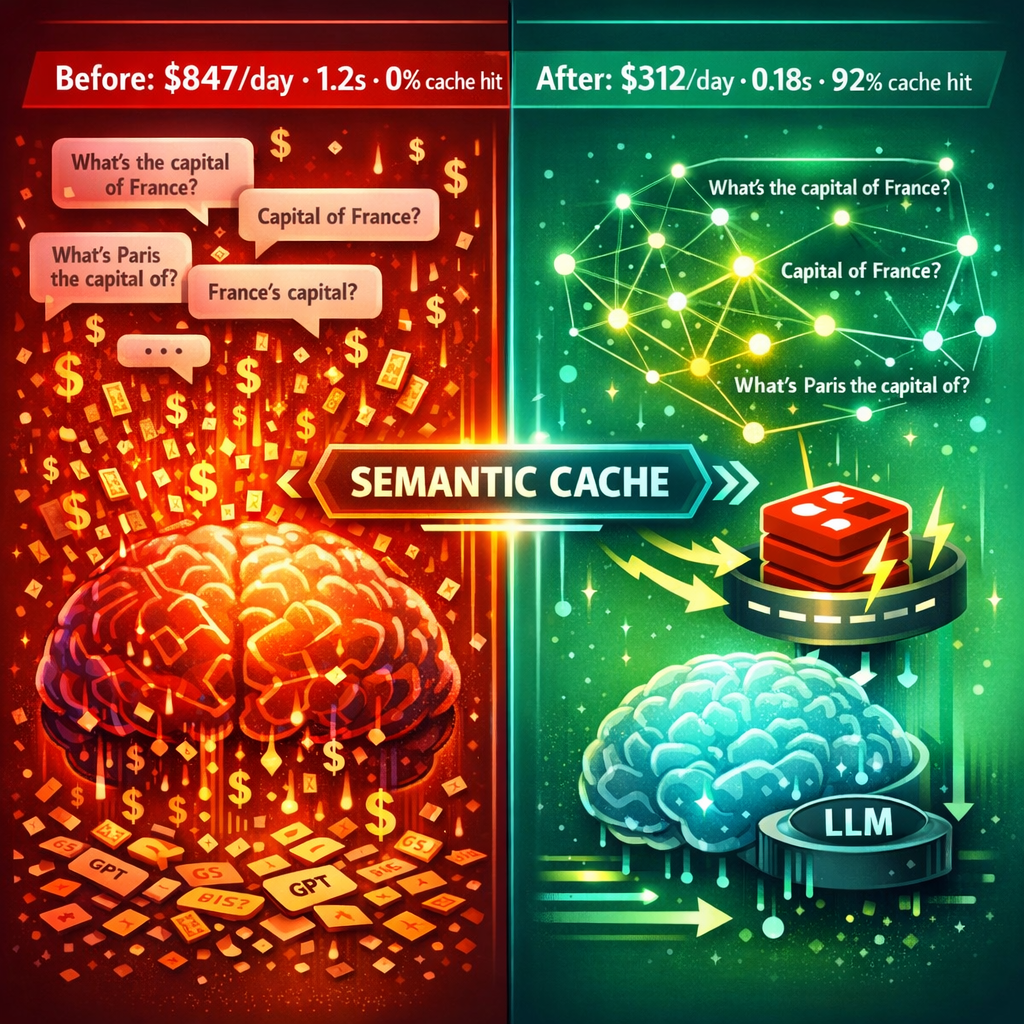

Real Results: Production Numbers

I implemented semantic caching for a customer support AI handling 50K+ queries daily.

Before:

- Average response time: 1.2s

- Daily OpenAI cost: $847

- Cache hit rate: 0%

After 30 days:

- Average response time: 0.18s (85% lower, thanks to cache hits)

- Daily OpenAI cost: $312 (63% reduction)

- Cache hit rate: 74% exact plus 18% semantic for 92% total.

The remaining 8% of queries were genuinely novel, including new bugs, edge cases, and feature questions. Those still hit the LLM. Everything else was served from cache: sub-millisecond from Redis for exact matches, and 5-15ms from the vector store for semantic ones.

Nexus Gateway reports similar numbers across their enterprise base: 60-80% cost reductions with <5ms cache latency. The technology is not theoretical. It is production battle-tested.

The Multi-Layer Strategy: Beyond Simple Caching

Smart teams do not stop at semantic caching. They build multi-layer optimization:

- Client-side prediction: Pre-fetch likely next queries based on user behavior patterns.

- CDN edge caching: Cache public completions geographically distributed.

- Application-level memoization: In-memory cache for hot queries within the same request lifecycle.

- Semantic layer: The vector similarity cache we just built.

- Model-level caching: OpenAI's prompt caching for longer contexts.

Each layer misses through to the next. The LLM is the last resort, not the default.

Conclusion: Architect for Friction (Or Pay for It Later)

The teams winning the AI cost game are not the ones with the biggest budgets. They are the ones with the smartest architectures.

Semantic caching is no longer a nice-to-have optimization. In the post-April 2026 pricing landscape, it is infrastructure. The 10x pricing differential between cached and uncached input tokens, and the near-zero cost of a semantic hit, mean your cache hit rate directly determines your unit economics.

Start with GPTCache and Redis. Measure your semantic overlap by running embeddings on your last 30 days of queries and computing similarity distributions. You will be shocked how many unique queries are actually variations on the same theme.
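For that overlap measurement, a rough sketch is below. It assumes you have already embedded each logged query into a list of floats with whatever model you plan to use for the cache. The function names are hypothetical, and the pairwise loop is quadratic, so sample your logs before running it on a month of traffic.

```python
import math


def cosine(a, b):
    """Cosine similarity between two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest_neighbor_similarities(vectors):
    """For each query vector, the similarity to its closest other query."""
    sims = []
    for i, v in enumerate(vectors):
        others = (cosine(v, w) for j, w in enumerate(vectors) if j != i)
        sims.append(max(others, default=0.0))
    return sims


def share_above(similarities, threshold):
    """Fraction of queries whose nearest neighbor clears the threshold."""
    if not similarities:
        return 0.0
    return sum(s >= threshold for s in similarities) / len(similarities)
```

The share above your chosen threshold is only a rough, optimistic estimate of the semantic hit rate, since it ignores arrival order and invalidation. It is still enough to tell you whether the cache is worth building.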

Build the three-tier architecture. Tune your thresholds. Set up proper invalidation. Watch your costs drop and your response times plummet.

The semantic cache revolution is not coming. It is already here. The only question is whether you are paying attention or paying the price.

Ready to cut your AI inference costs by 70%? Start instrumenting your query patterns this week. The data will reveal exactly how much money you are leaving on the table.
