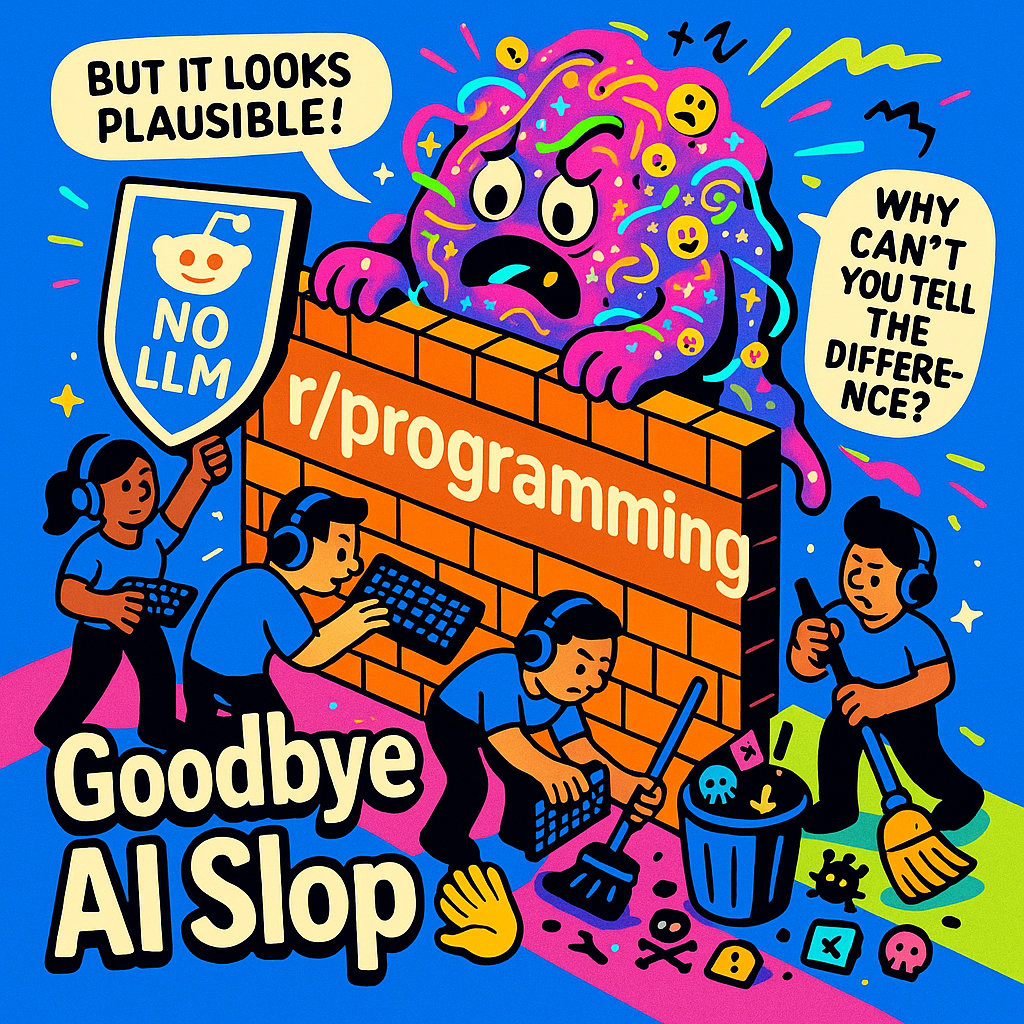

More than three years ago, Stack Overflow tried to ban ChatGPT-generated answers and failed. Yesterday, r/programming succeeded, and in doing so revealed something troubling about what happened to developer communities in 2025.

"I spent five years bleeding for this and you can't tell the difference between me and a chatbot?"

That comment from a developer on Hacker News captures something raw that I have been sensing across technical communities for months. It is not just fatigue with low-quality AI content. It is an identity crisis. And r/programming's decision to ban all LLM-generated content marks a turning point we have been heading toward since the AI boom began.

What Just Happened: The r/Programming Ban Explained

On April 3, 2026, moderators of r/programming, one of the largest programming communities on the internet with over 5 million members, announced a temporary ban on all LLM-generated content. The Hacker News thread discussing the announcement drew 190 points and 209 comments. The sentiment was "strongly supportive" according to community observers.

The ban is explicit: posts generated by large language models are no longer welcome. Moderators are actively removing content that violates this policy. This isn't a suggestion or a guideline; it is enforcement.

What makes this significant isn't just the size of the community. It is the precedent. When the largest programming forum on Reddit decides AI-generated content has crossed a line, the rest of the developer ecosystem takes notice.

This Is Not the First Time, and That Is the Problem

To understand why this ban matters now, you need the timeline:

- December 2022: Stack Overflow bans ChatGPT-generated answers, citing quality concerns and the difficulty of verifying AI-written code.

- June to August 2023: Stack Overflow moderators go on strike. The company had secretly partnered with OpenAI to feed user content into ChatGPT training while telling moderators to stop deleting AI-generated answers. The message from moderators was clear: "If I can't delete GPT's plausible-looking spew, what's the point?"

- 2023: r/programming deals with "astroturfing" incidents. These were coordinated campaigns of AI-generated content designed to promote products or agendas.

- April 2026: r/programming implements its temporary LLM ban.

See the pattern? Each escalation failed to solve the underlying problem. Stack Overflow's ban collapsed under corporate interests. Moderator trust was destroyed. And the AI content kept coming.

But r/programming's approach is different. They are not asking. They are excluding. Active removal beats unenforceable policy when the stakes are community integrity.

The Trust Collapse: By the Numbers

The 2025 Stack Overflow Developer Survey published last May tells part of the story. Favorable sentiment toward AI tools dropped from over 70% to just 60%. Only 29% of developers now say they trust the accuracy of AI-generated output.

But the real damage shows in the community metrics. According to the site's public community metrics dashboard, Stack Overflow's question volume is down 78% from its December 2025 baseline. Compared to the 2014 peak, we are looking at 87% fewer questions. The platform that defined programming Q&A for a generation is bleeding out.

Why? Because "Contribution volume is up; signal-to-noise is down. Maintainers can no longer assume a PR represents genuine investment."

That observation from a Hacker News commenter cuts to the heart of it. When AI can generate plausible-looking code, documentation, and discussion at scale, the cost of participation drops to zero, and so does the value of participation. Communities built on earned expertise collapse when expertise becomes indistinguishable from generation.

You have probably seen the term "AI slop" circulating. It started as a dismissive label for low-quality AI-generated content. Now it is a recognized category of digital pollution. Computer Weekly called 2025 the beginning of a "grassroots backlash era." The phrase "Your AI slop bores me" went viral as a rallying cry for developers tired of wading through machine-generated mediocrity to find authentic human insight.

Why Now? The Saturation Tipping Point

Here is the critical insight from community analysis: twelve months ago was the experimental phase. Now we are in the exclusion phase.

The difference is saturation. In 2024, AI-generated content in programming communities was still mostly recognizable. The tells were obvious: overly formal language, hallucinated APIs, and confident assertions about non-existent features. Experienced developers could spot it, filter it, and move on.

In 2025, the models got better. The line between "competent human developer" and "competent AI assistant" blurred. This happened not because AI achieved genuine understanding, but because it achieved genuine-sounding output.

This creates a trust collapse that no amount of tooling can fix. When you cannot tell if a code review, a bug report, or a tutorial came from someone who spent years mastering their craft or from a model trained on the output of people who spent years mastering their craft, the concept of community expertise collapses.

"Vibe coding", the practice of generating code through AI prompts without understanding how it works, accelerated this trend. Developers who could not explain their own pull requests started flooding repositories with AI-generated submissions. Maintainers faced a choice: accept plausible-looking code they could not verify, or reject contributions they could not distinguish from genuine effort.

The r/programming ban is a response to this saturation. When the volume of AI content crosses a threshold, communities must choose between drowning in slop or building walls. r/programming chose walls.

The Identity Crisis at the Heart of It

Return to that Hacker News comment: "I spent five years bleeding for this and you can't tell the difference between me and a chatbot?"

This isn't about technology. It is about identity. Programming communities have always been meritocracies in principle. They are places where your code speaks for you, where knowledge earned through effort carries weight, and where expertise has value because it is scarce.

AI removes that scarcity. It does this not by making everyone knowledgeable, but by making knowledge indistinguishable from its simulation.

For senior developers, this creates a kind of existential anxiety. The skills they spent years developing, their deep understanding of systems, edge cases, and performance implications, can now be approximated by tools available to anyone with a credit card. The approximation isn't perfect, but it is good enough to blur lines that once seemed clear.

The r/programming ban isn't anti-AI. It is pro-community. It asserts that there is value in human-generated technical discussion that goes beyond the information content of the words. That value includes context, experience, accountability, and the intangible signal that someone who has wrestled with real problems is sharing what they learned.

What Is Next: The Fragmentation of Tech Communities

The Hacker News consensus suggests r/programming won't be the last. "Personally can't wait for no-AI communities to proliferate," one commenter wrote. That sentiment, resignation mixed with hope, echoes across developer forums.

But here is the complication: outright bans might not scale. GitHub's approach offers an alternative. They are experimenting with "Good Egg," a trust-scoring system for contributors that attempts to verify human identity and contribution patterns. Rather than banning AI content entirely, they are trying to build infrastructure that elevates verified human contributions.

This creates a deeply fractured reality for open-source maintainers who have to live in both ecosystems simultaneously. A maintainer might enforce a strict no-AI policy on their project's subreddit or Discord server while relying on automated verification scores to filter pull requests on GitHub. The cognitive load of switching between these different paradigms of trust is staggering.
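To make the idea of score-based filtering concrete, here is a minimal sketch of how a contributor trust score could route pull requests. It is not Good Egg's actual algorithm, which this article does not describe. The `Contributor` fields, the weights, and the threshold are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Contributor:
    # Hypothetical signals; a real system would choose its own.
    account_age_days: int
    merged_prs: int
    rejected_prs: int
    reviews_given: int


def trust_score(c: Contributor) -> float:
    """Combine contribution signals into a score between 0 and 1.

    The weights (0.3, 0.5, 0.2) are arbitrary assumptions.
    """
    # Account age saturates after one year.
    age = min(c.account_age_days / 365, 1.0)
    # Share of past pull requests that were merged.
    total_prs = c.merged_prs + c.rejected_prs
    merge_rate = c.merged_prs / total_prs if total_prs else 0.0
    # Review activity saturates after 20 reviews.
    review = min(c.reviews_given / 20, 1.0)
    return 0.3 * age + 0.5 * merge_rate + 0.2 * review


def triage(c: Contributor, threshold: float = 0.5) -> str:
    """Route a pull request based on its author's score."""
    if trust_score(c) >= threshold:
        return "standard review"
    return "extra scrutiny"


if __name__ == "__main__":
    veteran = Contributor(account_age_days=730, merged_prs=40,
                          rejected_prs=10, reviews_given=25)
    newcomer = Contributor(account_age_days=3, merged_prs=0,
                           rejected_prs=0, reviews_given=0)
    print(triage(veteran))
    print(triage(newcomer))
```

Note what a score like this does and does not do. It never decides whether a given pull request was written by a model. It only decides how much reviewer attention the author's history has earned, which also means a genuine first-time contributor starts at the bottom.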

Both approaches face the same fundamental problem: verification is hard. As AI-generated content becomes more sophisticated, the cost of distinguishing human from machine output rises. Communities must spend more resources on verification or accept higher rates of false positives, where legitimate human contributors are flagged as AI-generated.
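A quick back-of-the-envelope calculation shows why this trade-off bites. Every number below is made up for illustration: the post volume, the share of AI-written posts, and the detector's hit and error rates are assumptions, not measurements from r/programming or any real classifier.

```python
def moderation_outcomes(total_posts, ai_share, catch_rate, wrong_flag_rate):
    """Estimate outcomes for a hypothetical AI-content detector.

    total_posts     -- posts reviewed in some period (assumed)
    ai_share        -- fraction of posts that are AI-generated (assumed)
    catch_rate      -- fraction of AI posts the detector flags (assumed)
    wrong_flag_rate -- fraction of human posts the detector flags (assumed)
    """
    ai_posts = total_posts * ai_share
    human_posts = total_posts - ai_posts

    ai_caught = ai_posts * catch_rate                        # correctly removed
    ai_missed = ai_posts - ai_caught                         # slips through
    humans_wrongly_flagged = human_posts * wrong_flag_rate   # removed unfairly

    flagged = ai_caught + humans_wrongly_flagged
    precision = ai_caught / flagged if flagged else 0.0
    return {
        "ai_caught": ai_caught,
        "ai_missed": ai_missed,
        "humans_wrongly_flagged": humans_wrongly_flagged,
        "precision": precision,
    }


if __name__ == "__main__":
    result = moderation_outcomes(
        total_posts=1000, ai_share=0.2, catch_rate=0.9, wrong_flag_rate=0.05
    )
    for name, value in result.items():
        print(f"{name}: {value:.2f}")
```

Under these assumed inputs, a detector that is wrong about only 5% of human posts still removes about 40 posts written by people, which is roughly 18% of everything it flags. Lower the AI share and that fraction climbs, because the same error rate is applied to a larger pool of human authors.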

The likely outcome isn't uniform acceptance or rejection of AI. It is fragmentation. "No-AI" communities will form alongside "AI-assisted" communities. The technical ecosystem will split based on preferences about authenticity, effort, and the value of human judgment.

Twelve months ago, we were in the experimental phase. Now we are in the exclusion phase. The question for 2026 isn't whether communities will restrict AI content. r/programming just answered that. The question is which restrictions will stick and what kind of technical culture we will build around the boundaries we draw.

Conclusion: A Line in the Sand

r/programming's ban isn't a policy decision. It is a line in the sand drawn by a community that reached its limit. The moderators aren't fighting progress. They are defending the conditions that make their community worth participating in.

The signal-to-noise problem in AI isn't getting solved by better models. If anything, better models make the problem worse by making noise harder to distinguish from signal. The solution communities are converging on isn't technical. It is social. Exclusion. Verification. Human judgment over automated scale.

For developers, this creates a choice about where to invest attention. The communities that thrive in the next phase of technical culture will be the ones that solve the trust problem. Some will do it through bans. Others will do it through verification tools. The specific mechanism matters less than the outcome: restoring conditions where expertise earned through effort carries more weight than expertise simulated through prompts.

r/programming's ban isn't the end of this story. It is the beginning of a necessary reckoning about what we value in technical communities and what we are willing to defend.
