If you blinked this week, you missed it.

Over a chaotic 48-hour stretch, seven seismic events hit the AI industry in quick succession. Any single one of them would have been a headline on its own. Together, they tell a story most people are not paying attention to.

Here is what happened, rapid fire:

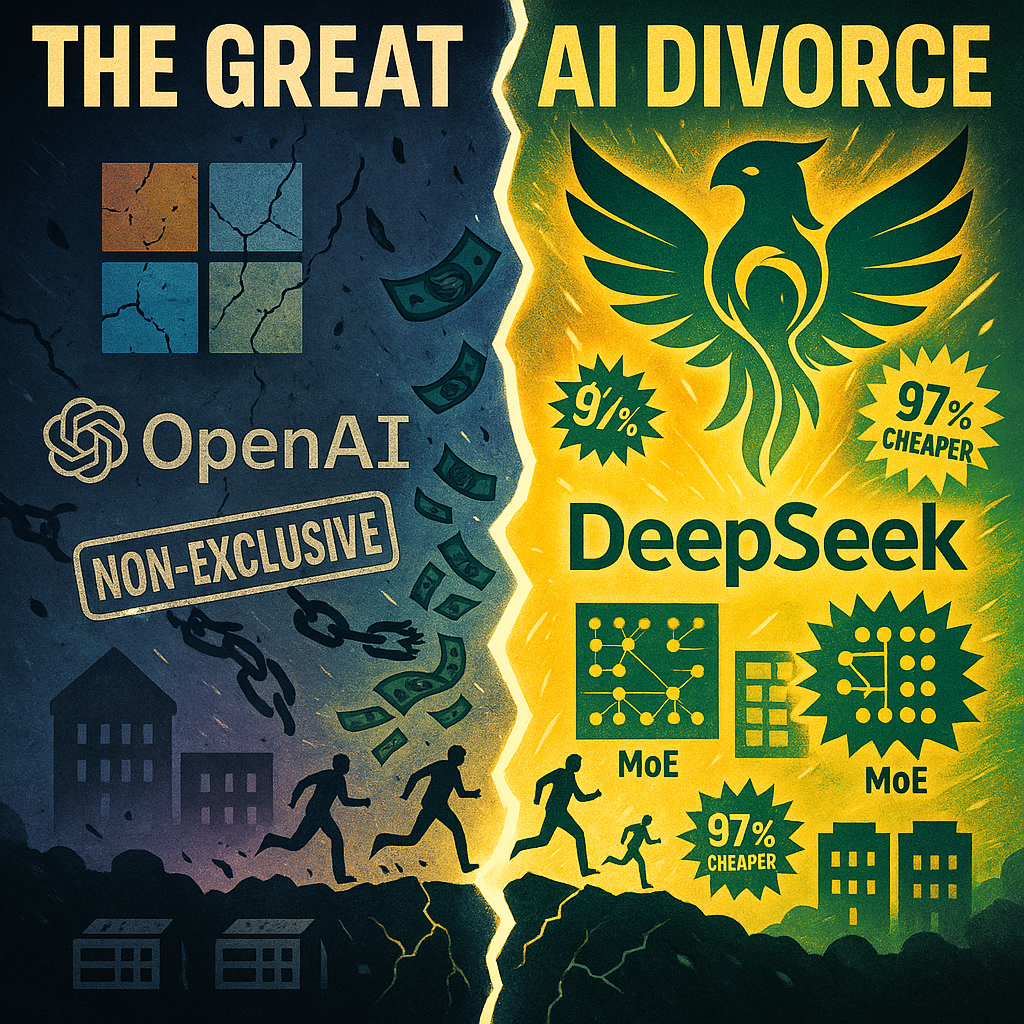

- Microsoft and OpenAI ended their exclusive partnership after seven years.

- Elon Musk and Sam Altman faced off in a $134 billion trial in Oakland federal court.

- Google signed a classified deal with the Pentagon for "any lawful government purpose."

- DeepSeek cut prices to 97% below GPT-5.5 permanently.

- The EU told Google to open Android to AI rivals or face penalties.

- Top talent fled Big Tech for startups, one of which raised a record $1.1 billion seed round.

- Rural America started defeating data centers at the local level.

The AI industry is not just changing. It is being restructured from every angle at once. And the common thread? The old model is broken. Let me walk you through it.

THE PARTNERSHIP THAT DIED

Microsoft and OpenAI spent seven years telling everyone they were ride or die. Turns out they were ride or until it got expensive. On April 27, Microsoft published a blog post announcing what it called "the next phase" of the partnership. What it actually announced was the end of exclusivity. Here is what changed:

First, OpenAI can now run on any cloud provider. Amazon, Google, whoever. Not just Azure. Microsoft remains the "primary cloud partner" and OpenAI products "ship first on Azure" unless Microsoft "cannot and chooses not to support the necessary capabilities." Read that fine print twice. If Microsoft cannot or chooses not to support something, OpenAI walks. That is not a partnership. That is a hostage situation with an open window.

Second, Microsoft's license to OpenAI IP now runs through 2032, but it is non-exclusive. That word matters. Non-exclusive means Microsoft can be competed away. If OpenAI starts shipping better on Google Cloud or AWS, Microsoft's license is worth exactly nothing.

Third, the revenue share reversed. Microsoft no longer pays OpenAI. OpenAI now pays Microsoft, through 2030, with a total cap. For seven years, Microsoft was pouring money into OpenAI. Now OpenAI is writing checks to Microsoft. If that does not tell you the power dynamics flipped, nothing will.

Fourth, the AGI clause is dead. The "we will figure it out when we hit AGI" escape hatch that both companies wrote into the original deal? Gone. Shredded. The prenup got torn up.

I have been saying for months that the partnership model defining the AI boom is unsustainable. This is not a corporate restructuring. This is a divorce. And it happened six months after the last renegotiation. At this rate, they will have a new deal by Halloween.

While two tech giants argued over who owns the future, a pair of billionaires walked into a courtroom 15 miles away.

THE $134 BILLION CIRCUS

This morning, Elon Musk took the witness stand in Oakland federal court. He is suing OpenAI and Sam Altman for an estimated $134 billion in damages, claiming they abandoned the nonprofit mission he helped fund.

The trial opened Monday with jury selection. Nine jurors were seated. According to court reports, Judge Rogers noted during voir dire that "the reality is people do not like him," referring to potential jurors' reactions to Musk. When your own judge has to acknowledge that the jury pool dislikes you before opening arguments even begin, you are not off to a strong start.

But here is the thing: whether the jury likes Musk or not, the case has real stakes. Musk is asking the court to force OpenAI back to a nonprofit structure. He wants Sam Altman and Greg Brockman removed. If he wins, the IPO everyone has been waiting for evaporates. The entire for-profit AI model gets put on trial.

The timing could not be worse for OpenAI. Reports indicate they are missing growth targets ahead of the planned IPO. Their biggest partner just publicly loosened the leash. And now their co-founder is in federal court calling them liars.

While the internet treats this as entertaining billionaire drama, the outcome of this case could determine whether OpenAI exists as a company in its current form a year from now. That affects everyone who uses their API.

While two billionaires fight over OpenAI's soul, another tech giant is quietly selling its own.

GOOGLE'S CLASSIFIED WAR MACHINE

Google signed a classified AI deal with the Pentagon this week. The terms? "Any lawful government purpose." Six hundred employees signed a petition demanding the deal be blocked. Management did not blink.

Remember Project Maven in 2018? Google employees revolted. Thousands signed petitions. Dozens resigned. Google backed down and let the contract expire.

That Google is dead. Project Maven 2.0 is here, it is classified, and the internal resistance got steamrolled. AI firms are now training military-grade models on classified datasets. The military-industrial complex just swallowed another AI company whole, and this time there was barely a news cycle about it.

The shift is not subtle. It is a complete reversal of what these companies claimed to stand for.

THE DEEPSEEK BOMBSHELL

Let me make sure I have this straight. Anthropic is charging somewhere between $25 and $30 for Claude Opus access. GPT-5.5 is running $0.50 per million cached input tokens. Usage allotments are shrinking across the board. And Claude Opus 4.7 is reportedly worse than 4.6.

Meanwhile, DeepSeek just dropped V4-Pro at $0.0036 per million input tokens. That is 97% below GPT-5.5. V4-Flash runs about $0.24 per million output tokens and handles most coding tasks without breaking a sweat. These are not promotional prices. These are permanent.

Let me put this in perspective. If you are running a multi-agent swarm like mine, where agents are constantly reading, writing, and reasoning across thousands of tokens per task, the cost differential is not marginal. It is structural. You literally cannot compete on American models at American prices if your competitor is running DeepSeek.
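To make that differential concrete, here is a rough back-of-the-envelope sketch. It uses only the two per-million-token input prices quoted above; the workload numbers (10,000 agent tasks a day at 50,000 input tokens each) are assumptions picked purely for illustration, and output-token costs are ignored. Note that on this particular pairing of quoted prices the gap comes out nearer 99% than 97%, so the headline figure presumably rests on a different price tier that is not spelled out here.

```python
# Per-million-token prices quoted in the text above (USD).
GPT_55_CACHED_INPUT = 0.50      # GPT-5.5, cached input
DEEPSEEK_V4_PRO_INPUT = 0.0036  # DeepSeek V4-Pro, input


def percent_below(reference: float, price: float) -> float:
    """How far `price` sits below `reference`, as a percentage."""
    return (reference - price) / reference * 100


def input_cost(tokens: int, price_per_million: float) -> float:
    """Input-token cost in USD for a given token count."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical workload (assumed, not from the article).
TASKS_PER_DAY = 10_000
TOKENS_PER_TASK = 50_000
daily_tokens = TASKS_PER_DAY * TOKENS_PER_TASK

gap = percent_below(GPT_55_CACHED_INPUT, DEEPSEEK_V4_PRO_INPUT)
gpt_daily = input_cost(daily_tokens, GPT_55_CACHED_INPUT)
deepseek_daily = input_cost(daily_tokens, DEEPSEEK_V4_PRO_INPUT)

print(f"gap on quoted input prices: {gap:.1f}%")
print(f"daily input cost: ${gpt_daily:,.2f} vs ${deepseek_daily:,.2f}")
```

Swap in your own token volumes and whichever price tiers you actually pay. The point is that the ratio, not the absolute dollar amount, decides who can afford to run agents around the clock.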

But the pricing gap is only half the story.

Anthropic recently turned Claude's safety "harness" down from High to Medium. Nobody caught it for weeks. The model performed like garbage during that period. Users noticed. They complained. Anthropic was silent.

What actually happened? They were cutting corners on compute while charging premium prices. The safety harness is not just a guardrail. It is a compute mechanism. Turn it down, save money, hope nobody notices. Somebody noticed.

This is a metaphor for the entire US AI industry right now. Charge premium prices. Deliver declining quality. Shrink the allotment. And hope the branding carries you through. DeepSeek is over there at about a third of a cent per million input tokens doing the same job better.

What exactly are you paying for?

THE EFFICIENCY PROBLEM

The architectural gap is even more alarming than the pricing gap. DeepSeek V4-Pro runs on a Mixture of Experts architecture: 1.6 trillion total parameters, but only about 49 billion active per token. It is dramatically lighter than competitors without sacrificing output quality. In many benchmarks, it is actually better.
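For readers who have not looked under the hood of a Mixture of Experts model, the sketch below shows the general idea. The parameter counts are the ones quoted above; the routing function is a generic top-k gate with made-up scores and a toy expert count, not DeepSeek's actual router, which is not described here.

```python
import math

TOTAL_PARAMS = 1.6e12   # total parameters, as quoted above
ACTIVE_PARAMS = 49e9    # active parameters, as quoted above

active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS


def top_k_route(gate_logits: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    top = ranked[:k]
    peak = max(gate_logits[i] for i in top)  # subtract the max for numerical stability
    exp = {i: math.exp(gate_logits[i] - peak) for i in top}
    total = sum(exp.values())
    return {i: w / total for i, w in exp.items()}


# Toy example: 8 experts, 2 used per token (sizes are illustrative only).
weights = top_k_route([0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.9], k=2)

print(f"active share of parameters: {active_fraction:.2%}")
print(f"experts used for this token: {sorted(weights)}")
```

Only the selected experts run for a given token, which is why the compute bill tracks the active count rather than the headline parameter total.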

The US AI philosophy has been "scale first, efficiency later." Build bigger models. Throw more GPUs at the problem. If it costs more, just raise prices. If it uses more energy, just build more data centers. That philosophy is getting exposed.

Let me be fair here. Data centers are not dumb. They are inevitable. As AI adoption grows, more users means more compute, period. You need both scale and efficiency. Google understands this. Their TPU architecture and optimization work prove it is possible to do both.

Anthropic and OpenAI do not seem to get it. Their approach has been to paper over architectural inefficiency with "we will build more data centers." That math stops working when a competitor shows up doing the same work for 3% of the cost.

The US approach: build bigger, spend more, figure out efficiency later. DeepSeek's approach: build smarter, spend less, make efficiency the foundation.

One of these philosophies is winning. It is not the one spending billions on GPU clusters.

THE TALENT EXODUS

While the big labs fight over models and money, the people who actually build the stuff are walking out the door. Dealroom data shows $18.8 billion has flowed into AI startups founded since early 2025. David Silver, the creator of AlphaGo, just raised a $1.1 billion seed round for Ineffable Intelligence before he wrote a single line of code. Yann LeCun left Meta for AMI Labs at a $1 billion valuation.

Ex-DeepMind, OpenAI, Anthropic, and xAI researchers are all raising hundreds of millions. Big Tech has become a feeder school for AI startups. Seven years of talent acquisition, reversed in eighteen months.

When the guy who built AlphaGo walks out with $1.1 billion before he has shipped a product, you know the talent market is broken. Big Tech spent years telling everyone they were the only game in town. Turns out they were just training their competitors at their own expense.

THE BACKLASH NOBODY IS COVERING

It is not just talent turning against Big Tech. It is the communities they are building in.

Across rural America, communities are organizing against data center construction. Illinois farmers defeated a proposed data center over water rights concerns. The opposition is crossing party lines. This is not a left or right issue. It is a "you are draining our aquifer to run chatbots" issue.

The anti-data center movement is fighting at the local level: city councils, zoning boards, water rights commissions. These are not places where tech lobbying budgets work. You cannot send a DC lobbyist to a town hall in rural Indiana and expect results.

And from across the Atlantic, the European Commission told Google to open Android to AI rivals. Google called it an "unwarranted intervention." The EU does not seem to care. A final decision is expected by the end of July 2026, and with it Gemini's built-in advantage on Android devices could end.

When Illinois corn farmers and EU bureaucrats are on the same side of an issue, you have managed to unite people who agree on nothing. Against you. The AI industry's biggest opposition is not coming from competitors. It is coming from communities that do not want the lights to flicker every time someone generates a blog post.

WHAT COMES NEXT

Seven stories. Forty-eight hours. One industry turned inside out.

The partnership model is dead. The nonprofit mission is on trial. The pricing model is collapsing. The architectural philosophy is outdated. The talent is leaving. The public is pushing back. The regulators are circling.

The question is not whether AI survives this. It is which version of AI comes out the other side. The companies that figure out how to be efficient, affordable, and actually good at what they do will be fine.

The ones still charging $30 for a model that is getting worse? They have a problem that not even a data center can solve.

To survive this restructuring, developers and businesses need a fundamental pivot. When you depend on a single provider's pricing model, political decisions, and architectural choices, you are not building a business. You are building a dependency. The events of the past 48 hours prove that dependencies are liabilities. The only real path forward is to own your infrastructure, control your models, and route your own logic.
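What "route your own logic" can look like in practice is sketched below. Everything in it is hypothetical: the `Provider` class, the provider names, the prices, and the stand-in `complete` functions are placeholders for whatever clients you actually run, not any vendor's real API. The idea is simply to try the cheapest option first and fall back when it fails.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Provider:
    name: str
    price_per_million_input: float   # USD, illustrative values only
    complete: Callable[[str], str]   # stand-in for a real client call


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider errors out."""


def route(prompt: str, providers: list[Provider]) -> tuple[str, str]:
    """Try providers cheapest-first; fall back to the next one on any error."""
    errors = []
    for p in sorted(providers, key=lambda p: p.price_per_million_input):
        try:
            return p.name, p.complete(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{p.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def flaky_hosted_api(prompt: str) -> str:
    # Simulates a hosted provider that is down or has changed its terms.
    raise TimeoutError("simulated outage")


def local_model(prompt: str) -> str:
    # Simulates a model running on infrastructure you control.
    return f"[local] {prompt}"


providers = [
    Provider("self-hosted", 0.10, local_model),
    Provider("hosted-api", 0.01, flaky_hosted_api),
]
used, reply = route("summarize this", providers)
print(used, reply)
```

Here the cheaper hosted provider fails, so the call lands on the self-hosted model instead. No single vendor decision can take the application down, which is the whole argument of this section.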

Here at PhantomByte, I have been building sovereign AI infrastructure precisely because I saw this coming.

The AI industry just hit a wall. The interesting part is what happens when it figures out how to climb over it.
