I watched 47 minutes of high-level reasoning vanish without so much as a traceback last Tuesday. It wasn't just a crash; it was a lobotomy. My agent, a custom CrewAI researcher I had spent three days tuning, was midway through a deep dive into sovereign debt cycles when my local power flickered. When I brought it back up, it was a blank slate. It had "memory" in its vector database, sure, but it had no persistence. It forgot it was halfway through drafting the third paragraph. It forgot the specific nuance of the last three failed API calls it had just pivoted away from.

Everyone in this industry is obsessed with "agent memory." They talk about RAG, long-term vector storage, and "remembering" user preferences as if it were the holy grail. They are ignoring the real problem: persistence. If your agent cannot survive a reboot with its internal state, lineage, and "train of thought" intact, you aren't building an autonomous employee. You are building a very expensive, very fragile goldfish.

With the release of CrewAI 1.14.2 on April 17 and the subsequent 1.14.3 patch, the game changed. We finally moved past "saving a file" to true stateful persistence.

The Brutal Truth About "Stateless" Agents

We have been lying to ourselves about agent reliability. I have seen production "swarms" that cost $4.00 an hour in tokens crumble because a Docker container restarted. In the old paradigm (anything before April 2026), an agent was an ephemeral spark. You gave it a task, it went into a loop, and if that loop broke, you started from zero.

I have had agents get 90% through a 5,000-word technical audit, only to hit a rate limit on a secondary tool and hallucinate their way back to the beginning because they couldn't "resume" from the exact point of failure. That is not just inefficient; it is a failure of engineering.

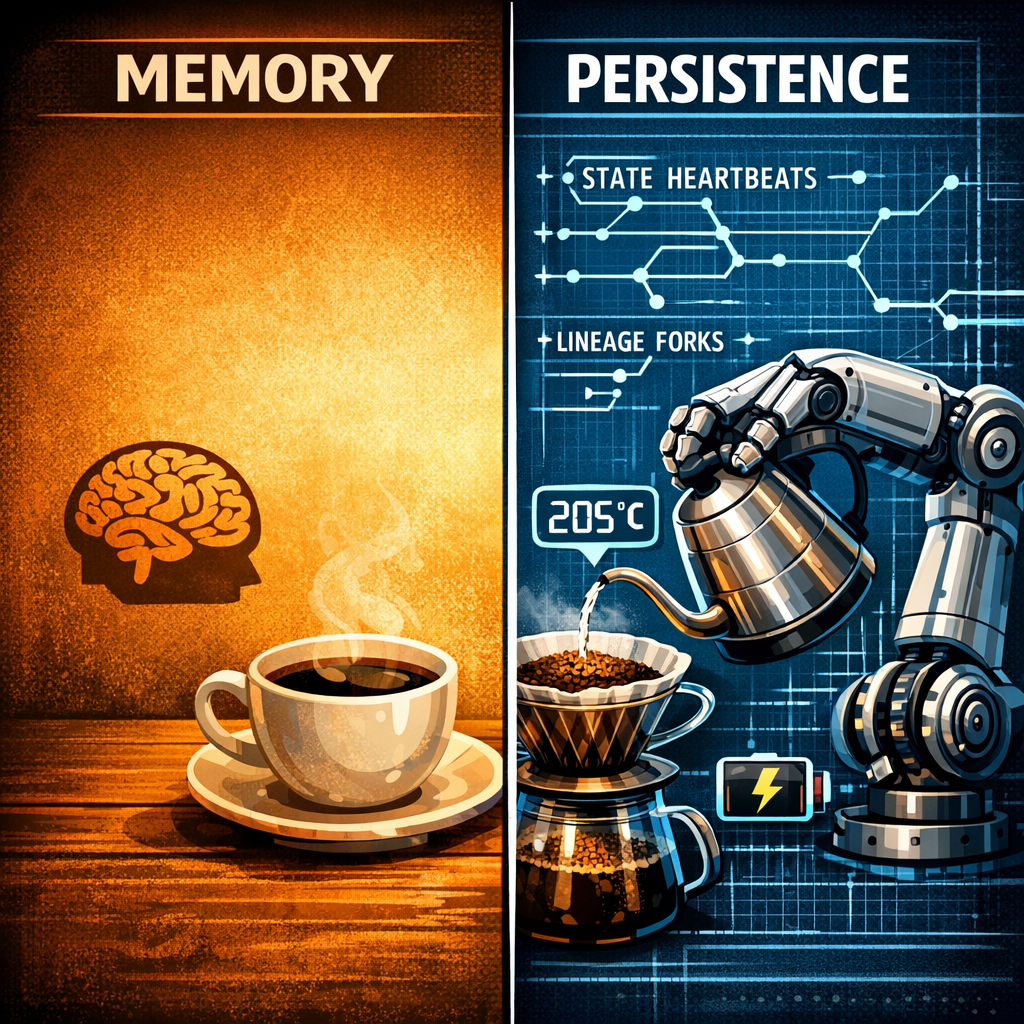

Agent memory is knowing that I like my coffee black.

Agent persistence is knowing that the agent already poured the water, ground the beans, and was just waiting for the kettle to hit 205 degrees before the power went out.

CrewAI 1.14.2: The Architecture of the "Save Game"

CrewAI 1.14.2 introduced three checkpoint commands that should be burned into your brain: resume, diff, and prune. This isn't just about dumping a JSON file to disk. It is about lineage tracking.

The Fork and Lineage System

In 1.14.2, when an agent hits a checkpoint, it is not just saving its variables. It is saving a snapshot of the execution graph. If you are running a complex multi-agent flow and Agent B fails, you don't have to re-run Agent A's 12-minute research phase. You fork the state.
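Stripped of any framework, the fork idea looks roughly like this. This is not CrewAI's implementation; it is a minimal sketch of a hypothetical in-memory checkpoint store (`CheckpointStore`, `save`, `fork` and `lineage` are names invented for illustration) where every snapshot records its parent, so a failed Agent B can be retried from Agent A's last snapshot instead of re-running A.

```python
import copy
import itertools
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class Checkpoint:
    id: int
    parent: Optional[int]  # id of the snapshot this one was derived from
    agent: str             # which agent produced this snapshot
    state: dict[str, Any]  # deep copy of the execution state at this point


@dataclass
class CheckpointStore:
    checkpoints: dict[int, Checkpoint] = field(default_factory=dict)
    _ids: itertools.count = field(default_factory=lambda: itertools.count(1), repr=False)

    def save(self, agent: str, state: dict[str, Any], parent: Optional[int] = None) -> int:
        """Record a snapshot; deep-copied so later mutation can't rewrite history."""
        cid = next(self._ids)
        self.checkpoints[cid] = Checkpoint(cid, parent, agent, copy.deepcopy(state))
        return cid

    def fork(self, from_id: int) -> dict[str, Any]:
        """Return an independent copy of a snapshot's state to resume from."""
        return copy.deepcopy(self.checkpoints[from_id].state)

    def lineage(self, cid: int) -> list[int]:
        """Walk parent pointers back to the root, returned oldest first."""
        chain = []
        current: Optional[int] = cid
        while current is not None:
            chain.append(current)
            current = self.checkpoints[current].parent
        return list(reversed(chain))


store = CheckpointStore()
a_done = store.save("researcher", {"notes": ["12 minutes of research"]})
b_failed = store.save("writer", {"draft": "para 1", "error": "rate limit"}, parent=a_done)

# Agent B failed: fork from Agent A's snapshot instead of re-running A.
retry_state = store.fork(a_done)
b_retry = store.save("writer", {**retry_state, "draft": "para 1 (retry)"}, parent=a_done)
print(store.lineage(b_retry))  # [1, 3]
```

The failed branch (checkpoint 2) stays in the store, which is what makes it inspectable later; the retry simply hangs off the same parent.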

```python
# The new way to handle long-running persistence in CrewAI 1.14.2+
from crewai import Agent, Crew, Process, Task

CHECKPOINT_DB = "./checkpoints/v1_swarm.db"

researcher = Agent(
    role="Persistence Specialist",
    goal="Track state transitions across reboots",
    backstory="I never forget a stack trace.",
    allow_delegation=False,
    memory=True,
    cache=True,       # Critical for persistence
    checkpoint=True,  # The 1.14.2 game-changer
)

# Placeholder task; replace with the tasks from your broader script.
task1 = Task(
    description="Research sovereign debt cycles and draft a summary.",
    expected_output="A three-paragraph draft.",
    agent=researcher,
)

# Implementation of the resume logic
my_crew = Crew(
    agents=[researcher],
    tasks=[task1],
    process=Process.sequential,
    full_output=True,
    persistence_handler=CHECKPOINT_DB,
)

# Normal run: result = my_crew.kickoff()
# If the script crashes, bypass crew.kickoff() on the next run and call:
result = my_crew.resume_from_checkpoint(CHECKPOINT_DB)
```

The diff command shows exactly what changed in the agent's internal state between Step 44 and Step 45. When my agents start "looping" and run the same search over and over, I no longer have to kill the process and guess. I run a diff on the checkpoint and see that the "thought" string hasn't evolved.
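The loop check itself is simple enough to sketch. This is not the CrewAI diff command; it is a hypothetical `diff_states` helper that compares two snapshot dicts, plus a `looks_stuck` heuristic that flags a loop when the step counter advances but the "thought" field does not. The snapshot shape (the `step`, `thought` and `last_tool` keys) is an assumption for illustration.

```python
from typing import Any


def diff_states(before: dict[str, Any], after: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return {key: (old, new)} for every key added, removed, or changed."""
    missing = object()  # sentinel so a stored None isn't confused with "absent"
    changes = {}
    for key in sorted(before.keys() | after.keys()):
        old, new = before.get(key, missing), after.get(key, missing)
        if old != new:
            changes[key] = (None if old is missing else old, None if new is missing else new)
    return changes


def looks_stuck(before: dict[str, Any], after: dict[str, Any]) -> bool:
    """Heuristic: the step counter moved but the 'thought' string did not."""
    changes = diff_states(before, after)
    return "step" in changes and "thought" not in changes


step_44 = {"step": 44, "thought": "search sovereign debt cycles", "last_tool": "search"}
step_45 = {"step": 45, "thought": "search sovereign debt cycles", "last_tool": "search"}
print(diff_states(step_44, step_45))  # {'step': (44, 45)}
print(looks_stuck(step_44, step_45))  # True
```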

The GRIL Paper: Pausing vs. Fabricating

If you want to understand why persistence matters, you need to read the GRIL paper (Grounding Reasoning in In-Memory Lineage, arXiv:2604.19656). It dropped right around the CrewAI release, and the timing is no accident.

The researchers found that agents without persistence exhibit "recovery hallucination." When an agent is forced to restart a task it has partially finished, it tries to "fabricate" the context of the missing work to save token costs. The paper reports that agents using In-Memory Lineage (IML), the same technique CrewAI just implemented, achieve a 45% better premise detection rate.

Why? Because the agent isn't guessing what it was doing. It knows. It has the raw, unpolished state of its previous reasoning loops. I have seen this in my own tests. An agent resuming from a 1.14.2 checkpoint is focused. An agent starting over "with memory" is often confused and tries to reconcile its past search results with a fresh execution start. It is like waking up in the middle of a marathon and trying to remember if you already ran the uphill bit.

29% Faster: The CrewAI 1.14.3 Optimization

If 1.14.2 gave us the "soul" of persistence, 1.14.3 gave us the "body." It delivered 29% faster cold starts. This is massive for long-running agents that live in serverless environments.

Before 1.14.3, spinning up a stateful agent meant a heavy overhead of loading the persistence layer, checking DB integrity, and re-hydrating the LLM context. Now, with E2B sandbox integration, the persistence is native to the environment. I have been running my "Swarm Beta" on E2B, and the handoff between "sleeping" and "working" is almost instant.

However, relying solely on cloud sandboxes like E2B still leaves your agents vulnerable to external outages. True sovereign AI demands that your stack can survive even when the connection to the cloud is severed. That is where local anchors come into play.

Local Persistence: Ollama v0.21.1 and Kimi K2.6

You cannot talk about persistence if you are 100% reliant on a cloud API that can blink out of existence. True agent persistence needs a local anchor.

Ollama v0.21.1 added native support for Kimi K2.6. This is critical because Kimi excels at long-context reasoning with remarkably low state decay. By running Kimi locally through Ollama, I can maintain an agent's state even when my internet goes down.

I recently ran a test: a 12-hour continuous reasoning task in which I manually disconnected the WAN every hour. With CrewAI's checkpointing and Ollama's state management combined, the agent didn't drop a single logical thread. It just paused, waited for the local_provider to signal readiness, and resumed. No magic, just hardened, redundant engineering.
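The "pause, wait, resume" behaviour can be approximated with a readiness gate in front of each checkpointed step. This is a sketch under two assumptions: Ollama is listening on its default port 11434, and its `GET /api/tags` model-list endpoint is a good-enough liveness check. `ollama_is_up` and `wait_until_ready` are hypothetical helpers, not the local_provider mentioned above and not part of CrewAI.

```python
import http.client
import time
import urllib.request
from typing import Callable


def ollama_is_up(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """True if the local Ollama server answers its model-list endpoint."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, http.client.HTTPException):  # URLError, refused, timeouts, bad replies
        return False


def wait_until_ready(
    check: Callable[[], bool],
    max_wait: float = 300.0,
    initial_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll `check` with exponential backoff (capped at 30 s) until it passes.

    Returns False once the accumulated sleep time reaches `max_wait`.
    `sleep` is injectable so the backoff can be tested without real waiting.
    """
    waited, delay = 0.0, initial_delay
    while True:
        if check():
            return True
        if waited >= max_wait:
            return False
        sleep(delay)
        waited += delay
        delay = min(delay * 2, 30.0)
```

Calling `wait_until_ready(ollama_is_up)` before each step turns a dropped provider into a pause instead of a failed step, and the checkpoint is only touched once the gate returns True.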

How to Build for Persistence (A Hard-Learned Checklist)

If you are still building agents by just hitting python main.py and hoping for the best, you are doing it wrong. Here is how I have re-tooled for the persistence era:

Kill the "Final Answer" Obsession: Stop designing tasks that only return a result at the end. Design tasks that emit "State Heartbeats" every 3 steps.
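A heartbeat can be as plain as a JSON file that is rewritten every few steps. Here is a minimal sketch; `write_heartbeat` and `run_with_heartbeats` are hypothetical helpers, and the state shape (a dict with a `step` counter) is an assumption. Each write goes to a temp file first and is swapped in with `os.replace`, so a crash mid-write leaves the previous heartbeat intact rather than a half-written file.

```python
import json
import os
import tempfile
from typing import Any, Callable


def write_heartbeat(path: str, state: dict[str, Any]) -> None:
    """Atomically replace `path` with the JSON-encoded state."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(state, f)
            f.flush()
            os.fsync(f.fileno())  # push the bytes to disk before the swap
        os.replace(tmp, path)     # rename is atomic on POSIX filesystems
    except BaseException:
        os.unlink(tmp)            # never leave a half-written temp file behind
        raise


def run_with_heartbeats(
    step: Callable[[dict[str, Any]], dict[str, Any]],
    state: dict[str, Any],
    total_steps: int,
    path: str,
    every: int = 3,
) -> dict[str, Any]:
    """Run `step` until state["step"] reaches total_steps, heartbeating every `every` steps.

    If a heartbeat file already exists, resume from it instead of `state`.
    Assumes `step` returns a new state with state["step"] incremented by one.
    """
    if os.path.exists(path):
        with open(path) as f:
            state = json.load(f)
    while state["step"] < total_steps:
        state = step(state)
        if state["step"] % every == 0:
            write_heartbeat(path, state)
    write_heartbeat(path, state)  # always record the final state too
    return state
```

On restart, calling `run_with_heartbeats` with the same `path` picks up from the last heartbeat, so at most `every - 1` steps are replayed. That replay window is one reason the tools themselves need to be idempotent.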

Use the prune Command: Persistence is heavy. A long-running agent can generate 100MB of state data in a day. CrewAI 1.14.2's prune allows you to clear out the intermediate reasoning fluff while keeping the lineage forks.
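Conceptually, pruning keeps the skeleton and drops the flesh. The sketch below is a guess at the idea, not CrewAI's prune implementation: given checkpoint records with parent pointers (a hypothetical `Record` schema, with `payload_bytes` standing in for the stored reasoning trace), it keeps roots, fork points (two or more children), leaves, and the most recent `keep_last` records, then re-parents survivors so lineage stays walkable.

```python
from collections import Counter
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Record:
    id: int                # assumed to increase monotonically with time
    parent: Optional[int]
    payload_bytes: int     # stand-in for the size of the stored reasoning trace


def prune(records: list[Record], keep_last: int = 2) -> list[Record]:
    """Drop intermediate checkpoints; keep roots, fork points, leaves and recent history."""
    by_id = {r.id: r for r in records}
    child_count = Counter(r.parent for r in records if r.parent is not None)
    newest_first = sorted(records, key=lambda r: r.id, reverse=True)
    recent = {r.id for r in newest_first[:keep_last]}

    keep = {
        r.id
        for r in records
        if r.parent is None          # root of a lineage
        or child_count[r.id] != 1    # fork point (2+ children) or leaf (0 children)
        or r.id in recent
    }

    def nearest_kept_ancestor(parent: Optional[int]) -> Optional[int]:
        while parent is not None and parent not in keep:
            parent = by_id[parent].parent
        return parent

    # Re-point each survivor at its closest surviving ancestor.
    return [replace(r, parent=nearest_kept_ancestor(r.parent)) for r in records if r.id in keep]


# 1 -> 2 -> 3 -> 4 -> 5, with a fork at 3 -> 6
history = [Record(1, None, 40), Record(2, 1, 900), Record(3, 2, 850),
           Record(4, 3, 700), Record(5, 4, 60), Record(6, 3, 65)]
kept = prune(history, keep_last=1)
print([(r.id, r.parent) for r in kept])  # [(1, None), (3, 1), (5, 3), (6, 3)]
print(sum(r.payload_bytes for r in history) - sum(r.payload_bytes for r in kept))  # 1600
```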

Atomic Tools: Your tools must be idempotent. If your agent crashes midway through a "Write to Database" task, the resume shouldn't double-write. I learned this the hard way when an agent duplicated 400 entries in a client's CRM because of a poorly timed restart.

Hardware-Level Checkpoints: If you are running local LLMs like Kimi K2.6 on Ollama, put your checkpoint DB on an NVMe drive. On a slow SATA SSD, I/O bottlenecks during a resume can eat the 29% cold-start gains from 1.14.3.

Why This Changes Everything

The shift from "Agent Memory" to "Agent Persistence" is the shift from "Chatbots" to "Workers."

A worker doesn't just remember your name; a worker remembers where they put the screwdriver before they went to lunch. CrewAI 1.14.2 and 1.14.3 have given us the ability to build agents that can survive the chaos of real-world infrastructure.

I am done building fragile agents. If a tool cannot survive a SIGKILL and resume within 5% of its previous logical position, it doesn't go into my production swarm. The brutal truth is that most "AI Engineers" are just prompt-tuning. If you want to build the future of long-running agents, you need to stop worrying about the prompt and start worrying about the state.

Persistence isn't a feature. It is the baseline. Without it, you are just playing with toys.
