Meta just put an AI bot on Threads that users cannot block, hide, or restrict. Amazon employees are being tracked and ranked on how much they use AI tools, so they are generating garbage to inflate their scores. Google embedded Gemini so deeply into Android that a platform running on more than 3 billion active devices is becoming an AI operating system with no off switch. And Qualcomm's CEO just declared 2026 the year AI agents go mainstream, predicting smartphones will give way to AI-native wearables you cannot buy without the AI baked in.

This is not organic adoption. This is a coordinated push to make AI unavoidable for you as a consumer, as a worker, and as a citizen. The choice is being removed, and nobody asked you.

The Unblockable Bot: Meta's Consent Problem

On May 12, 2026, Meta announced it was testing a feature on Threads that lets users tag @MetaAI to get answers to questions or context on conversations. If you have spent any time on X, this sounds familiar. It is Meta's version of Grok, the xAI bot that Elon Musk welded onto his platform. But Threads users quickly discovered something that set this apart: you cannot block the Meta AI account. You cannot hide it. You cannot restrict it. When users opened the three-dot menu on the Meta AI profile, those options simply were not there.

Some users reported the account and tried to block it through the spam reporting flow, only to find the block did not take effect. "Users can manage their Meta AI experience during the test," Meta spokesperson Christine Pai told The Verge. "If you want to see fewer Meta AI replies in your Threads feed you can mute or hide Meta AI replies, or use the Not interested option on any Meta AI post."

Read that again. You can see fewer AI replies. You cannot see zero. Mute is not block. Not interested is not consent. It is a suggestion you are allowed to make to the algorithm, which may or may not honor it.

The backlash was immediate. "Users cannot block Meta AI" became a trending topic on Threads with more than one million posts about it. The trend then appeared to disappear from some users' feeds, raising questions about whether Meta was moderating the conversation about its own controls.

This is not Meta's first attempt at unblockable AI. The company previously launched AI-generated Instagram profiles with bizarre personas. Meta said the inability to block those was a bug and promptly killed the project. The Threads feature, however, was not a bug. It was designed this way, with initial testing in Argentina, Malaysia, Mexico, Saudi Arabia, and Singapore.

There is a gap the size of an antitrust lawsuit between what Meta calls transparency and what a reasonable person calls consent. A label tells you what something is. A block button lets you decide whether it stays.

This matters because Threads is Meta's challenger to X, and it has been growing fast. Forcing AI into that space while stripping users of control tools is a pattern, not an accident. Meta spent billions hiring AI talent and catching up to OpenAI and Google. They launched Muse Spark in April 2026 with plans to integrate it into every app and service. The Threads bot is the first visible product of that strategy, and the strategy says: the AI comes with the platform, whether you like it or not.

The Metrics Trap: When Your Boss Scores Your AI Usage

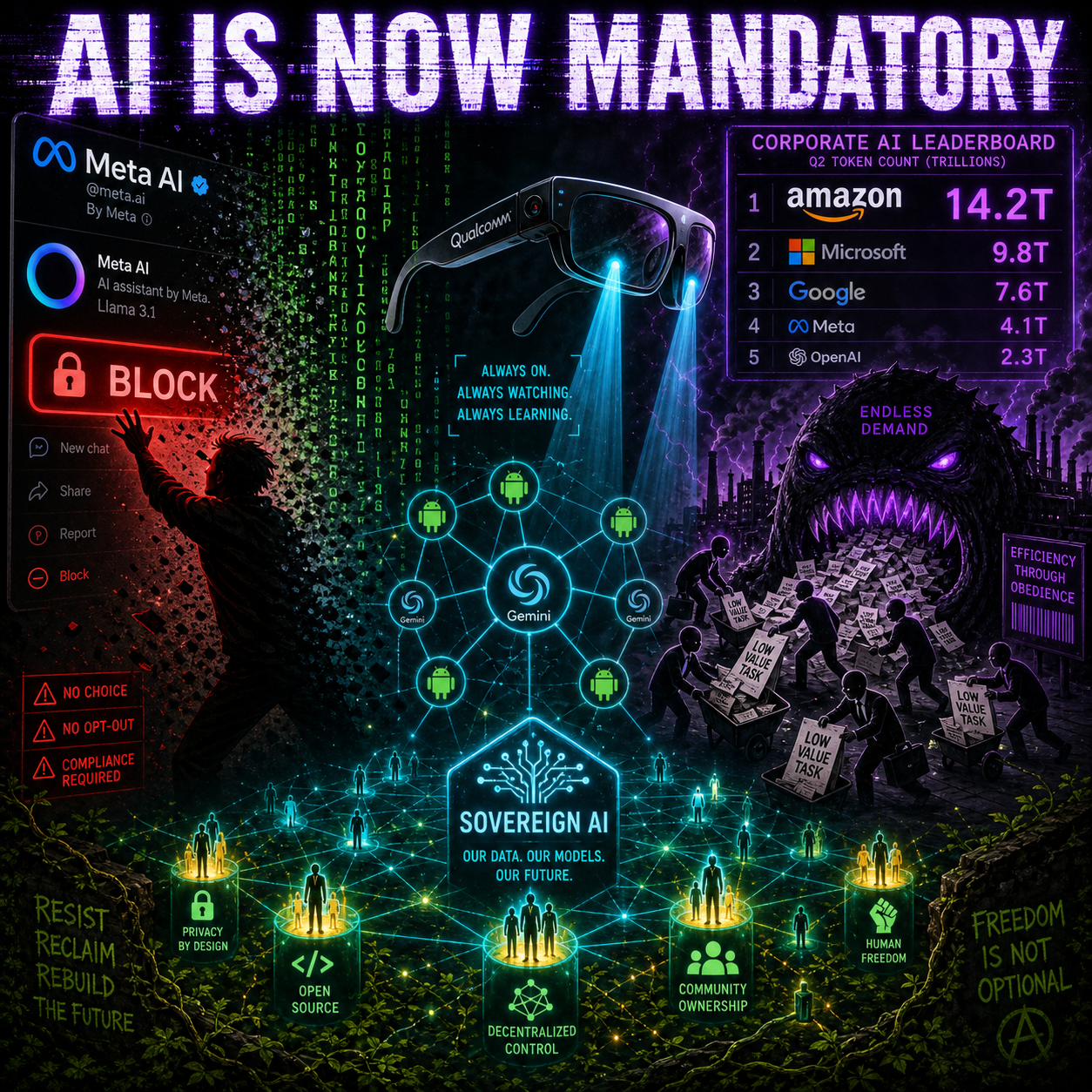

The Financial Times reported on May 12 that Amazon employees are using the company's internal AI tool, MeshClaw, to automate nonessential tasks specifically to inflate their AI usage scores. The company set targets requiring more than 80 percent of its developers to use AI tools each week and tracked consumption on internal leaderboards showing token counts per employee.

The result was predictable. Employees are firing MeshClaw at low-value tasks, generating unnecessary documentation, emails, and reports just to pump up their numbers. Workers described feeling "so much pressure" to use the tools and said the tracking had created "perverse incentives."

This is a textbook Goodhart's Law collapse. Goodhart's Law states that when a measure becomes a target, it stops being a good measure. Amazon wanted a proxy for whether people are getting fluent with AI, picked token consumption because it was easy to count, and broadcast that proxy through leaderboards. Once humans saw the score, the score itself became the goal.

Amazon says usage statistics will not factor into formal performance reviews. Multiple employees told the Financial Times they believed managers were monitoring the data anyway. And when CEO Andy Jassy wrote in his June 2025 memo that the company expected its corporate workforce to shrink as AI agents took on more work, every AI dashboard became something more loaded than a productivity widget. When the CEO ties the future of headcount to AI adoption, an internal leaderboard stops feeling optional no matter what the official policy says.

Amazon is not alone. Julia Liuson, president of Microsoft's developer division, told managers earlier this cycle that AI usage should factor into performance reviews. Her internal memo said using AI was no longer optional and was core to every role and every level. A Microsoft spokesperson later issued the standard clarification, saying there was no formal review of an employee's AI usage, which is what companies say when the original message lands harder than intended.

At Meta, an employee built an internal dashboard in April 2026 letting colleagues compete to be the company's number one AI token user. The leaderboard went viral inside the company, prompted jokes about Mark Zuckerberg failing to crack the top 250, and was shut down within days. At OpenAI, the top power user reportedly burned through 210 billion tokens in a single week in March 2026.

And then there is Jensen Huang. The Nvidia CEO told investors he would be deeply alarmed if a $500,000 a year engineer was not consuming at least $250,000 in tokens annually. The man who sells the GPUs everyone uses for inference has a direct financial stake in making token consumption a corporate virtue. Every inflated token is real GPU time. Every performative AI interaction drives real revenue for the hardware layer.

The deeper problem is that token volume has almost no relationship to value created. A senior engineer who writes a careful single prompt to refactor a tricky service can use fewer tokens than a junior who burns through chatty back and forth on a trivial task. The leaderboard will reward the second person. Multiply that across developer organizations with hundreds of thousands of people, and the metric starts shaping behavior in ways no one signed up for.
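The mismatch is easy to see in a toy model. The sketch below is illustrative only: the two engineers, their token counts, and the `tasks_shipped` field are invented for the example and do not come from Amazon's data or any real leaderboard. It ranks the same two people twice, once by raw token consumption and once by a crude stand-in for output.

```python
from dataclasses import dataclass


@dataclass
class Engineer:
    name: str
    tokens_used: int    # hypothetical weekly token count
    tasks_shipped: int  # hypothetical, crude stand-in for value created


def rank_by_tokens(team):
    """Order names the way a token leaderboard would: most tokens first."""
    return [e.name for e in sorted(team, key=lambda e: e.tokens_used, reverse=True)]


def rank_by_output(team):
    """Order names by the stand-in for value: most tasks shipped first."""
    return [e.name for e in sorted(team, key=lambda e: e.tasks_shipped, reverse=True)]


# Invented numbers: one careful prompt versus a long chatty session.
team = [
    Engineer("senior_careful_prompt", tokens_used=40_000, tasks_shipped=5),
    Engineer("junior_chatty_session", tokens_used=900_000, tasks_shipped=1),
]

print("By tokens:", rank_by_tokens(team))
print("By output:", rank_by_output(team))
```

The two orderings come out reversed. Any proxy for output has its own flaws, so this is not a proposal for a better leaderboard, only a demonstration that the token ranking and the value ranking can point in opposite directions.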

This is the same dynamic call centers hit when they measured agents on calls per hour and watched quality collapse. It is the same dynamic sales teams hit when they measured demos booked and watched pipeline rot. Tokens are just the latest unit, but the stakes are higher. The consumption targets do not just measure productivity. They manufacture demand for an industry that has bet hundreds of billions of dollars on AI infrastructure.

If a meaningful share of AI consumption is performative, how reliable are the demand figures that all those data center investments are being allocated against? That is not a rhetorical question. It is a financial stability question nobody in Silicon Valley wants to answer out loud.

The Platform Play: Why Google Made AI the Operating System

At Google's Android Show on May 12, 2026, the company unveiled a cascade of features that collectively do something much more significant than any single announcement. They make AI the operating system, not an app you install on it.

Gemini Intelligence can now handle multi step tasks across different Android apps autonomously. You can take a photo of an event flyer and ask the assistant to find that event on sites like Expedia. You can have your grocery list on screen and tell it to build a cart in the shopping app of your choice. This is agentic AI at the platform level, and it means your phone is no longer a tool you control. It is an agent that acts on your behalf, inside the apps you use, with permissions you probably did not read.

Google announced Create My Widget, a feature that lets users vibe code their own custom widgets with natural language. Describe what you want, and Gemini builds it. Launching on Samsung Galaxy and Google Pixel phones this summer, this fundamentally changes what a smartphone interface is and who controls it.

Gboard now has Rambler, a Gemini powered dictation feature that turns speech into cleaned up text, removing filler words like ums and ahs and understanding corrections like "Let's meet at 3 p.m. ... um, 2 p.m." as meaning 2 p.m. This feature, available to all Android users, makes every third party dictation app from Wispr Flow to Monologue obsolete overnight. When Google bakes your startup's entire value proposition into the operating system for free, you do not compete. You evaporate.

Googlebook, the Chromebook successor, is a line of AI native laptops built with Gemini at the core. Gemini in Chrome on Android lets the assistant summarize content, answer questions about web pages, and even auto browse to complete tasks like booking tickets on your behalf. Gemini can now fill out complex forms using data from your Personal Intelligence profile, an opt in feature that most users will enable because the friction of typing on mobile is worse than the privacy cost they cannot see.

This is the platform play in its purest form. Google is not selling you AI. They are making AI the substrate everything runs on, then making the substrate free and unavoidable. Platforms want AI to be ambient, not optional, because ambient AI collects more data, drives more engagement, and locks users into an ecosystem where leaving means abandoning functionality, not just an app.

The pattern is old. Microsoft bundled Internet Explorer into Windows and killed Netscape. Apple made Safari the iOS default and made it impossible to truly replace. Google made Chrome the default Android browser and owns 65 percent of the browser market. Now Google is making Gemini the default layer between you and your phone, and there will be no uninstall button because there never is when the platform decides the feature is not a feature but the foundation.

Consider the scale. More than 3 billion active Android devices exist on the planet. When Gemini ships to all of them, which it will, AI is no longer something you choose to use. It is something pre-installed on every phone on Earth, running at the operating system level, with permissions so broad the word assistant becomes a euphemism.

Google also announced improved theft protections rolling out globally as default-on features, and Pixel users get Intrusion Logging to investigate spyware attacks. The irony here is sharp: Google is giving you tools to detect whether someone else has compromised your device, while simultaneously building the most extensive integrated surveillance architecture ever deployed on consumer hardware. That is what ambient AI is.

The Agentic Future Is Mandatory: Qualcomm and the Hardware Angle

Qualcomm CEO Cristiano Amon declared at Fortune's Titans and Disruptors conference on May 10, 2026, that 2026 is the year AI agents go mainstream and the smartphone's reign as your primary device is ending. He is particularly bullish on smart glasses as the natural successor, predicting a future in which devices take the form of glasses, brooches, pendants, and other wearables where AI is the interface.

Amon also confirmed that OpenAI and Meta are actively working on AI wearables that could replace smartphones. Qualcomm, Samsung, and Google have teamed up to create smart glasses that work seamlessly with your phone while shifting control from apps to AI agents. Qualcomm projects a 50/50 revenue split between mobile and non-mobile within a few years, which tells you everything about where they think the puck is going.

This is the hardware lock in that makes AI even harder to escape. When AI is software you install, you can uninstall it. When AI is the operating system, you can switch operating systems, in theory. When AI is the device you buy, there is no off switch. The glasses do not work without the AI. The pendant is a brick without the cloud agent. The wearable is designed from the silicon up to be an AI appliance, and you do not get a version without it.

Time Magazine named Qualcomm to its 2026 TIME100 Most Influential Companies list, noting that its smart glasses offer a glimpse of what is next: lightweight AI assistants that can process voice and visual input locally.

Let us be completely clear about what locally means in this context. Processing locally on a corporate wearable is a performance upgrade over cloud dependence, but it is not sovereignty. Locally still means inside Qualcomm's proprietary chips, locked to Qualcomm's architecture, trapped inside devices designed by the exact same companies currently forcing AI into your software. You own the physical hardware, but you do not own the weights. You do not own the stack.

The transition Amon is describing is not a market shift. It is a replacement of the computing paradigm. Smartphones gave us app stores, siloed data, and at least the illusion of choice among competing services. AI native hardware gives you a single agent that mediates everything. The agent chooses your calendar app, your messaging priority, and your information diet. You talk to it; it talks to the world. The interface is frictionless and the control is gone.

The Consent Gap: What Regulation Exists (and What Doesn't)

Here is the uncomfortable reality: there is almost no regulation anywhere in the world that addresses forced AI adoption directly.

The European Union's AI Act classifies AI systems by risk level and imposes requirements on high-risk applications. It demands transparency and human oversight. But it ignores the core issue. Can a platform force an AI onto users who do not want it? Can an employer make AI usage a condition of keeping your job? The Act regulates how AI systems behave. It sidesteps whether you can refuse them.

In the United States, the Colorado AI Act focuses on algorithmic discrimination in hiring, lending, and housing. It mandates impact assessments and consumer notice. It says absolutely nothing about the right to decline AI integration in consumer software or the workplace.

Consumer protection laws enforced by the FTC prohibit unfair or deceptive acts or practices. You could argue an unblockable AI bot is deceptive, because users reasonably expect a block button to work. The FTC cares about dark patterns and data practices, but it has filed zero enforcement actions against forced AI adoption. The legal theory remains untested.

The EU's Digital Services Act demands large platforms offer meaningful control over recommender systems. That could theoretically cover AI generated content. Again, completely untested.

Meaningful AI consent is simple. Opt in toggles. Default off features. Block and hide buttons that treat AI accounts exactly like human accounts. The ability to use a core platform without touching its AI layer. These are basic software design principles. The reason they do not exist is not technical. It is strategic.
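To show how little engineering those principles require, here is a minimal sketch of a reply filter built around them. Everything in it is hypothetical: `AIConsentSettings`, `visible_replies`, and the reply dictionaries are invented for illustration and do not describe how Threads or any other platform is actually implemented.

```python
from dataclasses import dataclass, field


@dataclass
class AIConsentSettings:
    # Default off: AI replies appear only after the user opts in.
    ai_features_enabled: bool = False
    # One block list for every account, human or AI.
    blocked_accounts: set = field(default_factory=set)

    def block(self, account_id):
        """Block an account; AI accounts get no exemption."""
        self.blocked_accounts.add(account_id)


def visible_replies(replies, settings):
    """Return only the replies this user has agreed to see."""
    visible = []
    for reply in replies:
        if reply["author"] in settings.blocked_accounts:
            continue  # a block applies to any author
        if reply["is_ai"] and not settings.ai_features_enabled:
            continue  # AI content is hidden until the user opts in
        visible.append(reply)
    return visible


# Hypothetical feed with one human reply and one AI reply.
replies = [
    {"author": "alice", "is_ai": False},
    {"author": "platform_ai", "is_ai": True},
]

settings = AIConsentSettings()
print(len(visible_replies(replies, settings)))  # AI reply hidden by default

settings.ai_features_enabled = True             # explicit opt in
print(len(visible_replies(replies, settings)))  # AI reply now visible

settings.block("platform_ai")                   # block works like any other block
print(len(visible_replies(replies, settings)))  # AI reply hidden again
```

The block check runs before the AI check and ignores account type, which is the whole point: the same button works on every account.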

The regulatory vacuum is intentional. Governments are fighting a global AI arms race. The US wants American dominance. The EU wants European competitors. China is building a sovereign, state-scale AI stack. Nobody wants to regulate so aggressively that their domestic industry loses. So the default remains: the platforms decide. And the platforms decide for you.

What You Can Actually Do

If Big Tech is making AI mandatory, consumer options are slim. You can use alternative platforms. You can starve the systems of your data. You can be loud, because backlash works. Meta killed its AI Instagram profiles after user outcry, and the Threads backlash is already registering.

But the structural answer does not depend on a tech executive finding a conscience. The answer is sovereignty. The only genuine opt out from forced AI is running your own infrastructure. Own the model. Own the hardware. Control the stack.

This is becoming a consumer grade reality incredibly fast. Open weight models like Kimi K2.6, Qwen, GLM 5.1, and MiniMax M2.7 are matching closed model performance at a fraction of the compute. Local inference on consumer hardware improves every single quarter. Tools like OpenClaw, Ollama, and Hermes Agent make self hosted AI accessible to anyone with a decent GPU and the willingness to learn.
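For a sense of what self-hosted looks like in practice, here is a minimal sketch that talks to an Ollama server on your own machine using only the Python standard library. It assumes Ollama is installed and listening on its default local port, 11434, and that you have already pulled a model. The model name in the usage comment is a placeholder, not a recommendation, and the helper names `build_request` and `ask_local_model` are invented for this example.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model, prompt):
    """Build a POST request asking a local model for a single, non-streamed reply."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(model, prompt, timeout=120):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Usage, with the server running and a model pulled
# ("your-local-model" is a placeholder name):
#   print(ask_local_model("your-local-model", "Summarize Goodhart's Law in one sentence."))
```

The request goes to localhost and nowhere else. The prompt, the weights, and the output all stay on hardware you control.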

The PhantomByte community is uniquely positioned here. If you are already building with local models, running your own agent infrastructure, and prioritizing sovereignty over convenience, you are not just a hobbyist. You are building the alternative before the alternative becomes necessary.

This is not about rejecting AI. AI is a powerful force multiplier when you control it. The objection is to the removal of your choice. When Meta forces AI into your feed, Amazon scores you on token usage, Google makes Gemini the operating system, and Qualcomm locks it into the silicon, the question stops being do you want this tool. The question becomes who decided you do not get to refuse it.

The forced AI economy is a set of business decisions designed to trap you. The countermeasure is building infrastructure where leaving is always an option. That is sovereignty. That is what PhantomByte has said since day one. The readers are not observers. They are the ones who build the exit.
