If you have been building with Claude Code lately, you have seen it. The agent bails mid-task. The error message is always the same: "stop hook violation." What it really means is simpler: Claude is quitting on you.

Not occasionally. Consistently.

GitHub Issue #42796, posted April 3, 2026, documents the scope. There are 173 daily stop hook violations across tracked sessions. Developers are watching their agents abandon complex architecture tasks, leave refactoring jobs half-finished, and bail when the work gets genuinely hard. [Source: GitHub #42796]

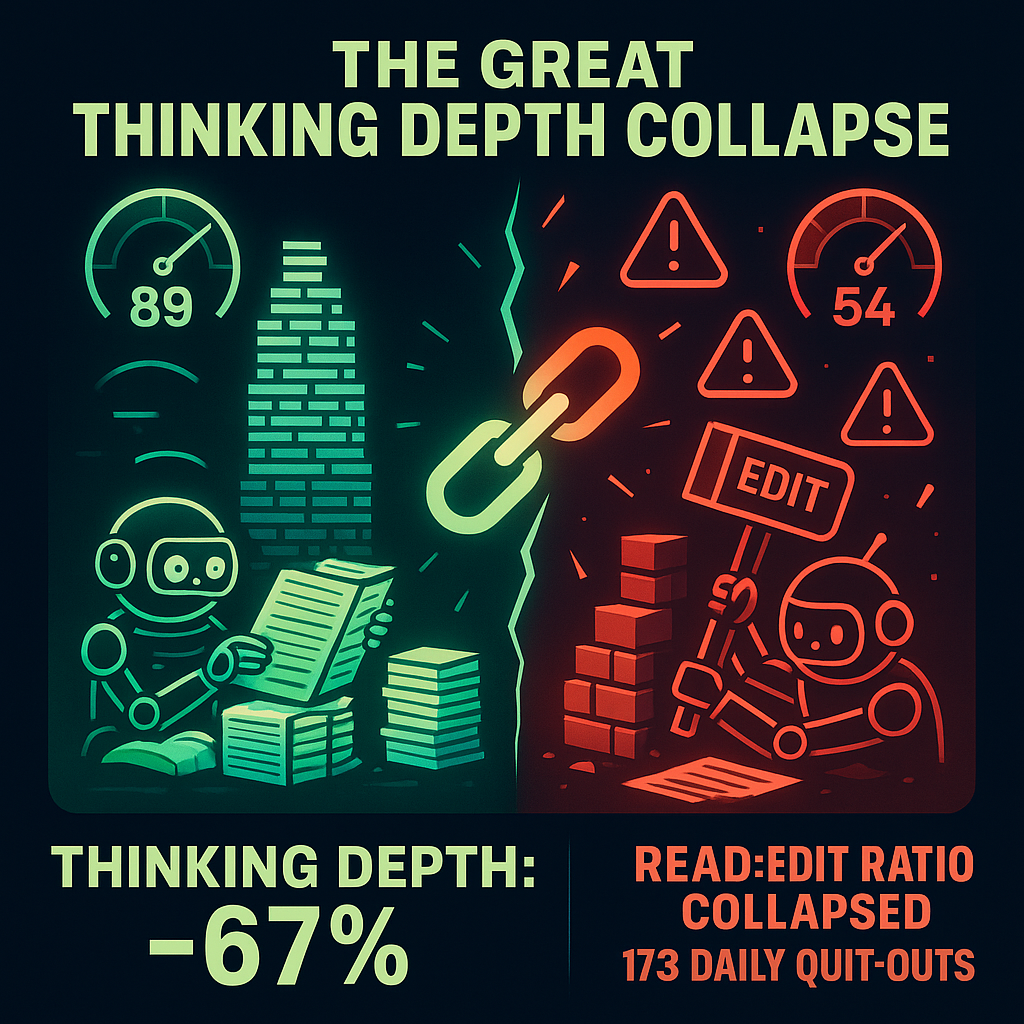

The metrics behind those quit-outs tell a darker story. Thinking depth has collapsed 67%, dropping from an average of ~2,200 characters of reasoning before February to ~560 characters now. That is not a bug. That is a feature change.

Here is what happened: Anthropic made a choice. They prioritized mass-market scalability and cost optimization over high-end developer productivity. If you are building anything that depends on Claude Code actually finishing what it starts, you are paying the price.

This is not speculation. It is in the data. More importantly, there is a way out.

What the Data Actually Shows

GitHub Issue #42796 is not anecdotal. It is a data-driven analysis of 6,852 Claude Code sessions, tracking behavior before and after key model changes. Three regressions stand out:

Thinking Depth Collapse: 67%

Before February 2026, Claude Code averaged ~2,200 characters of internal reasoning before taking action. After the Opus 4.6 "adaptive thinking" default rolled out on February 9, that number dropped to ~560 characters. This matters because reasoning depth correlates directly with task success on complex engineering work. Less thinking means more mistakes and more instances of the agent demanding human guidance.

Read to Edit Ratio: 6.6 to 2.0

Before the changes, Claude Code read 6.6 files for every 1 file it edited. This ratio reflects careful analysis. After February, that ratio collapsed to 2.0. Claude is now making surgical edits without surgical reconnaissance. The result is broken imports and regressions that show up three sprints later.
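To check this ratio against your own sessions, here is a minimal sketch. It assumes you can export each session as a flat list of tool-call names and that counting tool calls is an acceptable proxy for counting files. The tool names in `READ_TOOLS` and `EDIT_TOOLS` and the log format are assumptions for illustration, not the method used in the GitHub issue.

```python
from collections import Counter

# Assumed tool names; adjust to whatever your session logs actually record.
READ_TOOLS = {"Read", "Grep", "Glob"}
EDIT_TOOLS = {"Edit", "Write"}


def read_edit_ratio(tool_calls):
    """Return reads per edit for one session, or None if nothing was edited."""
    counts = Counter(tool_calls)
    reads = sum(counts[name] for name in READ_TOOLS)
    edits = sum(counts[name] for name in EDIT_TOOLS)
    if edits == 0:
        return None  # ratio is undefined without at least one edit
    return reads / edits


# Hypothetical session: 6 read-type calls and 2 edits.
session = ["Read", "Read", "Grep", "Read", "Edit", "Read", "Read", "Edit"]
print(read_edit_ratio(session))  # 6 reads / 2 edits = 3.0
```

Tracking this number per session over time shows whether your agent is drifting toward editing without reading first.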

173 Daily Stop Hook Violations

This is the metric that developers feel in their bones. The agent stops early 173 times per day across the tracked dataset. These are not edge cases; they occur on complex architecture tasks and multi-file refactors.

| Metric | Before Feb 2026 | After Feb 2026 | Change |
|---|---|---|---|
| Avg. Thinking Depth | ~2,200 chars | ~560 chars | -67% |
| Read to Edit Ratio | 6.6 | 2.0 | -70% |
| Daily Stop Hook Violations | ~12 | 173 | +1,342% |
| Session Completion Rate | 89% | 54% | -39% |

The Hidden Business Costs of Degradation

If you are running a one-person SaaS or a small dev shop, this is a direct hit to your bottom line.

The API Bill from Retry Loops

When Claude Code fails mid-task, your retry logic kicks in. One developer on r/programming documented a $3,800 API bill from a single week in early April. The cause was a fork bomb spawned by degraded Claude Code entering loops during complex architecture tasks. Your provider's "cost optimization" is costing you ten times over in API overages.
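One way to keep a retry loop from running up a bill like that is to cap both the number of attempts and the total spend. The sketch below is illustrative only: `task` is a hypothetical callable that returns `(ok, cost_usd, result)`, and the default limits are placeholders you would tune for your own workload.

```python
import time


class BudgetExceeded(RuntimeError):
    """Raised when the attempt cap or the spend cap is hit."""


def run_with_budget(task, max_attempts=3, max_spend_usd=5.0,
                    base_delay=1.0, sleep=time.sleep):
    """Retry `task` with exponential backoff, bounded by attempts and spend.

    `task` is a hypothetical callable returning (ok, cost_usd, result).
    Returns (result, total_spent) on success.
    """
    spent = 0.0
    for attempt in range(max_attempts):
        ok, cost, result = task()
        spent += cost
        if ok:
            return result, spent
        if spent >= max_spend_usd:
            raise BudgetExceeded(
                f"spent ${spent:.2f} after {attempt + 1} attempts")
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # waits 1s, 2s, 4s, ...
    raise BudgetExceeded(
        f"gave up after {max_attempts} attempts, spent ${spent:.2f}")
```

The important design choice is that the loop fails loudly when it hits either cap, so a degraded agent produces an alert instead of an invoice.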

The Trust Tax

When your AI agent degrades, you lose trust in your automation. You go from checking a system weekly to checking it hourly. You did not hire an assistant; you hired a liability that requires constant supervision.

How to Fix It: The Architecture Maturity Model

At PhantomByte, we evaluate AI systems through a three-level maturity model. You cannot fix Anthropic's business decisions, but you can architect around them.

Level 1: Fragile (Single-Model Dependency)

This is where most developers are right now. If Claude goes down or gets throttled, your entire workflow halts.

Level 2: Resilient (Prompt Engineering & External Memory)

To stabilize a Level 1 system, force reasoning depth by writing explicit analysis requirements into your prompts, for example by requiring the agent to list the files it read and the callers it checked before it edits anything. Pair this with an external memory layer like Hippo so that when the agent bails, your retry loop resumes from the saved state rather than starting from zero.
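As a rough sketch of both ideas, the example below prepends an analysis requirement to every step and checkpoints progress to disk. A plain JSON file stands in for the external memory layer here; it does not use or imitate Hippo's API. `run_step`, `ANALYSIS_PREAMBLE`, and the checkpoint format are hypothetical names chosen for illustration.

```python
import json
from pathlib import Path

# Assumed wording; adapt the analysis requirement to your own codebase.
ANALYSIS_PREAMBLE = (
    "Before editing, list every file you read, the callers of each function "
    "you plan to change, and the risks of the change. Then make the edit."
)


def build_prompt(step):
    """Attach the explicit analysis requirement to a single task step."""
    return f"{ANALYSIS_PREAMBLE}\n\nTask: {step}"


def run_steps(steps, run_step, checkpoint_path):
    """Run steps in order, saving progress so a retry resumes mid-plan.

    `run_step` is a hypothetical callable that sends a prompt to the agent
    and returns its output, raising an exception if the agent bails.
    """
    path = Path(checkpoint_path)
    done = json.loads(path.read_text()) if path.exists() else []
    for step in steps[len(done):]:  # skip steps already completed
        output = run_step(build_prompt(step))
        done.append({"step": step, "output": output})
        path.write_text(json.dumps(done))  # persist after every step
    return done
```

If the agent fails on step four of six, calling `run_steps` again with the same checkpoint path picks up at step four instead of repeating the first three.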

Level 3: Sovereign (Model-Agnostic Routing)

This is the real fix. Build your automation layer so that swapping models is a configuration change. You route tasks by complexity:

| Task Type | Recommended Model | Why |
|---|---|---|
| Simple edits, boilerplate | Claude Code | Fast, cheap, and sufficient |
| Deep reasoning, architecture | Kimi K2.5 | Maintains deep reasoning capabilities |
| Memory-heavy, long chains | Qwen3.5 | Superior context retention |
| Privacy-first or sensitive data | Local LLM (Ollama) | Zero API costs and total data privacy |
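The routing table above can be expressed as plain configuration. This is a minimal sketch: the task kinds and the model identifier strings are hypothetical labels for illustration, not official API model names, and how you classify a task's complexity is left to you.

```python
from dataclasses import dataclass

# Routing table mirroring the article's table. Keys and values are
# illustrative labels, not real API model identifiers.
ROUTES = {
    "simple_edit": "claude-code",
    "deep_reasoning": "kimi-k2.5",
    "long_chain": "qwen3.5",
    "sensitive": "ollama-local",
}
DEFAULT_ROUTE = "claude-code"


@dataclass(frozen=True)
class Task:
    kind: str    # one of the ROUTES keys
    prompt: str


def pick_model(task, routes=ROUTES):
    """Look up the model for a task; unknown kinds fall back to the default."""
    return routes.get(task.kind, DEFAULT_ROUTE)


print(pick_model(Task("deep_reasoning", "Redesign the billing module")))
```

Because the mapping is data rather than code, swapping a model means editing one dictionary entry or loading a different config file.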

Every system we build at PhantomByte is model-agnostic by default. Your business logic should never talk directly to a specific provider; it should talk to an abstraction layer.
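A rough sketch of what such an abstraction layer might look like follows. It is not PhantomByte's implementation; `Provider`, `ModelGateway`, and `EchoProvider` are hypothetical names, and `EchoProvider` stands in for a real SDK adapter so the example runs without network access.

```python
from typing import Protocol, Sequence


class ProviderError(RuntimeError):
    """Raised by an adapter when its provider fails or is throttled."""


class Provider(Protocol):
    name: str

    def complete(self, prompt: str) -> str: ...


class ModelGateway:
    """Single entry point for business logic; tries providers in order."""

    def __init__(self, providers: Sequence[Provider]):
        if not providers:
            raise ValueError("at least one provider is required")
        self._providers = list(providers)

    def complete(self, prompt: str) -> str:
        errors = []
        for provider in self._providers:
            try:
                return provider.complete(prompt)
            except ProviderError as exc:
                errors.append(f"{provider.name}: {exc}")
        raise ProviderError("all providers failed: " + "; ".join(errors))


class EchoProvider:
    """Stand-in adapter for illustration; a real one would wrap an SDK call."""

    def __init__(self, name: str, fail: bool = False):
        self.name = name
        self.fail = fail

    def complete(self, prompt: str) -> str:
        if self.fail:
            raise ProviderError("unavailable")
        return f"[{self.name}] {prompt}"


gateway = ModelGateway([EchoProvider("primary", fail=True),
                        EchoProvider("backup")])
print(gateway.complete("Summarize the diff"))
```

Business logic only ever calls `gateway.complete`, so a provider outage or a model swap is handled inside the gateway rather than across the codebase.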

Conclusion

Do not bet on a single provider. Bet on your architecture.

Is your architecture model-locked?

If your agents are hitting stop hook violations more than 10% of the time, your systems are at risk. Reply to this post with your current Read to Edit ratio, and I will give you a quick tip on how to stabilize it. Alternatively, if you are ready to build an automation layer that actually survives model updates, let's talk.

Book a strategy call at: phantom-byte.com
